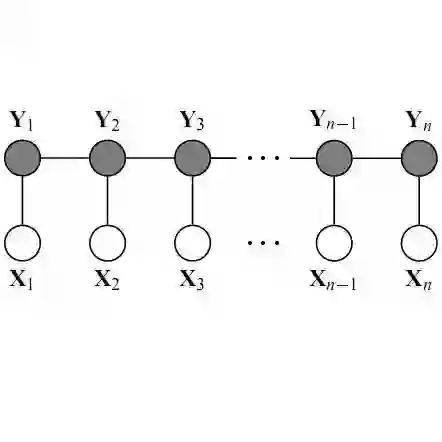

Random forests are an important method for ML applications because they broadly outperform competing methods on structured tabular data. We propose a method for transfer learning in nonparametric regression using a centered random forest (CRF) with distance covariance-based feature weights, assuming that the unknown source and target regression functions differ in only a few features (sparse difference). Our method first uses a CRF trained on the source domain to predict the response in the target domain and computes the residuals. Then, we fit another CRF to the residuals, but with feature splitting probabilities proportional to the sample distance covariance between the features and the residuals in an independent sample. We derive an upper bound on the mean squared error rate of the procedure as a function of the sample sizes and the difference dimension, theoretically demonstrating the benefits of transfer learning in random forests. In simulations, we show that the results obtained for CRFs also hold numerically for the standard random forest (SRF) method with data-driven feature split selection. Beyond transfer learning, our results also show that distance covariance-based weights can improve RF performance in some situations. Our method shows significant gains in predicting the mortality of ICU patients in target hospitals with fewer beds, using a large multi-hospital dataset of electronic health records for 200,000 ICU patients.
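To make the two-stage procedure concrete, here is a minimal NumPy sketch under assumptions that the abstract does not fix. Features are scaled to [0, 1]. Each centered tree splits every cell at its midpoint along a feature drawn from the split probabilities, down to a fixed depth. Empty leaves predict 0, and the source CRF uses uniform split probabilities. The weights are taken proportional to the square root of the V-statistic sample distance covariance. The function names (`sample_dcov2`, `dcov_weights`, `leaf_ids`, `crf_predict`, `transfer_crf`), tree depths, tree counts and the synthetic data are hypothetical illustration choices, not the authors' implementation.

```python
import numpy as np


def sample_dcov2(x, y):
    """Squared sample distance covariance (V-statistic) of two 1-D samples."""
    a = np.abs(x[:, None] - x[None, :])  # pairwise distances |x_i - x_k|
    b = np.abs(y[:, None] - y[None, :])
    # Double-center: subtract row and column means, add back the grand mean.
    A = a - a.mean(axis=0, keepdims=True) - a.mean(axis=1, keepdims=True) + a.mean()
    B = b - b.mean(axis=0, keepdims=True) - b.mean(axis=1, keepdims=True) + b.mean()
    return float((A * B).mean())


def dcov_weights(X, r):
    """Split probabilities proportional to dCov(feature_j, residuals) (assumed: dCov, not dCov^2)."""
    dc = np.array([np.sqrt(max(sample_dcov2(X[:, j], r), 0.0)) for j in range(X.shape[1])])
    if dc.sum() == 0:
        return np.full(X.shape[1], 1.0 / X.shape[1])
    return dc / dc.sum()


def leaf_ids(X, feats, depth):
    """Leaf index of each row of X (features in [0, 1]) in one centered tree."""
    n, d = X.shape
    lo, hi = np.zeros((n, d)), np.ones((n, d))
    node, rows = np.zeros(n, dtype=int), np.arange(n)
    for _ in range(depth):
        j = feats[node]                          # split feature of the current node
        mid = (lo[rows, j] + hi[rows, j]) / 2    # centered split: midpoint of the cell
        left = X[rows, j] < mid
        hi[rows[left], j[left]] = mid[left]
        lo[rows[~left], j[~left]] = mid[~left]
        node = np.where(left, 2 * node + 1, 2 * node + 2)
    return node


def crf_predict(X, y, Xq, p, depth, n_trees=100, seed=0):
    """Centered random forest: average of per-tree leaf means evaluated at Xq."""
    rng = np.random.default_rng(seed)
    size = 2 ** (depth + 1) - 1                  # total node count of a full tree
    preds = np.zeros(len(Xq))
    for _ in range(n_trees):
        feats = rng.choice(X.shape[1], size=2 ** depth - 1, p=p)  # data-independent splits
        lt, lq = leaf_ids(X, feats, depth), leaf_ids(Xq, feats, depth)
        sums = np.bincount(lt, weights=y, minlength=size)
        counts = np.bincount(lt, minlength=size)
        # Leaf means; empty leaves predict 0 (an assumed convention).
        means = np.divide(sums, counts, out=np.zeros(size), where=counts > 0)
        preds += means[lq]
    return preds / n_trees


def transfer_crf(Xs, ys, Xt, yt, Xt_ind, yt_ind, Xq, depth_s=8, depth_t=4):
    """Hypothetical two-stage transfer CRF; returns (predictions at Xq, split weights)."""
    d = Xs.shape[1]
    uniform = np.full(d, 1.0 / d)                # assumed: source CRF splits uniformly

    def f_src(Z):                                # same seed, so the same source trees
        return crf_predict(Xs, ys, Z, uniform, depth_s, seed=0)

    r = yt - f_src(Xt)                           # step 1: target residuals
    p = dcov_weights(Xt_ind, yt_ind - f_src(Xt_ind))  # step 2: weights on an independent sample
    # Step 3: residual CRF with dCov-weighted splits, added to the source fit.
    return f_src(Xq) + crf_predict(Xt, r, Xq, p, depth_t, seed=1), p


# Usage on synthetic data: the target differs from the source only in feature 2.
rng = np.random.default_rng(42)
d = 5
Xs = rng.uniform(size=(2000, d))
ys = Xs[:, 0] + 0.1 * rng.standard_normal(2000)


def f_t(X):
    return X[:, 0] + 3.0 * X[:, 2]


Xt, Xt_ind, Xq = (rng.uniform(size=(m, d)) for m in (300, 300, 500))
yt = f_t(Xt) + 0.1 * rng.standard_normal(300)
yt_ind = f_t(Xt_ind) + 0.1 * rng.standard_normal(300)

pred, p = transfer_crf(Xs, ys, Xt, yt, Xt_ind, yt_ind, Xq)
mse_transfer = np.mean((pred - f_t(Xq)) ** 2)
mse_source = np.mean((crf_predict(Xs, ys, Xq, np.full(d, 1 / d), 8) - f_t(Xq)) ** 2)
print("split probabilities:", np.round(p, 3))
print("MSE transfer:", mse_transfer, "MSE source only:", mse_source)
```

In this toy setting the distance covariance weights should concentrate on feature 2, the only feature in which the target differs from the source. The residual forest can then spend most of its few splits there, which is the intuition behind the sparse-difference assumption. Because centered trees draw their splits independently of the data, the dCov weights are the only data-driven ingredient in the residual stage.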
