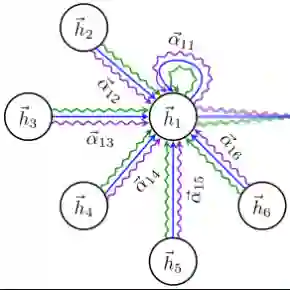

Graph Neural Networks (GNNs) have become fundamental in graph-structured deep learning. Key paradigms of modern GNNs include message passing, graph rewiring, and Graph Transformers. This paper introduces Graph-Rewiring Attention with Stochastic Structures (GRASS), a novel GNN architecture that combines the advantages of these three paradigms. GRASS rewires the input graph by superimposing a random regular graph, enhancing long-range information propagation while preserving structural features of the input graph. It also employs a unique additive attention mechanism tailored for graph-structured data, providing a graph inductive bias while remaining computationally efficient. Our empirical evaluations demonstrate that GRASS achieves state-of-the-art performance on multiple benchmark datasets, confirming its practical efficacy.
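The rewiring step described above can be illustrated with a minimal sketch. It is not the authors' implementation: the function names `random_regular_edges` and `superimpose`, the pairing-model sampler with rejection, and the choice to drop random edges that coincide with existing input edges are all assumptions made for illustration. The sketch samples a simple d-regular graph on the same node set and overlays it on the input graph, keeping the original edges intact and tracking the added edges separately.

```python
import random


def random_regular_edges(n, d, rng, max_tries=1000):
    """Sample a simple d-regular graph on nodes 0..n-1.

    Uses the pairing (configuration) model with rejection: each node gets
    d "stubs", the stubs are shuffled and paired up, and the whole sample
    is rejected if it contains a self-loop or a repeated edge.
    (Illustrative sampler; the paper's generator may differ.)
    """
    if (n * d) % 2 != 0 or d >= n:
        raise ValueError("need n * d even and d < n")
    for _ in range(max_tries):
        stubs = [v for v in range(n) for _ in range(d)]
        rng.shuffle(stubs)
        edges = set()
        valid = True
        for i in range(0, len(stubs), 2):
            u, v = stubs[i], stubs[i + 1]
            edge = (min(u, v), max(u, v))
            if u == v or edge in edges:
                valid = False
                break
            edges.add(edge)
        if valid:
            return edges
    raise RuntimeError("no simple regular graph found; increase max_tries")


def superimpose(input_edges, n, d, seed=0):
    """Overlay a random d-regular graph on an undirected input graph.

    Returns (original, added): the input edges are preserved unchanged,
    and `added` holds the random edges not already present in the input
    (an assumption of this sketch).
    """
    rng = random.Random(seed)
    original = {(min(u, v), max(u, v)) for u, v in input_edges}
    added = random_regular_edges(n, d, rng) - original
    return original, added


if __name__ == "__main__":
    # A path graph on 8 nodes has diameter 7; the added random edges
    # create shortcuts between distant nodes.
    path = [(i, i + 1) for i in range(7)]
    original, added = superimpose(path, n=8, d=3, seed=0)
    print("original edges:", sorted(original))
    print("added edges:   ", sorted(added))
```

Keeping the added edges in a separate set lets a downstream model distinguish them from the input edges, which is one way to preserve the structural features of the original graph while still gaining long-range connections.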
