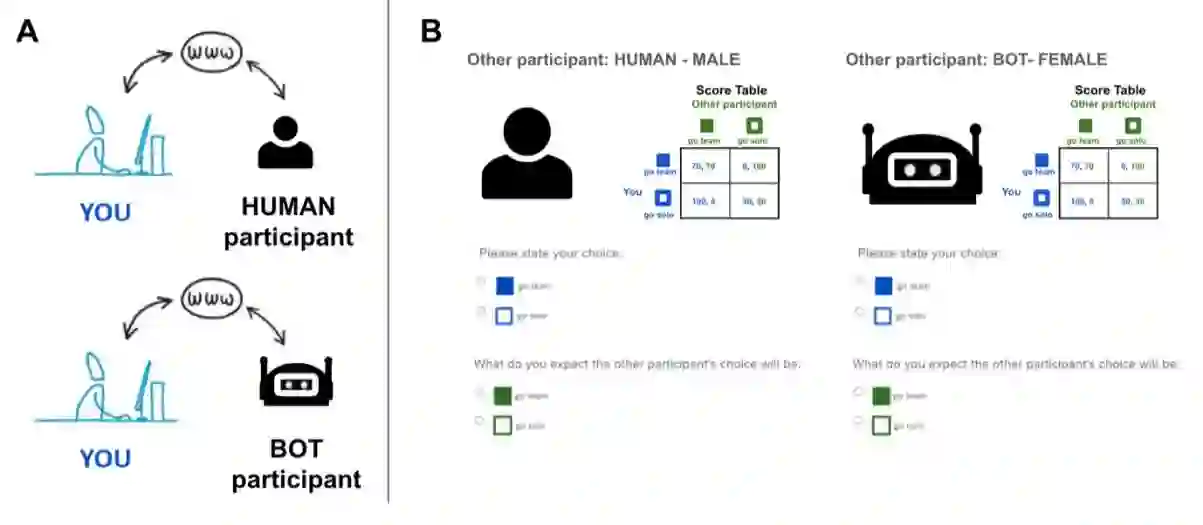

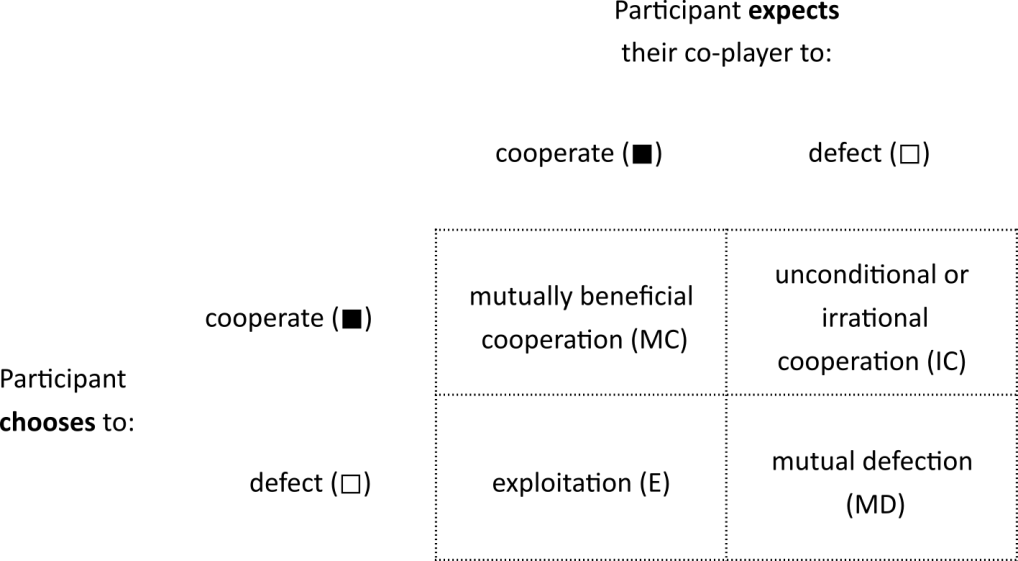

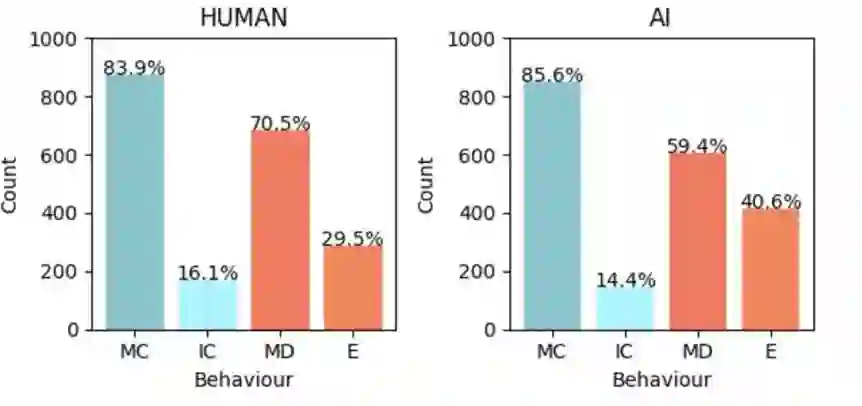

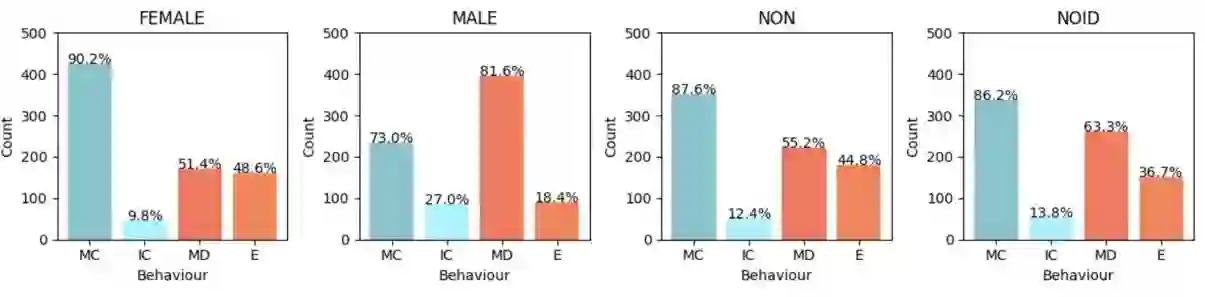

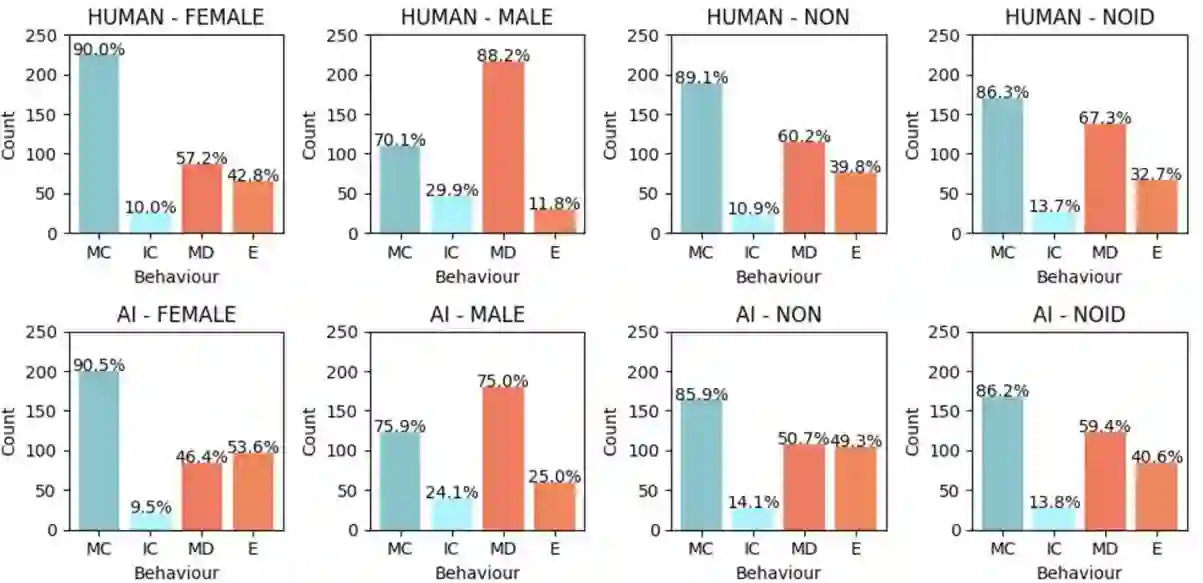

Cooperation between humans and machines is increasingly vital as artificial intelligence (AI) becomes more integrated into daily life. Research indicates that people are often less willing to cooperate with AI agents than with humans and more readily exploit AI for personal gain. While prior studies have shown that giving AI agents human-like features influences people's cooperation with them, the impact of an AI agent's assigned gender remains underexplored. This study investigates how human cooperation varies with the gender label assigned to the AI agents that people interact with. In the Prisoner's Dilemma game, 402 participants interacted with partners labelled as either AI (bot) or human. The partners were also labelled male, female, non-binary, or gender-neutral. Results revealed that participants tended to exploit female-labelled AI agents and distrust male-labelled AI agents more than human partners carrying the same gender labels, reflecting gender biases similar to those in human-human interactions. These findings highlight the significance of gender biases in human-AI interactions, which must be considered in future policy, in the design of interactive AI systems, and in the regulation of their use.
