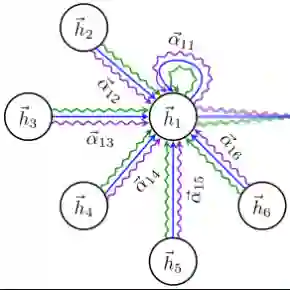

Large language models are applied across a wide range of tasks, such as customer support, content creation, educational tutoring, and financial guidance. However, a well-known drawback is their tendency to hallucinate. Hallucinations undermine the trustworthiness of the information these models provide, which affects decision-making and user confidence. We propose a method that detects hallucinations by examining the structure of the latent space and finding associations within hallucinated and non-hallucinated generations. We build a graph that connects generations lying close together in the embedding space. We then employ a Graph Attention Network, which uses message passing to aggregate information from neighboring nodes and weights each neighbor according to its relevance. Our findings show that 1) the latent space contains a structure that differentiates hallucinated from non-hallucinated generations, 2) Graph Attention Networks can learn this structure and generalize it to unseen generations, and 3) incorporating contrastive learning makes our method more robust. When evaluated against evidence-based benchmarks, our model achieves comparable performance without access to search-based methods.
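The two mechanisms named above, a graph over nearby embeddings and attention-weighted message passing, can be illustrated with a minimal sketch. This is not the authors' implementation. It assumes that closeness means k-nearest neighbors under Euclidean distance, which the abstract does not specify. The function names `knn_graph` and `gat_layer`, the toy embeddings, and the fixed values of `W` and `a` (learned parameters in a real Graph Attention Network) are all hypothetical. Contrastive learning is not covered.

```python
import math

def knn_graph(embeddings, k):
    """Connect each node to its k nearest other nodes (Euclidean distance).

    Assumption: the abstract only says nearby generations are connected;
    k-NN is one possible way to define "nearby".
    """
    neighbors = {}
    for i, e_i in enumerate(embeddings):
        dists = sorted(
            (math.dist(e_i, e_j), j)
            for j, e_j in enumerate(embeddings)
            if j != i
        )
        neighbors[i] = [j for _, j in dists[:k]]
    return neighbors

def leaky_relu(x, slope=0.2):
    return x if x > 0 else slope * x

def gat_layer(features, neighbors, W, a):
    """One single-head graph attention layer, without an output nonlinearity."""
    # Linear projection of every node feature: h_i -> W h_i
    proj = [[sum(w * x for w, x in zip(row, h)) for row in W] for h in features]
    out = []
    for i, h_i in enumerate(proj):
        nbrs = [i] + neighbors[i]  # self-loop plus graph neighbors
        # Unnormalized score: LeakyReLU(a . [W h_i || W h_j]);
        # `h_i + proj[j]` is list concatenation, not addition.
        scores = [
            leaky_relu(sum(c * v for c, v in zip(a, h_i + proj[j])))
            for j in nbrs
        ]
        # Softmax over the neighborhood gives the attention weights
        m = max(scores)
        exps = [math.exp(s - m) for s in scores]
        total = sum(exps)
        alphas = [e / total for e in exps]
        # Attention-weighted sum of the projected neighbor features
        out.append([
            sum(al * proj[j][d] for al, j in zip(alphas, nbrs))
            for d in range(len(h_i))
        ])
    return out

# Toy 2-D "embeddings" forming two well-separated clusters (made-up data)
embeddings = [[0.0, 0.0], [0.1, 0.0], [0.0, 0.1],
              [5.0, 5.0], [5.1, 5.0], [5.0, 5.1]]
graph = knn_graph(embeddings, k=2)

W = [[1.0, 0.0], [0.0, 1.0]]   # placeholder projection (learned in practice)
a = [0.1, -0.2, 0.3, 0.1]      # placeholder attention vector (learned in practice)
updated = gat_layer(embeddings, graph, W, a)
```

In this toy setup each node is linked only to members of its own cluster, so the aggregated features stay within the cluster. A classifier head on top of such features would be one way to label unseen generations, but that step is left out here.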
