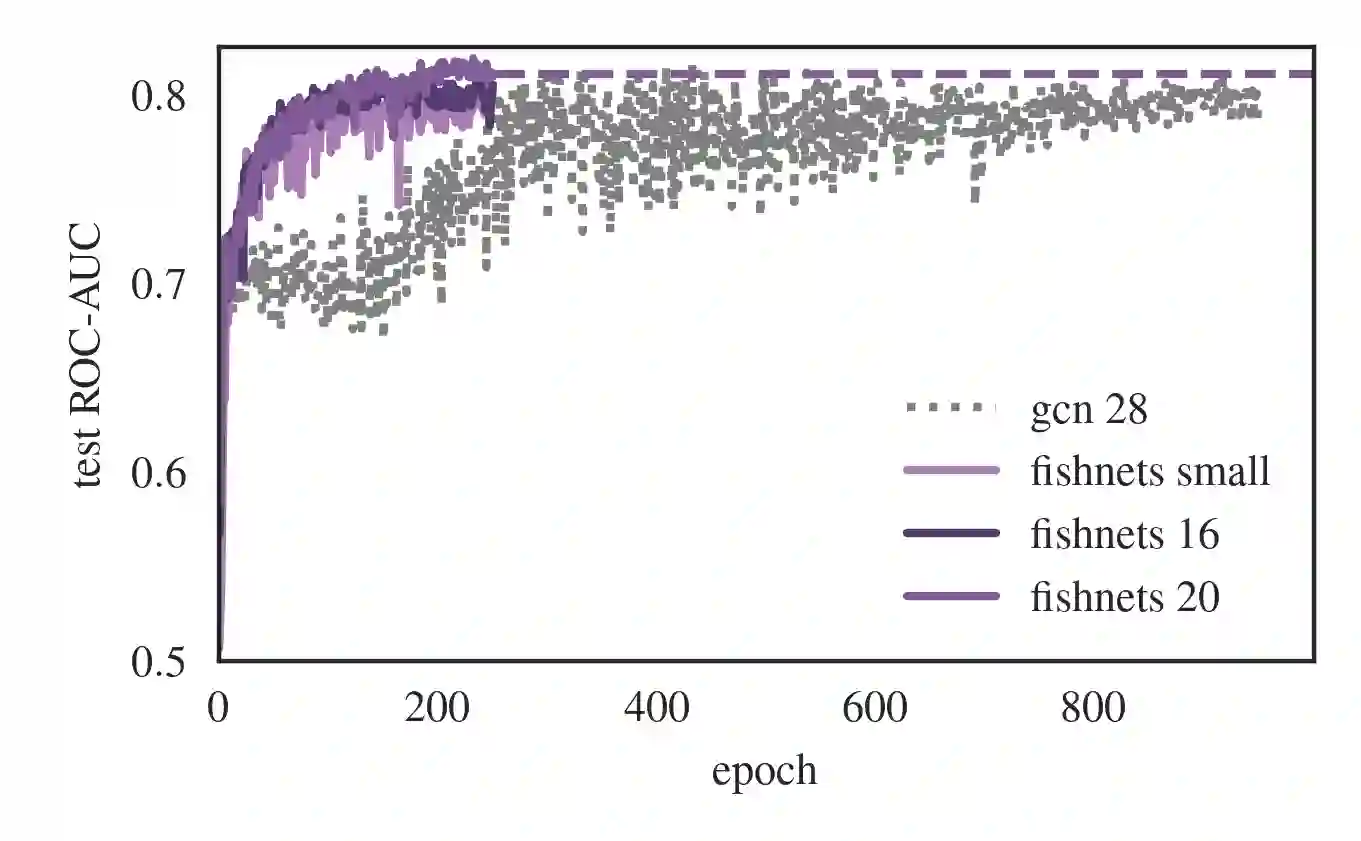

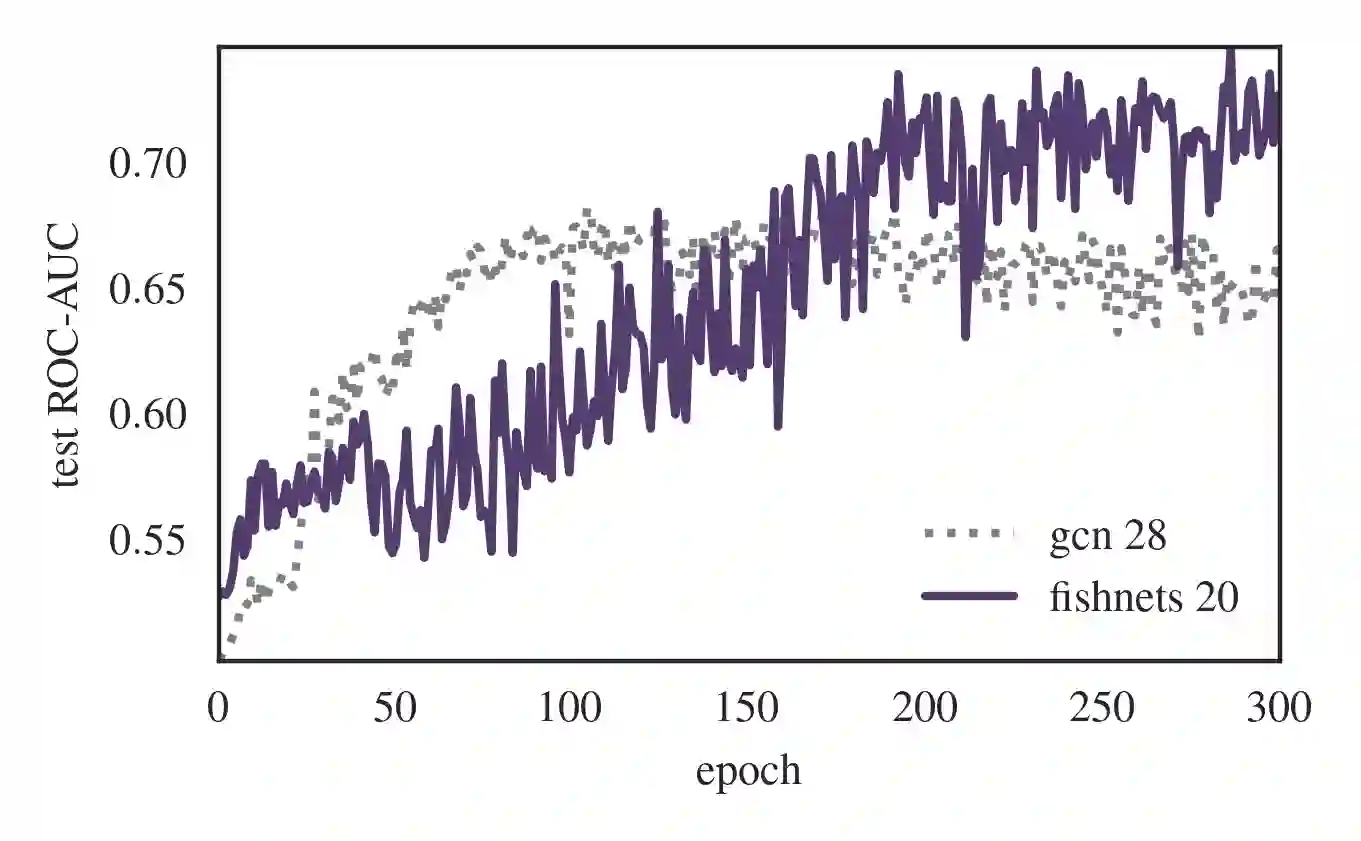

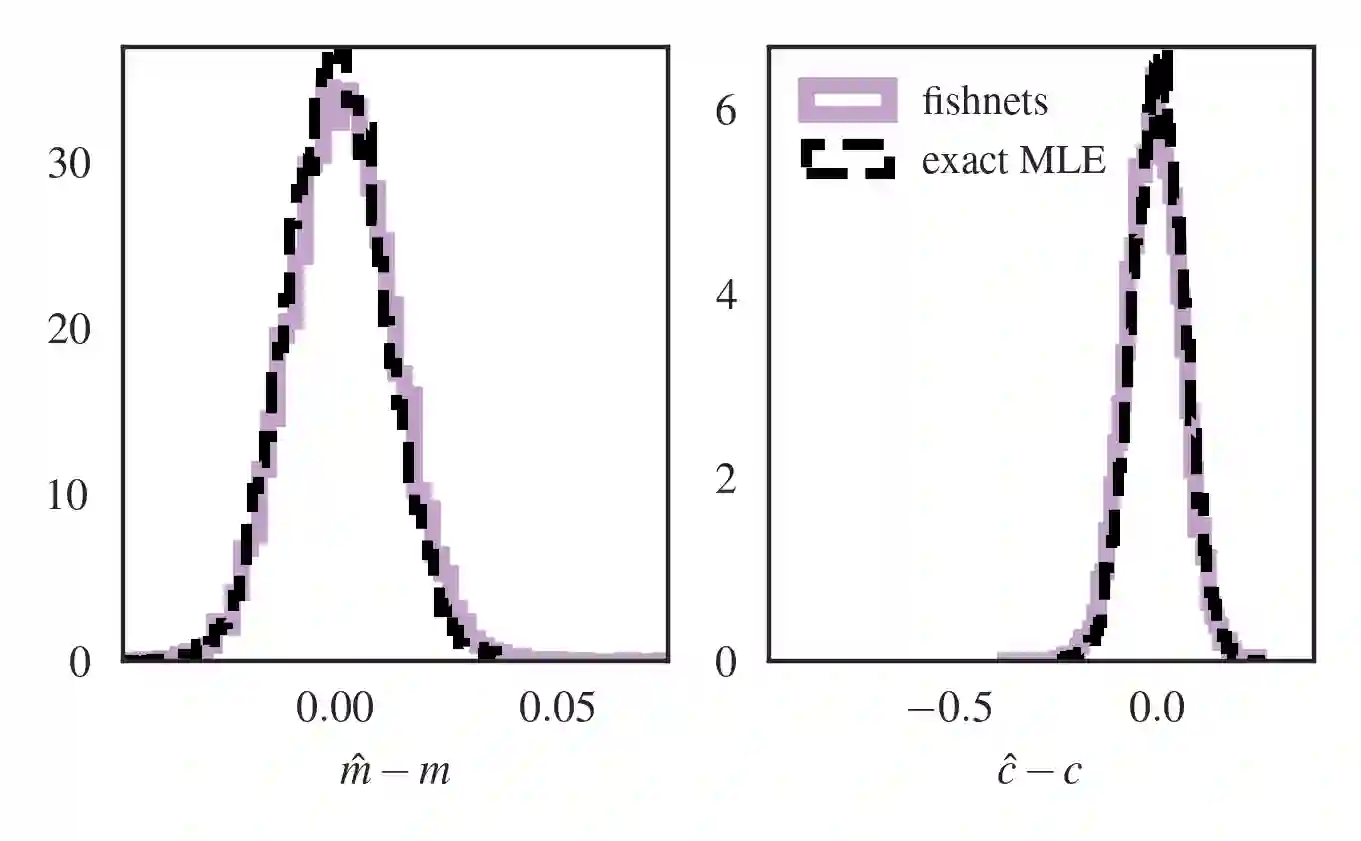

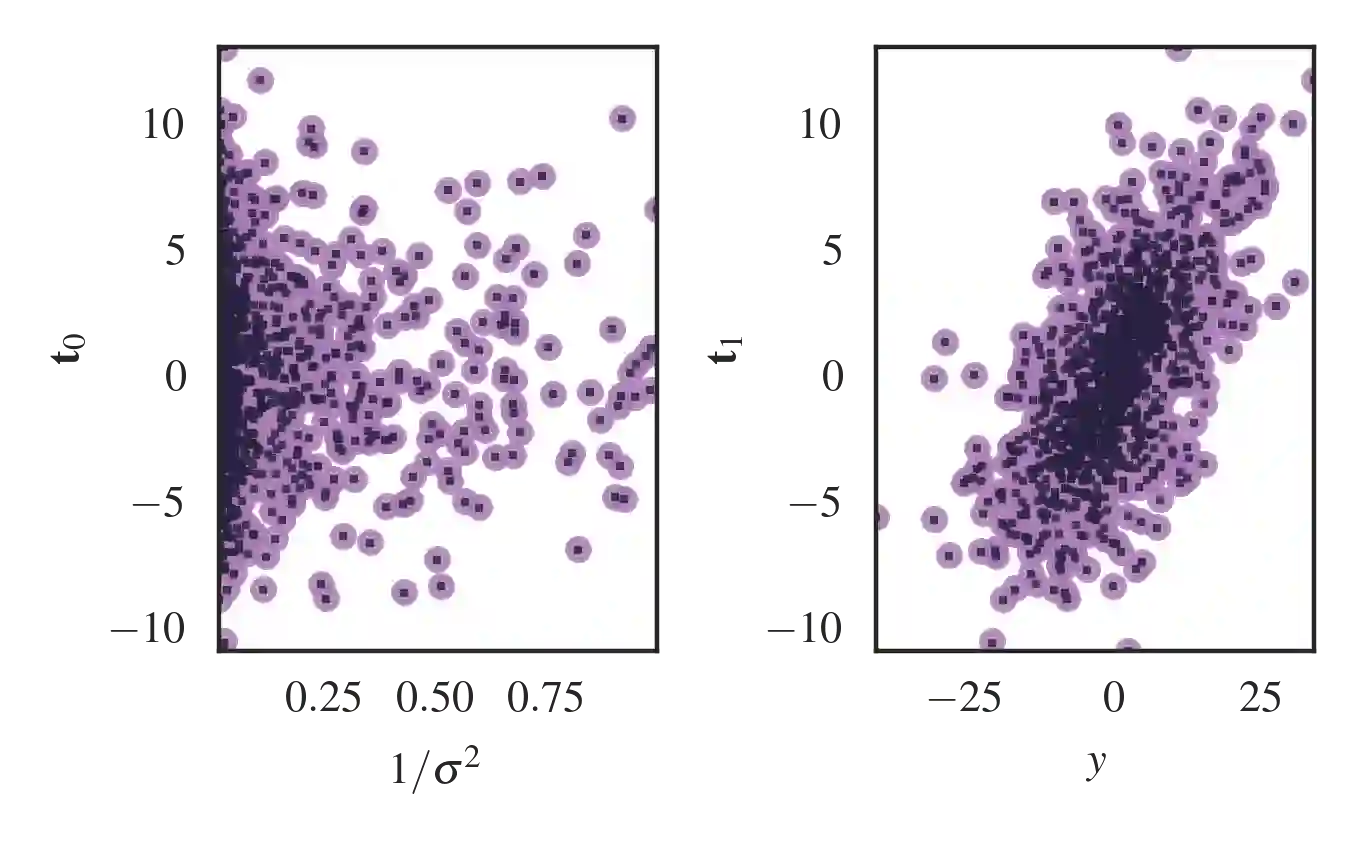

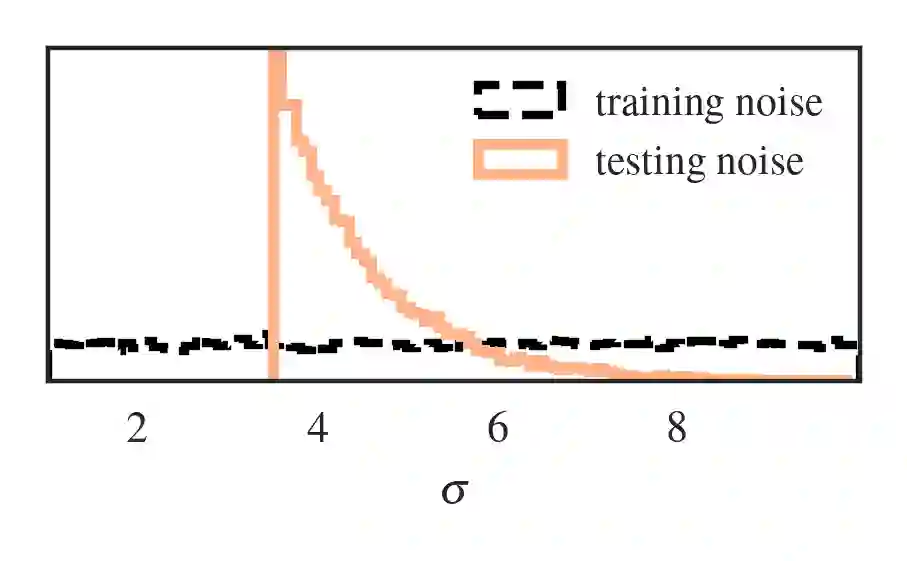

Set-based learning is an essential component of modern deep learning and network science. Graph Neural Networks (GNNs) and their edge-free counterparts, DeepSets, have proven remarkably useful on ragged and topologically challenging datasets. The key to learning informative embeddings for set members is the choice of aggregation function, usually a sum, max, or mean. We propose Fishnets, an aggregation strategy for learning information-optimal embeddings for sets of data, for both Bayesian inference and graph aggregation. We demonstrate that i) Fishnets neural summaries can be scaled optimally to an arbitrary number of data objects, ii) Fishnets aggregations are robust to changes in data distribution, unlike standard DeepSets, iii) Fishnets saturate the Bayesian information content and extend to regimes where MCMC techniques fail, and iv) Fishnets can be used as a drop-in aggregation scheme within GNNs. We show that by adopting a Fishnets aggregation scheme for message passing, GNNs can achieve state-of-the-art performance relative to architecture size on the ogbn-proteins dataset, compared with existing benchmarks, while using a fraction of the learnable parameters and training faster.
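The abstract contrasts fixed pooling operators (sum, max, mean) with an information-optimal aggregation. As an illustrative sketch only, assuming that such an aggregation sums per-member score vectors t_i and Fisher information matrices F_i and returns the Fisher-weighted estimate θ̂ = θ_fid + F⁻¹t, the NumPy code below puts the two side by side. The names `standard_aggregate`, `fisher_aggregate` and `gaussian_mean_terms` are hypothetical. In a learned model the per-member scores and Fishers would be predicted by a network; here they are replaced by the analytic values for estimating a Gaussian mean with known per-member noise.

```python
import numpy as np


def standard_aggregate(embeddings: np.ndarray, how: str = "sum") -> np.ndarray:
    """Fixed permutation-invariant pooling over the set axis (axis 0)."""
    if how == "sum":
        return embeddings.sum(axis=0)
    if how == "mean":
        return embeddings.mean(axis=0)
    if how == "max":
        return embeddings.max(axis=0)
    raise ValueError(f"unknown aggregation: {how}")


def fisher_aggregate(scores: np.ndarray, fishers: np.ndarray, theta_fid: np.ndarray):
    """Hypothetical Fisher-weighted aggregation (illustrative sketch).

    scores:    (n, p) per-member score vectors t_i
    fishers:   (n, p, p) per-member Fisher matrices F_i (positive definite)
    theta_fid: (p,) fiducial parameter point
    Returns (theta_fid + F^{-1} t, F), where t and F are sums over members.
    """
    t = scores.sum(axis=0)   # scores of independent members add
    F = fishers.sum(axis=0)  # Fisher information of independent members adds
    theta_hat = theta_fid + np.linalg.solve(F, t)
    return theta_hat, F


def gaussian_mean_terms(x: np.ndarray, sigma: np.ndarray, theta_fid: np.ndarray):
    """Analytic stand-ins for network outputs: score and Fisher of each
    datum x_i ~ N(theta, sigma_i^2), evaluated at the fiducial theta."""
    scores = ((x - theta_fid) / sigma**2)[:, None]  # shape (n, 1)
    fishers = (1.0 / sigma**2)[:, None, None]       # shape (n, 1, 1)
    return scores, fishers


if __name__ == "__main__":
    x = np.array([1.0, 2.0, 4.0])
    sigma = np.array([1.0, 1.0, 2.0])  # the third member is noisier
    theta_fid = np.array([0.0])

    s, f = gaussian_mean_terms(x, sigma, theta_fid)
    theta_hat, F = fisher_aggregate(s, f, theta_fid)
    print("fisher-weighted:", theta_hat, "F:", F)  # theta_hat ≈ 1.778, F = 2.25
    print("mean pooling:", standard_aggregate(x[:, None], "mean"))  # ≈ 2.333
```

Because both sums are permutation-invariant and accept any number of members, the same code handles sets of arbitrary size. For this linear-Gaussian toy model the result equals the inverse-variance weighted mean regardless of the fiducial point, whereas plain mean pooling ignores the differing noise levels of the members.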

翻译:集合学习是现代深度学习和网络科学的重要组成部分。图神经网络(GNNs)及其无边缘对应方法DeepSets已在非规则和拓扑结构复杂的数据集上展现出显著效用。学习集合成员信息化嵌入的关键在于特定的聚合函数,通常采用求和、最大值或均值运算。本文提出Fishnets——一种面向贝叶斯推断和图聚合任务的集合数据信息最优嵌入学习聚合策略。我们证明:i) Fishnets神经摘要可最优扩展至任意数量的数据对象;ii) 与标准DeepSets不同,Fishnets聚合对数据分布变化具有鲁棒性;iii) Fishnets能够饱和贝叶斯信息容量,并扩展至马尔可夫链蒙特卡罗方法失效的领域;iv) Fishnets可作为即插即用聚合方案集成于GNNs中。通过在消息传递中采用Fishnets聚合方案,我们展示了GNNs在ogbn-protein数据集上能以更少的可学习参数和更快的训练时间,在现有基准测试中实现相对于架构规模的最先进性能。