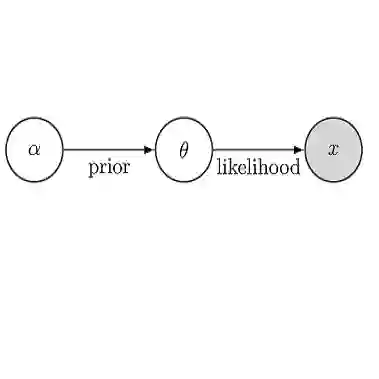

This paper introduces posterior mean matching (PMM), a new method for generative modeling grounded in Bayesian inference. PMM uses conjugate pairs of distributions to model complex data of various modalities, such as images and text, offering a flexible alternative to existing methods like diffusion models. PMM models iteratively refine noisy approximations of the target distribution using updates from online Bayesian inference. This flexibility comes from the fact that PMM's mechanics are based on general Bayesian models. We demonstrate it by developing three specialized instances: a generative PMM model of real-valued data based on the Normal-Normal model, one of count data based on the Gamma-Poisson model, and one of discrete data based on the Dirichlet-Categorical model. For the Normal-Normal PMM, we establish a direct connection to diffusion models by showing that its continuous-time formulation converges to a stochastic differential equation (SDE). For the Gamma-Poisson PMM, we derive a novel SDE driven by a Cox process, a significant departure from traditional generative models based on Brownian motion. PMMs achieve performance competitive with existing generative models on language modeling and image generation.
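The abstract describes PMM as refining noisy approximations through online Bayesian updates on conjugate pairs. As a rough illustration, the sketch below shows only the standard textbook conjugate posterior-mean updates for the three pairs named above. It is not the authors' implementation: it omits any learned model, noise schedule, or SDE limit, and the class and function names (`NormalNormal`, `GammaPoisson`, `DirichletCategorical`, `normal_posterior_mean_path`) are hypothetical.

```python
import random
from dataclasses import dataclass

# Hypothetical sketch: textbook conjugate updates only, not the PMM paper's code.


@dataclass
class NormalNormal:
    """Posterior over an unknown real value x, observed as y ~ N(x, 1/obs_precision)."""
    mean: float           # current posterior mean of x
    precision: float      # current posterior precision of x
    obs_precision: float  # known precision of each noisy observation

    def update(self, y: float) -> None:
        # Precision-weighted average of the old mean and the new observation.
        new_precision = self.precision + self.obs_precision
        self.mean = (self.precision * self.mean + self.obs_precision * y) / new_precision
        self.precision = new_precision


@dataclass
class GammaPoisson:
    """Gamma(shape, rate) posterior over a Poisson rate."""
    shape: float
    rate: float

    def update(self, count: int, exposure: float = 1.0) -> None:
        self.shape += count
        self.rate += exposure

    @property
    def posterior_mean(self) -> float:
        return self.shape / self.rate


@dataclass
class DirichletCategorical:
    """Dirichlet(alpha) posterior over category probabilities."""
    alpha: list[float]

    def __post_init__(self) -> None:
        self.alpha = list(self.alpha)  # copy so the caller's list is not mutated

    def update(self, k: int) -> None:
        self.alpha[k] += 1.0  # one observed draw of category k

    @property
    def posterior_mean(self) -> list[float]:
        total = sum(self.alpha)
        return [a / total for a in self.alpha]


def normal_posterior_mean_path(x: float, steps: int, obs_precision: float, seed: int = 0) -> list[float]:
    """Posterior-mean trajectory as noisy observations of a fixed x arrive (prior N(0, 1))."""
    rng = random.Random(seed)
    post = NormalNormal(mean=0.0, precision=1.0, obs_precision=obs_precision)
    path = [post.mean]
    for _ in range(steps):
        y = rng.gauss(x, (1.0 / obs_precision) ** 0.5)
        post.update(y)
        path.append(post.mean)
    return path
```

For example, `normal_posterior_mean_path(3.0, steps=2000, obs_precision=1.0)` starts at the prior mean `0.0` and drifts toward `3.0` as evidence accumulates. How PMM turns such trajectories into a trained generative model is described in the paper, not reproduced here.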
