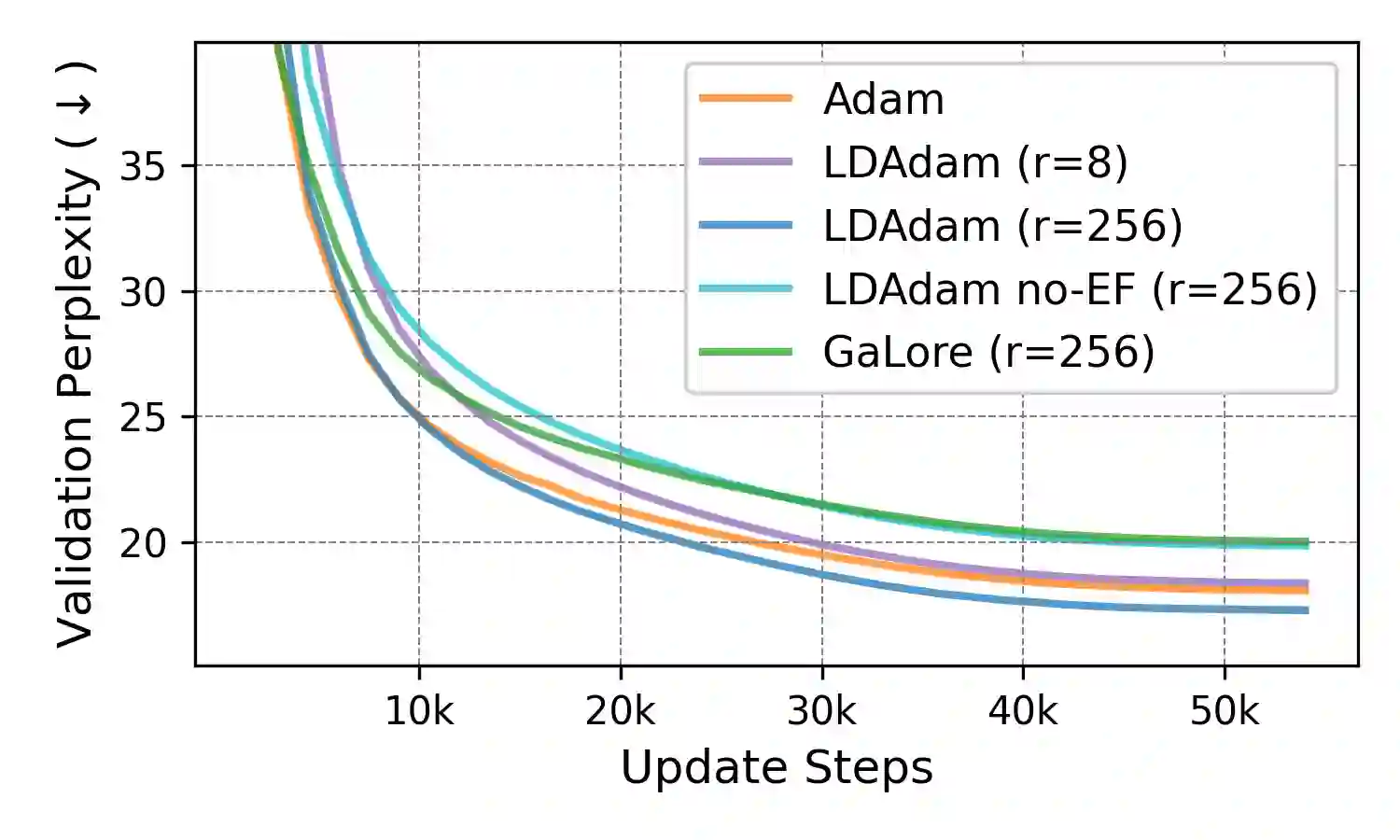

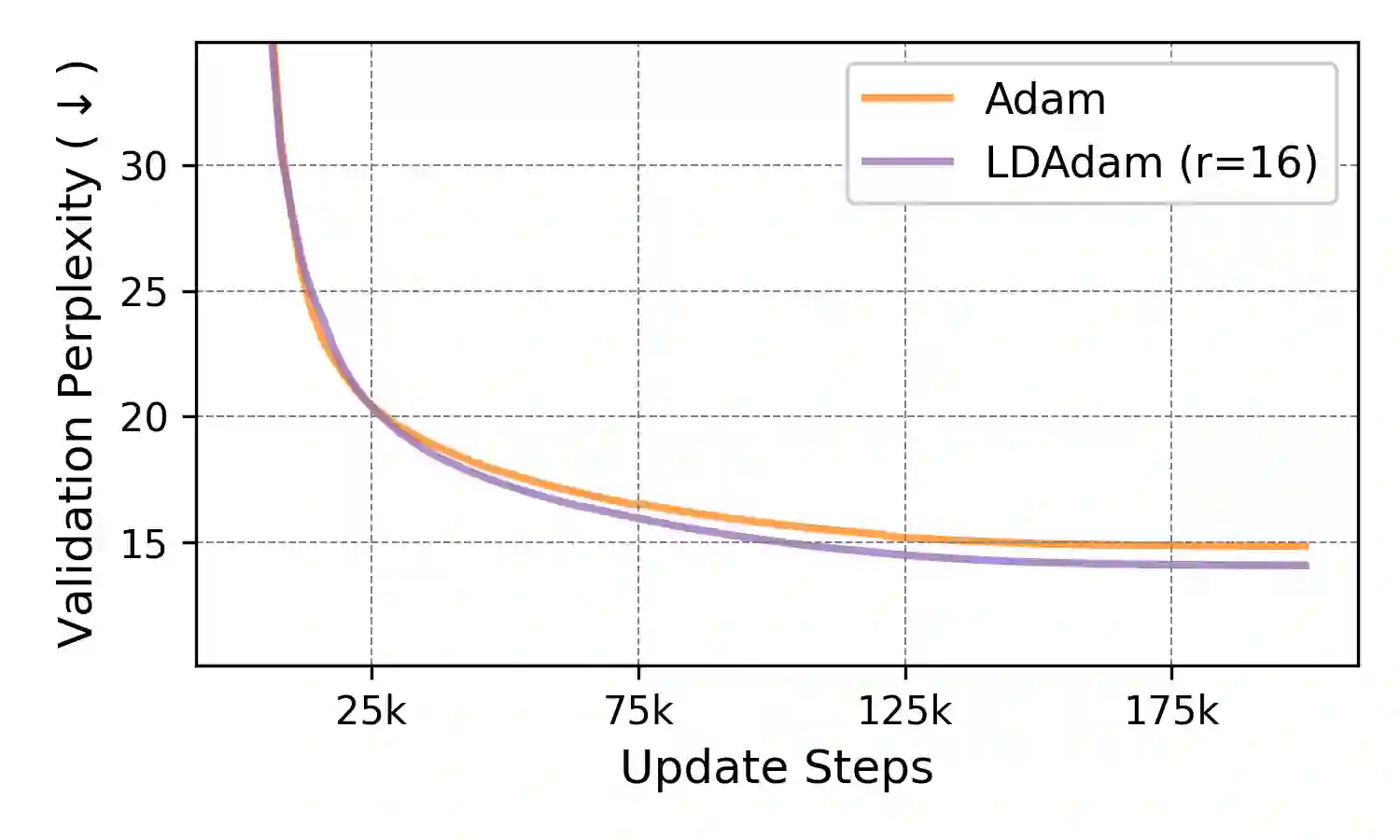

We introduce LDAdam, a memory-efficient optimizer for training large models that performs adaptive optimization steps within lower-dimensional subspaces while consistently exploring the full parameter space during training. This strategy keeps the optimizer's memory footprint to a fraction of the model size. LDAdam relies on a new projection-aware update rule for the optimizer states that allows for transitioning between subspaces, i.e., for estimating the statistics of the projected gradients. To mitigate the errors due to low-rank projection, LDAdam integrates a new generalized error feedback mechanism, which explicitly accounts for both gradient and optimizer state compression. We prove the convergence of LDAdam under standard assumptions and show that LDAdam allows for accurate and efficient fine-tuning and pre-training of language models.
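To make the ingredients named above concrete, the following is a minimal pure-Python sketch of an Adam-style step taken inside a rank-1 subspace with error feedback. It is an illustration under stated assumptions, not LDAdam's actual update rule. The helper names (`top_left_singular_vector`, `new_state`, `low_rank_adam_step`) are hypothetical. The rank is fixed to 1 and the subspace is found by power iteration. The rescaling of the old moments by the overlap between consecutive subspaces is a simplified stand-in for a projection-aware update. The error buffer covers only gradient compression, whereas the abstract describes a generalized mechanism that also accounts for optimizer state compression.

```python
import math


def top_left_singular_vector(G, iters=50):
    """Approximate the top left singular vector of G by power iteration on G G^T."""
    m, n = len(G), len(G[0])
    u = [1.0 / math.sqrt(m)] * m
    for _ in range(iters):
        w = [sum(G[i][j] * u[i] for i in range(m)) for j in range(n)]  # w = G^T u
        z = [sum(G[i][j] * w[j] for j in range(n)) for i in range(m)]  # z = G w
        norm = math.sqrt(sum(x * x for x in z))
        if norm == 0.0:  # zero matrix: keep the current direction
            break
        u = [x / norm for x in z]
    return u


def new_state(m, n):
    """Optimizer state for one m x n weight matrix (hypothetical layout)."""
    return {
        "u": [0.0] * m,                          # current rank-1 subspace direction
        "m": [0.0] * n,                          # first moment, stored in the subspace
        "v": [0.0] * n,                          # second moment, stored in the subspace
        "err": [[0.0] * n for _ in range(m)],    # error-feedback buffer (full size here)
        "t": 0,
    }


def low_rank_adam_step(W, G, state, lr=0.1, beta1=0.9, beta2=0.999, eps=1e-8):
    """One rank-1 Adam-style step on W (updated in place) given gradient G."""
    m, n = len(W), len(W[0])

    # Error feedback: add back what earlier projections discarded.
    A = [[G[i][j] + state["err"][i][j] for j in range(n)] for i in range(m)]

    # Choose the subspace from the accumulated gradient and project onto it.
    u = top_left_singular_vector(A)
    g_low = [sum(u[i] * A[i][j] for i in range(m)) for j in range(n)]  # u^T A

    # Store the part of A that the projection lost.
    state["err"] = [[A[i][j] - u[i] * g_low[j] for j in range(n)] for i in range(m)]

    # Assumption: carry the old moments into the new subspace by rescaling
    # with the overlap c = <u_new, u_old> (c = 0 on the very first step).
    c = sum(a * b for a, b in zip(u, state["u"]))
    state["m"] = [beta1 * c * mj + (1 - beta1) * gj
                  for mj, gj in zip(state["m"], g_low)]
    state["v"] = [beta2 * c * c * vj + (1 - beta2) * gj * gj
                  for vj, gj in zip(state["v"], g_low)]
    state["u"] = u
    state["t"] += 1
    t = state["t"]

    # Bias-corrected Adam step in the subspace, mapped back to full size.
    for j in range(n):
        m_hat = state["m"][j] / (1 - beta1 ** t)
        v_hat = state["v"][j] / (1 - beta2 ** t)
        step = lr * m_hat / (math.sqrt(v_hat) + eps)
        for i in range(m):
            W[i][j] -= u[i] * step
    return W
```

A caller would create one state per weight matrix with `new_state(m, n)` and call `low_rank_adam_step(W, G, state)` once per gradient. In this sketch the two moment vectors have length `n` rather than `m * n` entries each, which is where the memory saving of subspace optimization comes from. The error buffer is kept as a full matrix purely for simplicity and says nothing about how the paper stores it.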
