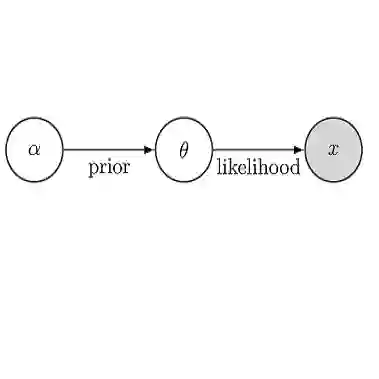

We theoretically justify the recent empirical finding of [Teh et al., 2025] that a transformer pretrained on synthetically generated data achieves strong performance on empirical Bayes (EB) problems. We take an indirect approach to this question: rather than analyzing the model architecture or training dynamics, we ask why a pretrained Bayes estimator, trained under a prespecified training distribution, can adapt to arbitrary test distributions. Focusing on Poisson EB problems, we identify the existence of universal priors such that training under these priors yields a near-optimal regret bound of $\widetilde{O}(\frac{1}{n})$ uniformly over all test distributions. Our analysis leverages the classical phenomenon of posterior contraction in Bayesian statistics, showing that the pretrained transformer adapts to unknown test distributions precisely through posterior contraction. This perspective also explains the phenomenon of length generalization, in which the test sequence length exceeds the training length, as the model performs Bayesian inference using a generalized posterior.
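
For readers unfamiliar with the Poisson EB setting, the following is a minimal sketch of the Bayes estimator that regret is measured against. It assumes the standard model $\theta \sim G$, $X \mid \theta \sim \mathrm{Poisson}(\theta)$ with squared-error loss, for which the posterior mean satisfies $\mathbb{E}[\theta \mid X = x] = (x+1) f_G(x+1) / f_G(x)$, where $f_G$ is the marginal pmf of $X$. The Gamma prior and all function names below are illustrative assumptions chosen because the answer has a closed form; they are not the universal priors or the pretrained estimator of the paper.

```python
import math


def nb_marginal_pmf(x, shape, rate):
    """Marginal pmf of X when theta ~ Gamma(shape, rate) and X | theta ~ Poisson(theta).

    This is a negative binomial pmf, computed in log space for stability.
    """
    log_p = (
        math.lgamma(x + shape) - math.lgamma(shape) - math.lgamma(x + 1)
        + shape * math.log(rate / (rate + 1.0))
        - x * math.log(rate + 1.0)
    )
    return math.exp(log_p)


def bayes_estimator_from_marginal(x, pmf):
    """Posterior mean E[theta | X = x] via the Poisson identity (x + 1) f(x + 1) / f(x)."""
    return (x + 1) * pmf(x + 1) / pmf(x)


def gamma_posterior_mean(x, shape, rate):
    """Closed-form posterior mean under a Gamma(shape, rate) prior."""
    return (x + shape) / (rate + 1.0)


if __name__ == "__main__":
    shape, rate = 2.0, 1.5  # hypothetical prior parameters, for illustration only
    for x in range(5):
        via_marginal = bayes_estimator_from_marginal(
            x, lambda k: nb_marginal_pmf(k, shape, rate)
        )
        print(x, via_marginal, gamma_posterior_mean(x, shape, rate))
```

The two columns printed by the script should agree up to floating-point error. In an EB problem the prior $G$ is unknown, so $f_G$ is not available, and the regret of an estimator is its excess mean squared error over this oracle Bayes rule.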
