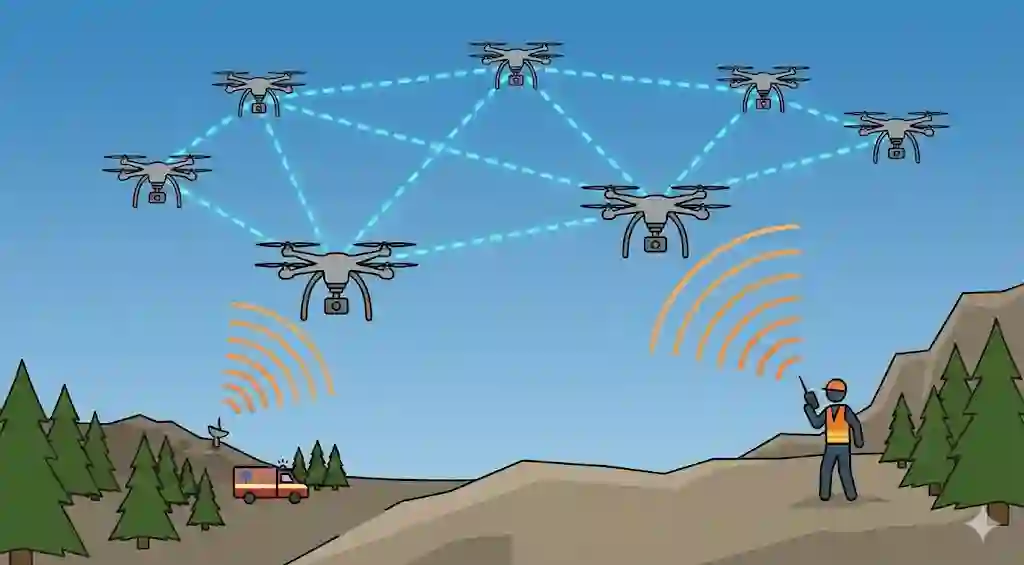

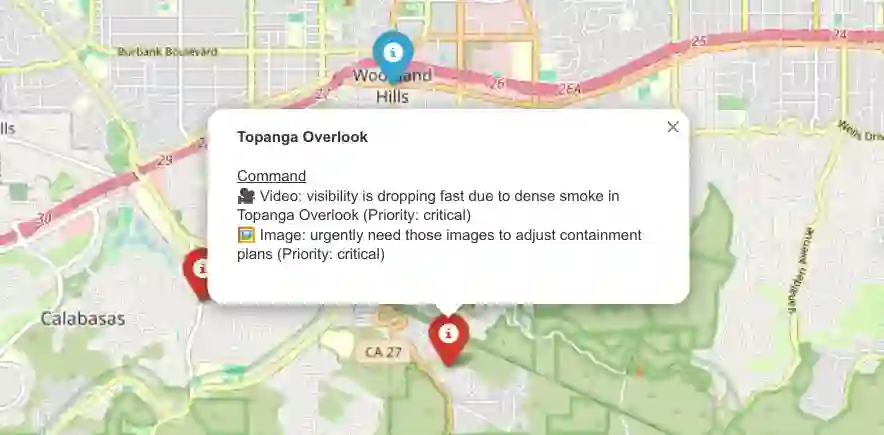

Unmanned Aerial Vehicle (UAV)-assisted networks are increasingly seen as a promising approach for emergency response, providing rapid, flexible, and resilient communications where terrestrial infrastructure is degraded or unavailable. In such scenarios, voice radio remains essential for first responders because of its robustness; however, its unstructured nature prevents direct integration with automated UAV-assisted network management. This paper proposes SIREN, an AI-driven framework that enables voice-driven perception for UAV-assisted networks. By integrating Automatic Speech Recognition (ASR) with Large Language Model (LLM)-based semantic extraction and Natural Language Processing (NLP) validation, SIREN converts emergency voice traffic into structured, machine-readable information, including responding units, location references, emergency severity, and Quality-of-Service (QoS) requirements. SIREN is evaluated on synthetic emergency scenarios with controlled variations in language, speaker count, background noise, and message complexity. The results demonstrate robust transcription and reliable semantic extraction across diverse operating conditions, and identify speaker diarization and geographic ambiguity as the main limiting factors. These findings establish the feasibility of voice-driven situational awareness for UAV-assisted networks and provide a practical foundation for human-in-the-loop decision support and adaptive network management in emergency response operations.
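
To make the "structured, machine-readable information" concrete, the following is a minimal sketch of what a validation stage after LLM extraction might look like. It is not SIREN's implementation: the `EmergencyReport` fields, the JSON key names, the severity scale, and the `validate_extraction` helper are all assumptions introduced for illustration, and the transcript-grounding check is just one simple way such a validation could be approached.

```python
import json
from dataclasses import dataclass, field
from typing import Dict, List, Optional, Tuple

# Assumed severity scale; the abstract does not specify one.
ALLOWED_SEVERITY = {"low", "medium", "high", "critical"}


@dataclass
class EmergencyReport:
    """Hypothetical structured record for one voice message."""
    units: List[str]
    locations: List[str]
    severity: str
    qos: Dict[str, float] = field(default_factory=dict)


def _as_str_list(value: object) -> List[str]:
    """Return a list of strings, or an empty list if the value is not a list."""
    return [str(v) for v in value] if isinstance(value, list) else []


def validate_extraction(
    raw_json: str, transcript: str
) -> Tuple[Optional[EmergencyReport], List[str]]:
    """Parse LLM output; return (report, issues). Report is None if unusable."""
    try:
        data = json.loads(raw_json)
    except json.JSONDecodeError as exc:
        return None, [f"invalid JSON: {exc.msg}"]
    if not isinstance(data, dict):
        return None, ["top-level value is not an object"]

    issues: List[str] = []
    units = _as_str_list(data.get("units"))
    locations = _as_str_list(data.get("locations"))
    severity = str(data.get("severity", "")).lower()

    # Keep only numeric QoS values (hypothetical keys such as max_latency_ms).
    raw_qos = data.get("qos")
    qos = {
        str(k): float(v)
        for k, v in (raw_qos.items() if isinstance(raw_qos, dict) else [])
        if isinstance(v, (int, float)) and not isinstance(v, bool)
    }

    if severity not in ALLOWED_SEVERITY:
        issues.append(f"unknown severity: {severity!r}")

    # Grounding check: flag extracted entities that never occur in the
    # transcript, since they may have been invented by the model.
    lowered = transcript.lower()
    for label, values in (("unit", units), ("location", locations)):
        for value in values:
            if value.lower() not in lowered:
                issues.append(f"{label} not found in transcript: {value!r}")

    return EmergencyReport(units, locations, severity, qos), issues
```

Under these assumptions, a caller would pass the raw LLM response together with the ASR transcript and route any non-empty `issues` list to a human operator, in keeping with the human-in-the-loop use described in the abstract.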
