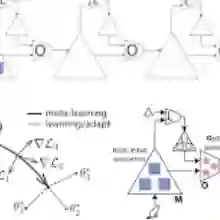

Minimum attention, first proposed by Brockett, applies the least-action principle to the changes of control with respect to state and time. The resulting regularization is highly relevant to emulating biological control, such as motor learning. We apply minimum attention in reinforcement learning (RL) as part of the reward and investigate its connection to meta-learning and stabilization. Specifically, we explore model-based meta-learning with minimum attention in high-dimensional nonlinear dynamics, alternating between ensemble-based model learning and gradient-based meta-policy learning. Empirically, minimum attention outperforms state-of-the-art model-free and model-based RL algorithms, showing fast adaptation in few shots and reduced variance under perturbations of the model and environment. Furthermore, minimum attention improves energy efficiency.
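
To make the idea of using minimum attention "as part of the reward" concrete, the following is a minimal sketch, not the authors' implementation. It assumes the attention cost is the sum of squared sensitivities of the control to state and to time, estimated here by forward finite differences. The names `attention_penalty`, `shaped_reward`, `weight`, and `eps` are hypothetical and chosen for illustration only; the exact form and weighting used in the paper are not given in the abstract.

```python
import numpy as np

def attention_penalty(policy, x, t, eps=1e-4):
    """Estimate ||du/dx||_F^2 + ||du/dt||^2 at (x, t) by forward differences.

    `policy(x, t)` is assumed to return a 1-D control vector (hypothetical interface).
    """
    x = np.asarray(x, dtype=float)
    u0 = np.asarray(policy(x, t), dtype=float)
    # Column j holds the sensitivity of the control to state coordinate j.
    du_dx = np.stack(
        [(np.asarray(policy(x + eps * e, t), dtype=float) - u0) / eps
         for e in np.eye(x.size)],
        axis=1,
    )
    # Sensitivity of the control to time at a fixed state.
    du_dt = (np.asarray(policy(x, t + eps), dtype=float) - u0) / eps
    return float(np.sum(du_dx ** 2) + np.sum(du_dt ** 2))

def shaped_reward(task_reward, policy, x, t, weight=0.01):
    """Task reward minus a weighted attention penalty (assumed additive form)."""
    return task_reward - weight * attention_penalty(policy, x, t)
```

Under this sketch, a policy whose output barely changes with state or time incurs almost no penalty, while a policy that reacts sharply to small changes is penalized. In practice one would likely compute the derivatives with automatic differentiation rather than finite differences.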
