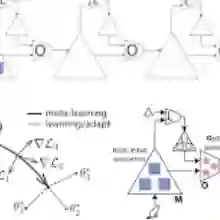

One of the core facets of Bayesianism is the updating of prior beliefs in light of new evidence. How, then, can we maintain a Bayesian approach if we have no prior beliefs in the first place? This is one of the central challenges in Bayesian deep learning, where it is not clear how to represent beliefs about a prediction task as prior distributions over model parameters. Bridging Bayesian deep learning and probabilistic meta-learning, we introduce a way to *learn* a weights prior from a collection of datasets by performing per-dataset amortised variational inference. The resulting model can be viewed as a neural process whose latent variable is the set of weights of a Bayesian neural network (BNN) and whose decoder is the neural network parameterised by a sample of that latent variable. This model allows us to study the behaviour of BNNs under well-specified priors, to use BNNs as flexible generative models, and to achieve desirable but previously elusive capabilities in neural processes, such as within-task minibatching and meta-learning under extreme data starvation.
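
To make the structure concrete, here is a minimal forward-pass sketch of a neural process whose latent variable is a weight vector and whose decoder is the network those weights parameterise. It rests on several assumptions that are not taken from the abstract. The per-datapoint encoder `encode_point` is a hypothetical fixed map standing in for a trained inference network, and it returns diagonal Gaussian natural parameters over the weights. These factors are combined with a diagonal Gaussian prior by summing precisions. Because the per-point contributions simply add, one plausible route to within-task minibatching is to accumulate them batch by batch. Training the prior and encoder across datasets (for example via an ELBO) is omitted.

```python
import math
import random

H = 4          # hidden units (hypothetical size)
D = 3 * H      # latent dimension: input weights a, biases b, output weights v


def decode(x, w):
    """Decoder: the one-hidden-layer network parameterised by weight sample w."""
    a, b, v = w[:H], w[H:2 * H], w[2 * H:]
    return sum(v[j] * math.tanh(a[j] * x + b[j]) for j in range(H))


def encode_point(x, y):
    """Hypothetical per-datapoint encoder (a stand-in for a trained network).

    Returns diagonal Gaussian natural parameters over w:
    eta_d (precision-weighted mean) and lam_d (precision, kept positive).
    """
    eta = [math.sin((d + 1) * x) * y for d in range(D)]
    lam = [0.1 + 0.05 * abs(math.cos((d + 1) * x)) for d in range(D)]
    return eta, lam


def aggregate(points):
    """Sum natural parameters over a (mini)batch of (x, y) pairs."""
    eta, lam = [0.0] * D, [0.0] * D
    for x, y in points:
        e, l = encode_point(x, y)
        for d in range(D):
            eta[d] += e[d]
            lam[d] += l[d]
    return eta, lam


def add_stats(s1, s2):
    """Combine summed statistics from two minibatches of the same task."""
    return ([a + b for a, b in zip(s1[0], s2[0])],
            [a + b for a, b in zip(s1[1], s2[1])])


def posterior(prior_mu, prior_var, stats):
    """Gaussian prior times Gaussian factors: precisions add elementwise."""
    eta, lam = stats
    post_var = [1.0 / (1.0 / pv + l) for pv, l in zip(prior_var, lam)]
    post_mu = [v * (m / pv + e)
               for v, m, pv, e in zip(post_var, prior_mu, prior_var, eta)]
    return post_mu, post_var


def sample_weights(mu, var, rng):
    """Reparameterised sample w = mu + sigma * eps."""
    return [m + math.sqrt(v) * rng.gauss(0.0, 1.0) for m, v in zip(mu, var)]


rng = random.Random(0)
prior_mu, prior_var = [0.0] * D, [1.0] * D   # these would be learned across datasets
context = [(x / 10.0, math.sin(x / 10.0)) for x in range(-20, 21)]

full = aggregate(context)
batched = add_stats(aggregate(context[:20]), aggregate(context[20:]))
mu, var = posterior(prior_mu, prior_var, full)
w = sample_weights(mu, var, rng)
preds = [decode(x, w) for x in (-1.0, 0.0, 1.0)]
```

Since the statistics are plain sums, the two-minibatch result matches the full-batch result up to floating-point rounding. Each additional datapoint can only increase the posterior precision, so the posterior variance shrinks relative to the prior.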

翻译:贝叶斯主义的核心特征之一在于根据新证据更新先验信念——那么如果我们最初就缺乏先验信念,该如何保持贝叶斯方法呢?这是贝叶斯深度学习领域的核心挑战之一,因为如何通过模型参数的先验分布来表达对预测任务的信念尚不明确。通过桥接贝叶斯深度学习与概率元学习领域,我们提出一种从数据集集合中学习权重先验的方法,该方法通过实现基于数据集的摊销变分推断来实现。我们开发的模型可视为一种神经过程,其潜在变量是贝叶斯神经网络的权重集合,其解码器则由潜在变量样本本身参数化的神经网络构成。这一独特模型使我们能够研究具有良好设定先验的贝叶斯神经网络的行为,将贝叶斯神经网络用作灵活的生成模型,并实现神经过程中理想但先前难以达成的特性,例如任务内小批量处理或在极端数据稀缺条件下的元学习。