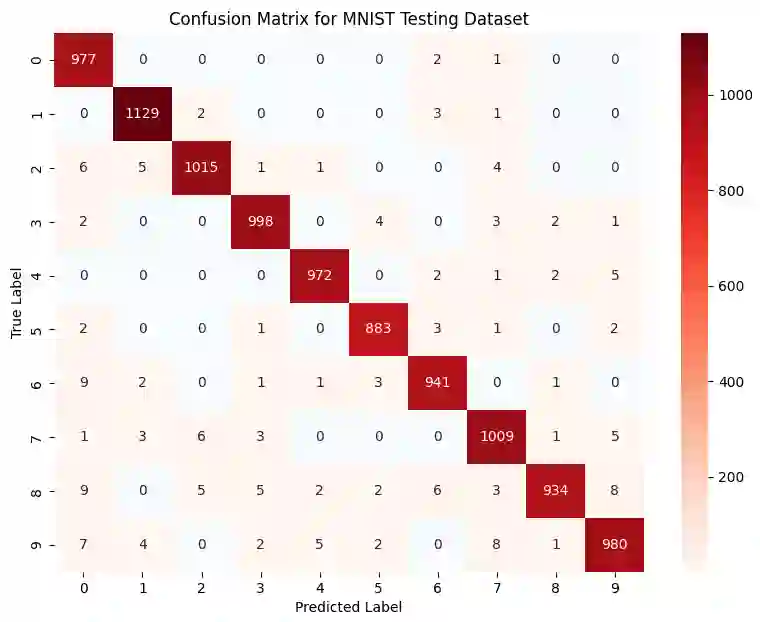

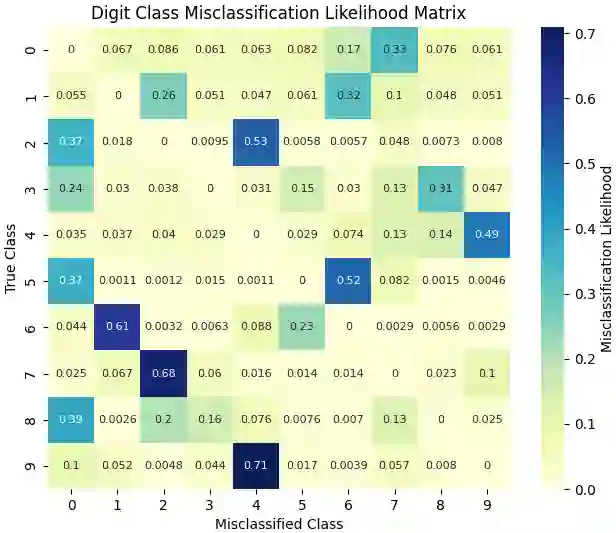

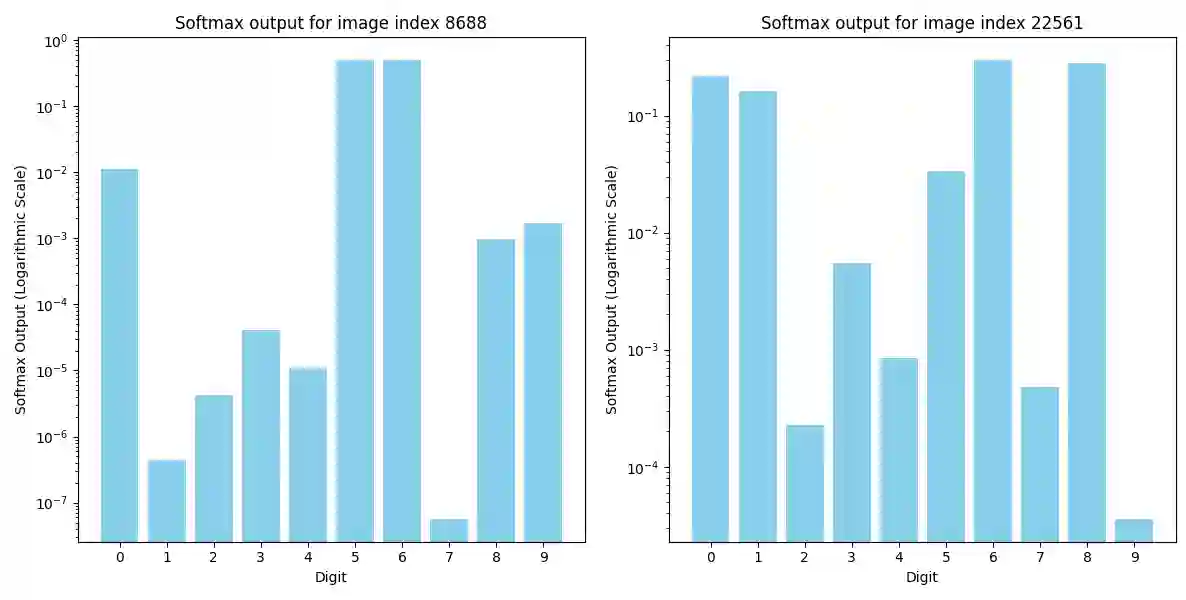

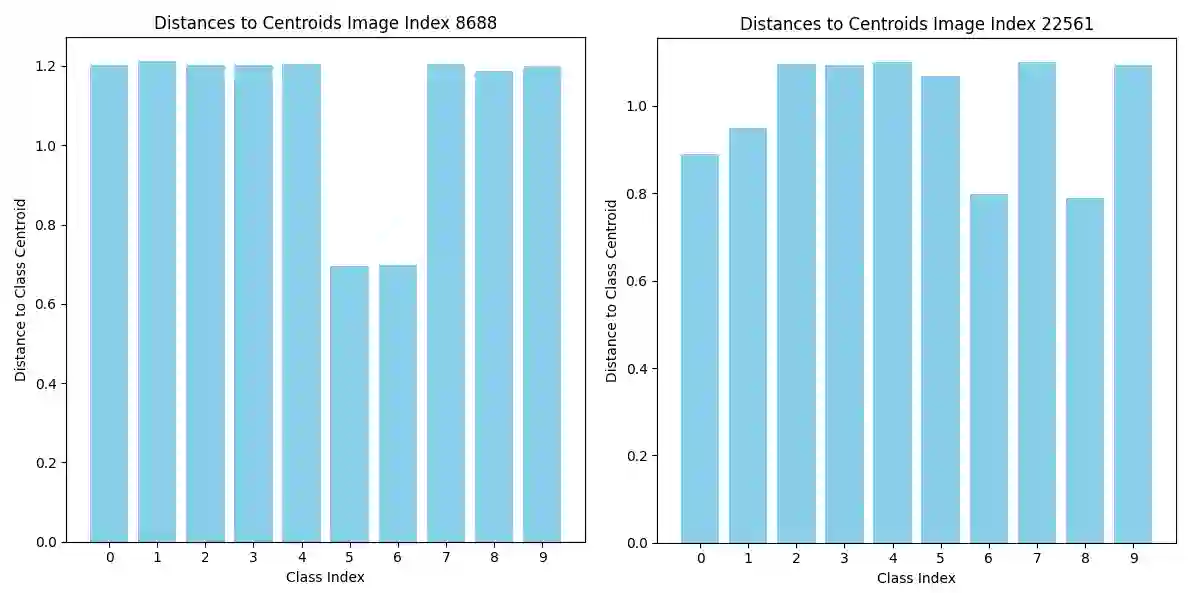

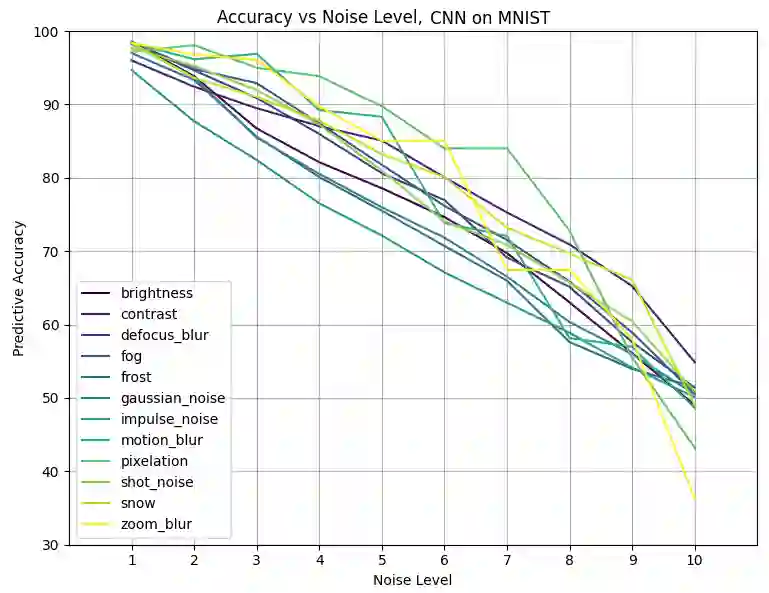

This study introduces the Misclassification Likelihood Matrix (MLM), a novel tool for quantifying the reliability of neural network predictions under distribution shift. The MLM is built from the softmax outputs of a trained neural network: clustering techniques are used to obtain class centroids, and the distances between the network's predictions and these centroids are then measured. By analyzing these distances, the MLM summarizes the model's misclassification tendencies, enabling decision-makers to identify the most common and most critical sources of error. The MLM also supports prioritizing model improvements and setting decision thresholds according to acceptable risk levels. The approach is evaluated with a Convolutional Neural Network (CNN) on the MNIST dataset and on a perturbed version of MNIST that simulates distribution shift. The results demonstrate the effectiveness of the MLM in assessing the reliability of predictions and highlight its potential to improve the interpretability and risk-mitigation capabilities of neural networks. The implications of this work extend beyond image classification; ongoing work applies it to autonomous systems such as self-driving cars, to improve the safety and reliability of decision-making in complex, real-world environments.
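The MLM construction described above can be illustrated with a short NumPy sketch. It is a minimal sketch under stated assumptions, not the authors' implementation. Class centroids are taken as the per-class mean of training softmax vectors (a simple stand-in for the clustering step), distances are Euclidean, and distances are converted to likelihoods with a softmax over negative distances scaled by a hypothetical `temperature` parameter. Row `i` of the resulting matrix is the average likelihood that a sample of true class `i` is attributed to each class centroid, so off-diagonal entries act as misclassification likelihoods. The function names (`class_centroids`, `misclassification_likelihood_matrix`, `risky_pairs`) and the synthetic logits used to mimic a distribution shift are all hypothetical.

```python
import numpy as np


def softmax(logits):
    # Numerically stable softmax over the last axis.
    z = logits - logits.max(axis=-1, keepdims=True)
    e = np.exp(z)
    return e / e.sum(axis=-1, keepdims=True)


def class_centroids(probs, labels, num_classes):
    # Assumption: each class centroid is the mean softmax vector of that
    # class's samples (stand-in for the clustering step).
    # Requires at least one sample per class.
    return np.stack([probs[labels == c].mean(axis=0) for c in range(num_classes)])


def misclassification_likelihood_matrix(probs, labels, centroids, temperature=0.1):
    # Euclidean distance from every prediction to every centroid: shape (N, K).
    d = np.linalg.norm(probs[:, None, :] - centroids[None, :, :], axis=-1)
    # Hypothetical mapping: closer centroids receive higher likelihood.
    lik = softmax(-d / temperature)
    k = centroids.shape[0]
    mlm = np.zeros((k, k))
    for c in range(k):
        mask = labels == c
        if mask.any():
            # Row c: average likelihood that true-class-c samples map to each class.
            mlm[c] = lik[mask].mean(axis=0)
    return mlm


def risky_pairs(mlm, threshold):
    # Off-diagonal (true, predicted, likelihood) triples above an acceptable
    # risk level, sorted so the most likely confusions come first.
    k = mlm.shape[0]
    pairs = [(i, j, float(mlm[i, j])) for i in range(k) for j in range(k)
             if i != j and mlm[i, j] > threshold]
    return sorted(pairs, key=lambda t: -t[2])


# Usage on synthetic data: strong class signal in training logits,
# weaker signal at test time to mimic a distribution shift.
rng = np.random.default_rng(0)
K = 3
train_labels = rng.integers(0, K, size=300)
train_logits = rng.normal(size=(300, K)) + 3.0 * np.eye(K)[train_labels]
centroids = class_centroids(softmax(train_logits), train_labels, K)

test_labels = rng.integers(0, K, size=300)
test_logits = rng.normal(size=(300, K)) + 1.0 * np.eye(K)[test_labels]
mlm = misclassification_likelihood_matrix(softmax(test_logits), test_labels, centroids)
print(np.round(mlm, 3))
print(risky_pairs(mlm, threshold=0.2))
```

Sorting the flagged pairs by likelihood is one way to prioritize model improvements, with `threshold` playing the role of the acceptable risk level. Averaging soft likelihoods, rather than counting hard nearest-centroid assignments, keeps borderline predictions visible in the matrix.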
