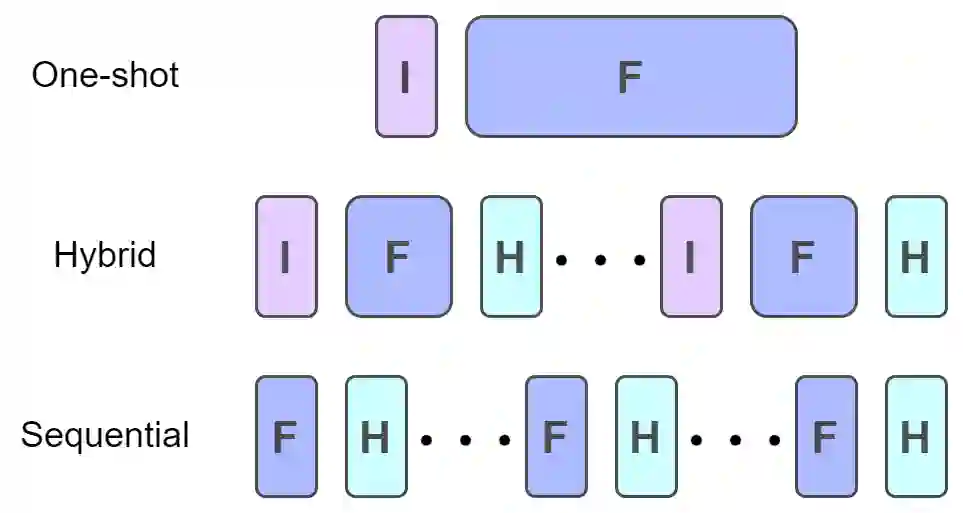

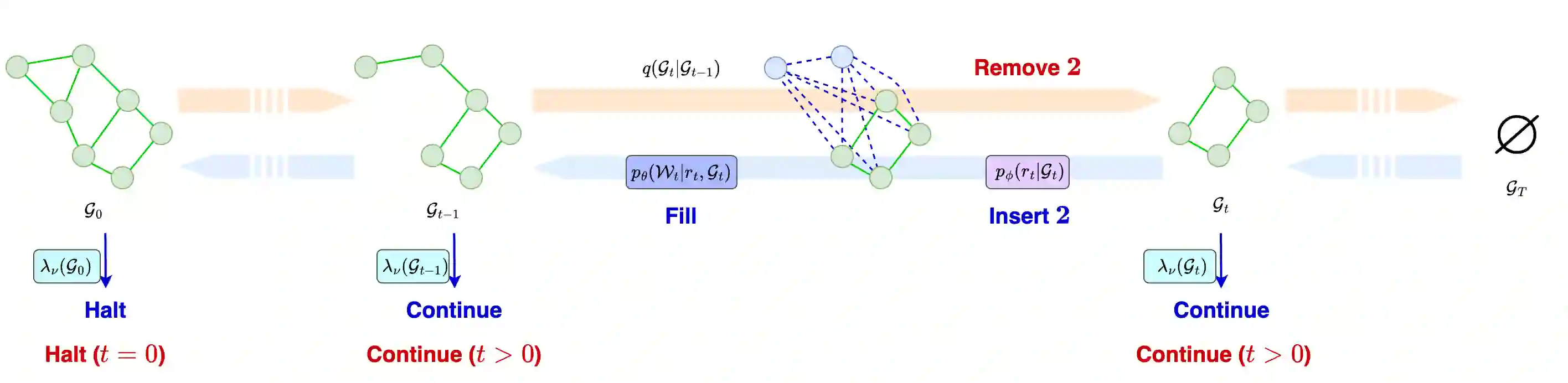

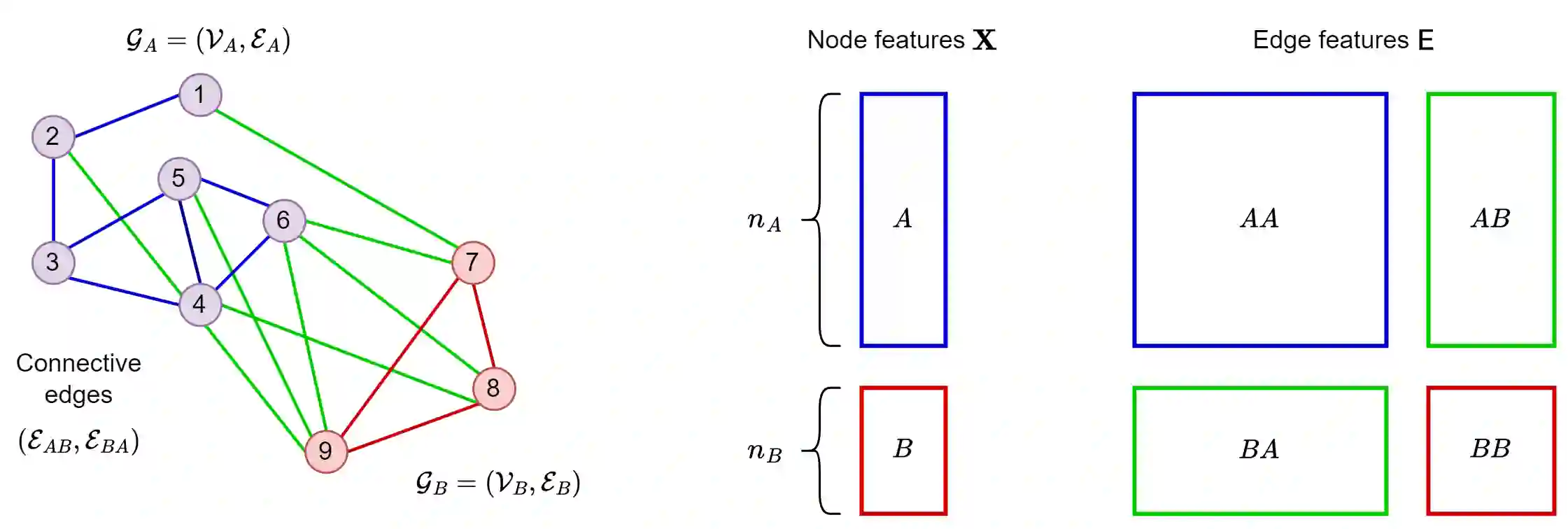

Graph generative models fall into two prominent families: one-shot models, which generate a graph in a single step, and sequential models, which generate a graph through successive additions of nodes and edges. Ideally, between these two extremes lies a continuous range of models with different levels of sequentiality. This paper proposes a graph generative model, called Insert-Fill-Halt (IFH), that supports specifying a sequentiality degree. IFH builds on the theory of Denoising Diffusion Probabilistic Models (DDPM) and designs a node removal process that gradually destroys a graph. An insertion process learns to reverse this removal process by inserting edges and nodes according to the specified sequentiality degree. We evaluate IFH in terms of generation quality, run time, and memory consumption across different sequentiality degrees. We also show that using DiGress, a diffusion-based one-shot model, as the generative step in IFH improves on DiGress itself and is competitive with the current state of the art.
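The abstract does not specify how nodes are chosen for removal or how the sequentiality degree is parameterized. The sketch below is a minimal illustration under two assumptions: nodes are removed uniformly at random, and the sequentiality degree is modeled as a hypothetical block size `nodes_per_step` (how many nodes disappear per removal step). It shows only the forward destruction process and the (smaller graph, larger graph) pairs an insertion model would learn to reverse; it is not IFH's actual implementation, and all function names are illustrative.

```python
import random
from typing import List, Set, Tuple

# Hypothetical representation: (node set, undirected edges stored as sorted pairs)
Graph = Tuple[Set[int], Set[Tuple[int, int]]]


def remove_nodes(graph: Graph, victims: Set[int]) -> Graph:
    """Drop the given nodes together with every edge incident to them."""
    nodes, edges = graph
    kept = nodes - victims
    kept_edges = {(u, v) for (u, v) in edges if u in kept and v in kept}
    return kept, kept_edges


def removal_trajectory(graph: Graph, nodes_per_step: int, seed: int = 0) -> List[Graph]:
    """Forward process (assumed): remove `nodes_per_step` random nodes per step until empty.

    nodes_per_step = 1 gives a fully sequential, node-by-node trajectory;
    nodes_per_step >= number of nodes destroys the graph in one step (one-shot).
    """
    if nodes_per_step < 1:
        raise ValueError("nodes_per_step must be >= 1")
    rng = random.Random(seed)
    trajectory = [graph]
    current = graph
    while current[0]:
        k = min(nodes_per_step, len(current[0]))
        # Sort before sampling so results are reproducible for a fixed seed
        victims = set(rng.sample(sorted(current[0]), k))
        current = remove_nodes(current, victims)
        trajectory.append(current)
    return trajectory


def insertion_pairs(trajectory: List[Graph]) -> List[Tuple[Graph, Graph]]:
    """Reverse-process targets: (smaller graph, graph it should grow into), from empty upward."""
    return [(trajectory[t + 1], trajectory[t]) for t in reversed(range(len(trajectory) - 1))]


if __name__ == "__main__":
    n = 6
    # A 6-node cycle as a toy input graph
    cycle: Graph = (set(range(n)), {tuple(sorted((i, (i + 1) % n))) for i in range(n)})
    for k in (1, 2, 6):
        traj = removal_trajectory(cycle, k)
        # Prints: block size, number of removal steps, node count at each step
        print(k, len(traj) - 1, [len(g[0]) for g in traj])
```

Under this assumed block-size reading, the number of removal steps is `ceil(n / nodes_per_step)`, so the 6-cycle takes 6, 3, and 1 steps for block sizes 1, 2, and 6, spanning the range from a sequential to a one-shot regime.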
