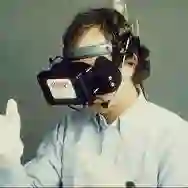

Static information presentation in VR cultural heritage often causes cognitive overload or under-stimulation. We introduce a closed-loop adaptive interface that tailors content depth to real-time visitor behavior through implicit multimodal sensing. Our approach continuously monitors gaze dwell, head kinematics, and locomotion to infer engagement with a transparent rule-based classifier, whose output drives a Large Language Model to modulate explanation complexity dynamically without interrupting exploration. We implemented a proof-of-concept in the Berat Ethnographic Museum and conducted a preliminary evaluation (N = 16) comparing adaptive and static content. Participants in the adaptive condition showed two- to threefold increases in reading engagement and exploration time while usability remained high (SUS = 84.3). Technical validation confirmed sub-millisecond engagement inference latency on consumer VR hardware. These preliminary findings warrant larger-scale investigation and raise questions about engagement validation, AI transparency, and the use of generative models in heritage contexts. We present this work-in-progress to spark discussion about implicit AI-driven adaptation in immersive cultural experiences.
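The sensing-to-prompt loop described above can be illustrated with a minimal sketch. It is not the system's actual classifier: the thresholds, the three engagement levels, the window structure, and the names `SensorWindow`, `classify_engagement`, and `build_prompt` are all assumptions chosen for illustration, and the call to the language model itself is left out.

```python
from dataclasses import dataclass
from enum import Enum


class Engagement(Enum):
    LOW = "low"
    MEDIUM = "medium"
    HIGH = "high"


@dataclass
class SensorWindow:
    """Features aggregated over one short time window (hypothetical units)."""
    gaze_dwell_s: float       # seconds the gaze rested on the exhibit
    head_speed_deg_s: float   # mean angular head speed
    locomotion_m_s: float     # mean walking speed


def classify_engagement(w: SensorWindow) -> Engagement:
    """Rule-based engagement estimate; every threshold here is an assumption."""
    # Long dwell, steady head, and near-standstill suggest focused attention.
    if w.gaze_dwell_s >= 3.0 and w.head_speed_deg_s <= 20.0 and w.locomotion_m_s <= 0.2:
        return Engagement.HIGH
    # A brief glance or brisk walking suggests the visitor is passing by.
    if w.gaze_dwell_s < 1.0 or w.locomotion_m_s > 0.8:
        return Engagement.LOW
    return Engagement.MEDIUM


# Hypothetical mapping from engagement level to requested explanation depth.
PROMPT_STYLE = {
    Engagement.LOW: "one short, inviting sentence",
    Engagement.MEDIUM: "a concise paragraph with key historical context",
    Engagement.HIGH: "a detailed explanation covering materials, use, and history",
}


def build_prompt(exhibit: str, level: Engagement) -> str:
    """Compose the instruction that would be sent to the language model."""
    return f"Describe the exhibit '{exhibit}' in {PROMPT_STYLE[level]}."


if __name__ == "__main__":
    window = SensorWindow(gaze_dwell_s=4.2, head_speed_deg_s=8.0, locomotion_m_s=0.05)
    level = classify_engagement(window)
    print(level.value, "->", build_prompt("loom", level))
```

Because the rules are a handful of comparisons on precomputed features, each decision is cheap to evaluate and can be traced back to the specific threshold that triggered it, which is the kind of transparency the abstract attributes to a rule-based classifier.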
