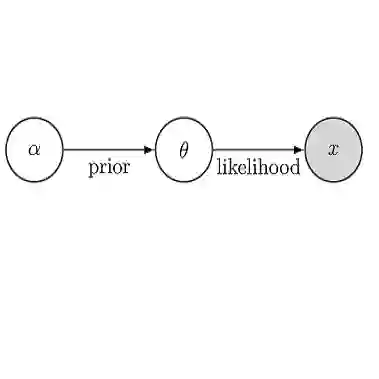

We consider the problem of sampling from an unknown distribution for which only a sufficiently large number of training samples are available. Such settings have recently drawn considerable interest in the context of generative modelling and Bayesian inference. In this paper, we propose a generative model combining Schrödinger bridges and Langevin dynamics. Schrödinger bridges over an appropriate reversible reference process are used to approximate the conditional transition probability from the available training samples, which is then implemented in a discrete-time reversible Langevin sampler to generate new samples. By setting the kernel bandwidth in the reference process to match the time step size used in the unadjusted Langevin algorithm, our method effectively circumvents the stability issues typically associated with the time-stepping of stiff stochastic differential equations. Moreover, we introduce a novel split-step scheme, ensuring that the generated samples remain within the convex hull of the training samples. Our framework extends naturally to conditional sampling and to Bayesian inference problems. We demonstrate the performance of our proposed scheme through experiments on synthetic datasets of increasing dimension and on a conditional sampling problem arising in stochastic subgrid-scale parametrization.
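To make the ingredients named in the abstract concrete, the following is a minimal sketch of one plausible reading of the scheme, not the authors' implementation. It assumes a Gaussian kernel with variance `eps` as the reference process, a symmetric Sinkhorn scaling to obtain a bistochastic (hence reversible) Markov matrix on the training samples, and a split step consisting of a noise step with the same `eps` followed by a convex combination of the training samples. All function names, the toy data, and the parameter values are hypothetical; details of the actual method (for instance any variable bandwidth or the exact form of the transition weights) may differ.

```python
import numpy as np

def sq_dists(a, b):
    """Pairwise squared Euclidean distances between rows of a and rows of b."""
    return ((a[:, None, :] - b[None, :, :]) ** 2).sum(axis=-1)

def symmetric_sinkhorn(K, n_iter=200):
    """Find v > 0 such that diag(v) @ K @ diag(v) has unit row sums.

    Uses the damped fixed-point iteration v <- sqrt(v / (K v)), whose
    fixed points satisfy v_i * (K v)_i = 1.
    """
    v = np.ones(K.shape[0])
    for _ in range(n_iter):
        v = np.sqrt(v / (K @ v))
    return v

def transition_weights(x, train, v, eps):
    """Probability vector over training samples for an arbitrary state x.

    Assumed form: w_j proportional to v_j * exp(-|x - x_j|^2 / (2 eps)),
    computed in log space to avoid underflow far from the data.
    """
    logits = np.log(v) - sq_dists(x[None, :], train)[0] / (2.0 * eps)
    logits -= logits.max()
    w = np.exp(logits)
    return w / w.sum()

def split_step(x, train, v, eps, rng):
    """One split step: Gaussian noise, then a convex combination of samples."""
    # Noise half-step; its variance eps matches the kernel bandwidth.
    x_half = x + np.sqrt(eps) * rng.standard_normal(x.shape)
    # Weights are nonnegative and sum to one, so the result lies in the
    # convex hull of the training samples by construction.
    return transition_weights(x_half, train, v, eps) @ train

# Toy data (hypothetical): 200 samples from a 2-D standard normal.
rng = np.random.default_rng(0)
train = rng.standard_normal((200, 2))
eps = 0.1  # shared by the kernel bandwidth and the time step

K = np.exp(-sq_dists(train, train) / (2.0 * eps))
v = symmetric_sinkhorn(K)

# Run the chain, starting from one of the training samples.
x = train[0].copy()
samples = []
for _ in range(500):
    x = split_step(x, train, v, eps, rng)
    samples.append(x)
samples = np.array(samples)
```

In this sketch no gradient of a log-density is ever evaluated: the drift is replaced by the kernel-weighted average of the training samples, which is bounded regardless of `eps`. This is one way to see why tying the bandwidth to the step size avoids the step-size restrictions of an explicit discretization.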
