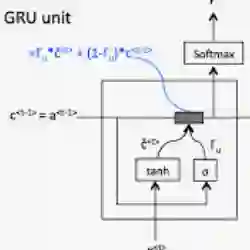

While reasoning over long contexts is crucial for many real-world applications, it remains challenging for large language models (LLMs), whose performance degrades as the context length grows. The recent work MemAgent tackles this by processing the context chunk by chunk in an RNN-like loop and updating a textual memory that is used for the final answer. However, this naive recurrent memory update has two crucial drawbacks: (i) the memory can quickly explode because it is updated indiscriminately, even on evidence-free chunks; and (ii) the loop lacks an exit mechanism, leading to unnecessary computation even after sufficient evidence has been collected. To address these issues, we propose GRU-Mem, which incorporates two text-controlled gates for more stable and efficient long-context reasoning. Specifically, in GRU-Mem, the memory is updated only when the update gate is open, and the recurrent loop exits immediately once the exit gate is open. To endow the model with these capabilities, we introduce two reward signals, $r^{\text{update}}$ and $r^{\text{exit}}$, within end-to-end RL, rewarding correct updating and exiting behaviors respectively. Experiments on various long-context reasoning tasks demonstrate the effectiveness and efficiency of GRU-Mem, which generally outperforms the vanilla MemAgent with up to 400\% inference speed acceleration.
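
The gated loop described above can be illustrated with a minimal Python sketch. It is not the authors' implementation: the names `StepOutput`, `gated_memory_loop`, and `toy_step` are hypothetical, and the keyword rule in `toy_step` merely stands in for the LLM that would emit the candidate memory and the two gate decisions as text.

```python
from dataclasses import dataclass
from typing import Callable, Iterable, Tuple


@dataclass
class StepOutput:
    """One (hypothetical) model step: a proposed memory plus two gate decisions."""
    candidate_memory: str  # memory text proposed after reading the current chunk
    update: bool           # update gate: commit the candidate memory if True
    exit_loop: bool        # exit gate: stop reading further chunks if True


# Assumed interface: (question, current memory, chunk) -> StepOutput
StepFn = Callable[[str, str, str], StepOutput]


def gated_memory_loop(
    question: str, chunks: Iterable[str], step: StepFn
) -> Tuple[str, int]:
    """Read chunks in order, updating memory only when the update gate opens
    and stopping as soon as the exit gate opens.

    Returns the final memory and the number of chunks actually processed.
    """
    memory = ""
    steps = 0
    for chunk in chunks:
        out = step(question, memory, chunk)
        steps += 1
        if out.update:
            # Gate open: overwrite memory with the proposed text.
            memory = out.candidate_memory
        if out.exit_loop:
            # Enough evidence collected: skip the remaining chunks.
            break
    return memory, steps


def toy_step(question: str, memory: str, chunk: str) -> StepOutput:
    """Toy stand-in for the LLM: treat any chunk containing 'code' as evidence."""
    has_evidence = "code" in chunk
    candidate = (memory + " " + chunk).strip() if has_evidence else memory
    return StepOutput(candidate, update=has_evidence, exit_loop=has_evidence)
```

With the chunks `["weather is nice", "the code is 42", "more filler"]`, the toy step leaves the memory untouched on the first chunk, stores the second, and exits before reading the third. A loop without gates would instead rewrite the memory on every chunk and always read to the end.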
