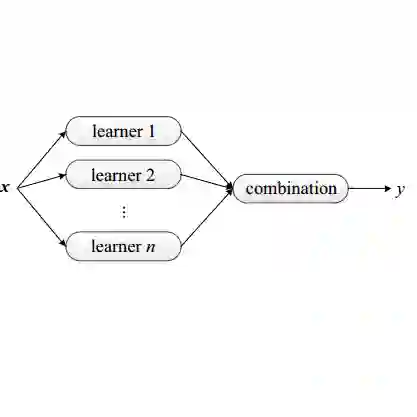

This paper presents our experiments and results for Task 6 of the CheckThat! Lab at CLEF 2024: Robustness of Credibility Assessment with Adversarial Examples (InCrediblAE). The primary objective of the task was to generate adversarial examples in five problem domains in order to evaluate the robustness of widely used text classification methods (fine-tuned BERT, BiLSTM, and RoBERTa) when applied to credibility assessment problems. This study explores the application of ensemble learning to enhance adversarial attacks on natural language processing (NLP) models. We systematically tested and refined several adversarial attack methods, including BERT-Attack, genetic algorithms, TextFooler, and CLARE, on five datasets covering various misinformation tasks. By developing modified versions of BERT-Attack and hybrid methods, we achieved significant improvements in attack effectiveness. Our results demonstrate the potential of modifying and combining multiple methods to create more sophisticated and effective adversarial attack strategies, which in turn contributes to the development of more robust and secure systems.
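To make the idea of combining attack methods concrete, the following is a minimal sketch of one possible hybrid scheme: a sequential fallback that tries each attack in turn and returns the first candidate that changes the victim's prediction. It is an illustration under stated assumptions, not the method used in this work. The names `toy_victim`, `synonym_attack`, `char_swap_attack`, and `ensemble_attack` are hypothetical, the victim is a keyword rule standing in for a fine-tuned classifier, and the two toy attacks stand in for methods such as TextFooler or BERT-Attack.

```python
from typing import Callable, Iterable, List, Optional

Classifier = Callable[[str], int]        # text -> predicted label
Attack = Callable[[str], Iterable[str]]  # text -> candidate rewrites


def toy_victim(text: str) -> int:
    """Hypothetical stand-in for a real classifier: label 1 if a trigger word occurs."""
    triggers = {"shocking", "hoax"}
    return int(any(word in triggers for word in text.lower().split()))


def synonym_attack(text: str) -> Iterable[str]:
    """Toy word-level attack: replace one word with a hand-picked synonym."""
    synonyms = {"shocking": "surprising", "hoax": "fabrication"}
    words = text.split()
    for i, word in enumerate(words):
        if word.lower() in synonyms:
            yield " ".join(words[:i] + [synonyms[word.lower()]] + words[i + 1:])


def char_swap_attack(text: str) -> Iterable[str]:
    """Toy character-level attack: swap the 2nd and 3rd characters of one word."""
    words = text.split()
    for i, word in enumerate(words):
        if len(word) > 3:
            swapped = word[0] + word[2] + word[1] + word[3:]
            yield " ".join(words[:i] + [swapped] + words[i + 1:])


def ensemble_attack(text: str, victim: Classifier, attacks: List[Attack]) -> Optional[str]:
    """Try each attack in order; return the first candidate that flips the label."""
    original_label = victim(text)
    for attack in attacks:
        for candidate in attack(text):
            if victim(candidate) != original_label:
                return candidate
    return None  # no attack in the ensemble succeeded


if __name__ == "__main__":
    attacks = [synonym_attack, char_swap_attack]
    print(ensemble_attack("shocking news about vaccine", toy_victim, attacks))
```

The order of the attacks is a design choice: placing the attack that tends to preserve meaning best at the front means the more disruptive ones are used only when the earlier ones fail.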
