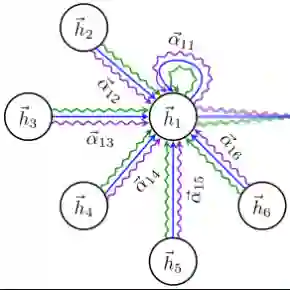

Recent advances in text-to-speech and voice conversion technologies have enabled the creation of highly convincing synthetic speech. While these innovations offer numerous practical benefits, they also pose significant security risks when misused, so there is an urgent need to detect synthetic speech. Phoneme features provide a powerful speech representation for deepfake detection. However, previous phoneme-based detection approaches typically focused on specific phonemes, overlooking temporal inconsistencies across the entire phoneme sequence. In this paper, we develop a new mechanism for detecting speech deepfakes by identifying inconsistencies in phoneme-level speech features. We design an adaptive phoneme pooling technique that extracts sample-specific phoneme-level features from frame-level speech data. By applying this technique to features extracted by pre-trained audio models on previously unseen deepfake datasets, we demonstrate that deepfake samples often exhibit phoneme-level inconsistencies when compared to genuine speech. To further enhance detection accuracy, we propose a deepfake detector that uses a graph attention network to model the temporal dependencies of phoneme-level features. Additionally, we introduce a random phoneme substitution augmentation technique to increase feature diversity during training. Extensive experiments on four benchmark datasets show that our method outperforms existing state-of-the-art detection methods.
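To make the idea of pooling frame-level features into phoneme-level features concrete, the following is a minimal sketch, not the adaptive pooling technique proposed in the paper, whose details the abstract does not give. It assumes that a per-frame phoneme label is already available (for example from a phoneme recognizer) and simply averages each run of consecutive frames that share a label. The function name `pool_frames_by_phoneme` and the integer label encoding are hypothetical and chosen only for illustration.

```python
import numpy as np


def pool_frames_by_phoneme(frames, frame_labels):
    """Mean-pool consecutive frames that share a phoneme label.

    Illustrative assumption: plain mean pooling over hypothetical
    per-frame labels; this is not the paper's adaptive pooling.

    frames:       array of shape (T, D), one feature vector per frame.
    frame_labels: length-T sequence of integer phoneme labels.
    Returns (pooled, segment_labels) with shapes (P, D) and (P,).
    """
    frames = np.asarray(frames, dtype=float)
    labels = np.asarray(frame_labels)
    if frames.ndim != 2 or frames.shape[0] != labels.shape[0]:
        raise ValueError("frames must be (T, D) with one label per frame")
    if labels.size == 0:
        return np.empty((0, frames.shape[1])), labels

    # A new segment starts at frame 0 and wherever the label changes.
    starts = np.flatnonzero(np.r_[True, labels[1:] != labels[:-1]])
    # Sum the frames of each segment, then divide by segment length.
    sums = np.add.reduceat(frames, starts, axis=0)
    lengths = np.diff(np.r_[starts, labels.size])
    return sums / lengths[:, None], labels[starts]


# Six frames with 2-dimensional features, covering three phoneme segments.
frames = [[1, 1], [3, 3], [10, 0], [0, 4], [0, 6], [0, 8]]
frame_labels = [7, 7, 2, 5, 5, 5]
pooled, segment_labels = pool_frames_by_phoneme(frames, frame_labels)
```

Here `pooled` holds one vector per phoneme segment, so a downstream model can compare or relate segments across the utterance rather than individual frames.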
