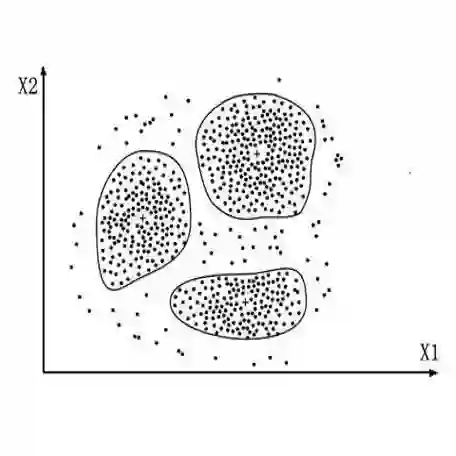

Text clustering remains valuable in real-world applications where manual labeling is cost-prohibitive. It supports efficient organization and analysis of information by grouping similar texts according to their representations. However, this approach requires embedders fine-tuned on the downstream data and sophisticated similarity metrics. To address this issue, this study presents a novel framework for text clustering that leverages the in-context learning capacity of Large Language Models (LLMs). Instead of fine-tuning embedders, we propose to transform text clustering into a classification task performed by an LLM. First, we prompt the LLM to generate potential labels for a given dataset. Second, after merging similar labels generated by the LLM, we prompt the LLM to assign the most appropriate label to each sample in the dataset. In our experiments, the framework achieves performance comparable or superior to state-of-the-art embedding-based clustering methods, without requiring complex fine-tuning or clustering algorithms. Our code is publicly available at https://github.com/ECNU-Text-Computing/Text-Clustering-via-LLM.
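
The two stages described above (label generation with merging, followed by per-sample classification) can be illustrated with a minimal sketch. This is not the authors' implementation, which lives in the linked repository. The `LLM` callable type, all function names, the prompt wording, the batch size, and the `"unassigned"` fallback are assumptions made for illustration. `toy_llm` is a keyword-based stand-in so the example runs without a model.

```python
from typing import Callable, Dict, List

# Hypothetical interface: any function mapping a prompt string to a reply string.
LLM = Callable[[str], str]


def _parse_lines(reply: str) -> List[str]:
    """Read one lowercase label per non-empty line, dropping duplicates in order."""
    labels = [line.strip().strip("-* ").lower() for line in reply.splitlines()]
    return list(dict.fromkeys(label for label in labels if label))


def generate_labels(texts: List[str], llm: LLM, batch_size: int = 20) -> List[str]:
    """Stage 1a: ask the LLM to propose candidate labels, one batch at a time."""
    candidates: List[str] = []
    for start in range(0, len(texts), batch_size):
        batch = texts[start:start + batch_size]
        prompt = ("Propose a short topic label for each text, one label per line.\n"
                  + "\n".join(f"- {t}" for t in batch))
        candidates.extend(_parse_lines(llm(prompt)))
    return list(dict.fromkeys(candidates))


def merge_labels(labels: List[str], llm: LLM) -> List[str]:
    """Stage 1b: ask the LLM to merge labels that mean the same thing."""
    prompt = ("Merge labels that mean the same thing; return one label per line.\n"
              + "\n".join(labels))
    return _parse_lines(llm(prompt))


def assign_labels(texts: List[str], labels: List[str], llm: LLM) -> List[str]:
    """Stage 2: ask the LLM to pick one label from the merged set for each text."""
    assignments: List[str] = []
    for text in texts:
        prompt = ("Choose the single best label for the text. Answer with the label only.\n"
                  f"Labels: {', '.join(labels)}\n"
                  f"Text: {text}")
        answer = llm(prompt).strip().lower()
        # Assumed fallback: replies outside the label set are marked "unassigned".
        assignments.append(answer if answer in labels else "unassigned")
    return assignments


def cluster_texts(texts: List[str], llm: LLM) -> Dict[str, List[str]]:
    """Run both stages and group the texts by their assigned label."""
    labels = merge_labels(generate_labels(texts, llm), llm)
    clusters: Dict[str, List[str]] = {}
    for text, label in zip(texts, assign_labels(texts, labels, llm)):
        clusters.setdefault(label, []).append(text)
    return clusters


def toy_llm(prompt: str) -> str:
    """Keyword-based stand-in for a real model; for demonstration only."""
    def topic(text: str) -> str:
        return "banking" if ("card" in text or "bank" in text) else "travel"

    head, *body = prompt.splitlines()
    if head.startswith("Propose"):
        return "\n".join(topic(line) for line in body)
    if head.startswith("Merge"):
        return "\n".join(dict.fromkeys(body))
    return topic(body[-1])  # classification prompt: the last line holds the text
```

Calling `cluster_texts(texts, toy_llm)` on a small list of strings returns a dictionary mapping each label to its texts. In practice, `toy_llm` would be replaced by a wrapper around a real model API, and reply parsing would likely need to be more defensive than this sketch.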
