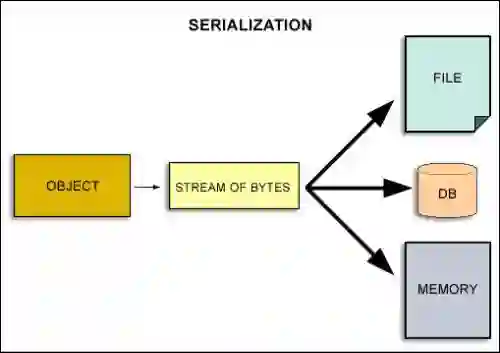

Code reasoning tasks are becoming prevalent in large language model (LLM) assessments. Yet few studies examine how real-world complexities affect code reasoning, e.g., inter- or intra-procedural dependencies, API calls, deeply nested constructs, and non-primitive complex types. Evaluating LLMs only on simple code that lacks these features threatens the validity of assumptions about their generalizability in practice. To enable a more realistic evaluation of code reasoning, we construct a dataset of 1200 reasoning problems from two sources: existing code reasoning benchmarks and popular GitHub Python repositories. Our pipeline leverages static and dynamic program analysis to automatically serialize/deserialize the compound, complex, and custom types that are common in real-world code, going far beyond the primitive types used in prior studies. A key feature of our dataset is that each reasoning problem is categorized as Lower Complexity (LC) or Higher Complexity (HC) via a principled majority-vote mechanism over nine diverse and interpretable code-complexity metrics. This yields two well-separated, semantically meaningful categories of problem difficulty suitable for precise calibration of LLM reasoning ability. The categorization shows that the problems used in existing code-reasoning evaluations mostly belong to the LC category and thus fail to represent real-world complexity.
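As a rough illustration of the majority-vote categorization, the sketch below labels a problem HC when more than half of its metrics exceed a per-metric threshold. The abstract does not name the nine metrics or say how each vote is cast, so the metric names, the threshold values, and the "exceeds threshold" voting rule are all assumptions made for illustration.

```python
from typing import Mapping

# Hypothetical metric names and thresholds; the abstract does not list
# the nine metrics or their cut-offs, so these are placeholders only.
HYPOTHETICAL_THRESHOLDS = {
    "cyclomatic_complexity": 5,
    "max_nesting_depth": 3,
    "lines_of_code": 30,
    "num_calls": 5,
    "num_api_calls": 2,
    "num_non_primitive_types": 1,
    "num_loops": 2,
    "num_branches": 4,
    "num_variables": 10,
}


def classify_complexity(
    metrics: Mapping[str, float],
    thresholds: Mapping[str, float],
) -> str:
    """Return "HC" if a strict majority of metrics exceed their threshold, else "LC".

    Assumes each metric casts one binary vote; with an odd number of
    metrics (such as nine) a tie cannot occur.
    """
    if set(metrics) != set(thresholds):
        raise ValueError("metrics and thresholds must cover the same names")
    votes_for_hc = sum(
        1 for name, value in metrics.items() if value > thresholds[name]
    )
    return "HC" if votes_for_hc > len(metrics) / 2 else "LC"


# Example: five of the nine hypothetical metrics exceed their thresholds.
example_metrics = {
    "cyclomatic_complexity": 8,
    "max_nesting_depth": 4,
    "lines_of_code": 25,
    "num_calls": 7,
    "num_api_calls": 3,
    "num_non_primitive_types": 0,
    "num_loops": 1,
    "num_branches": 6,
    "num_variables": 4,
}
label = classify_complexity(example_metrics, HYPOTHETICAL_THRESHOLDS)
```

Using an odd number of binary votes keeps the label well defined without a tie-breaking rule, which may be one reason a nine-metric vote is convenient.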
