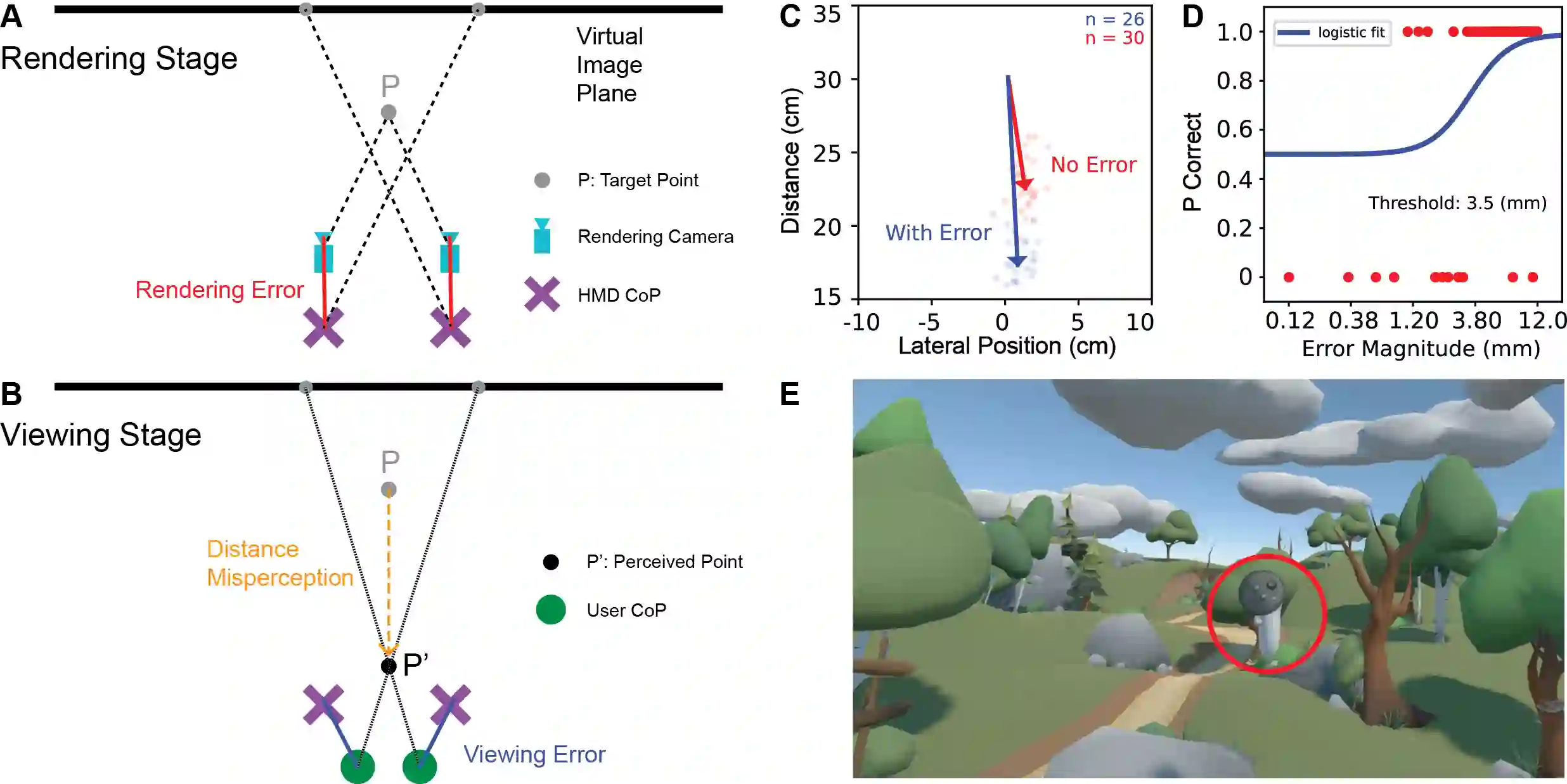

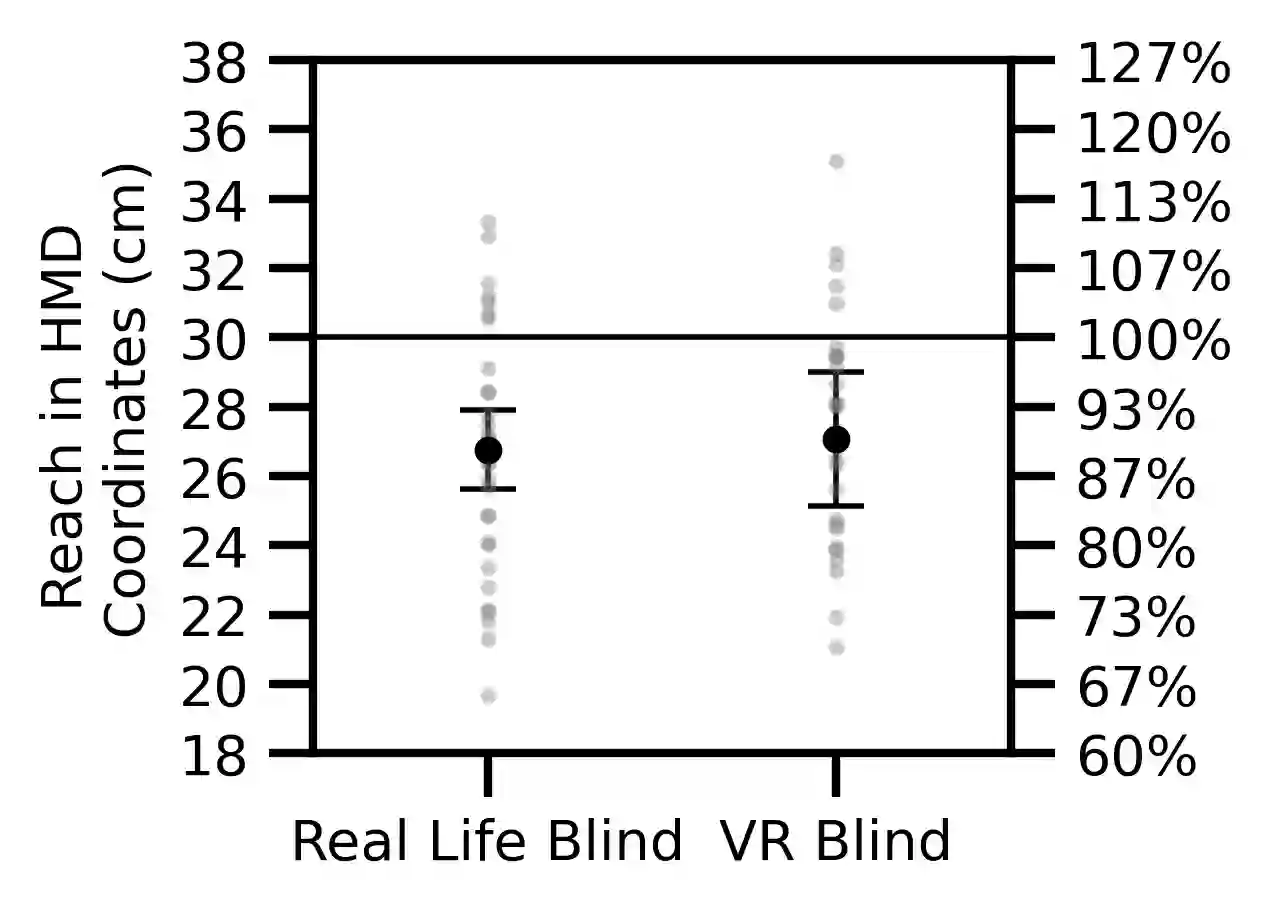

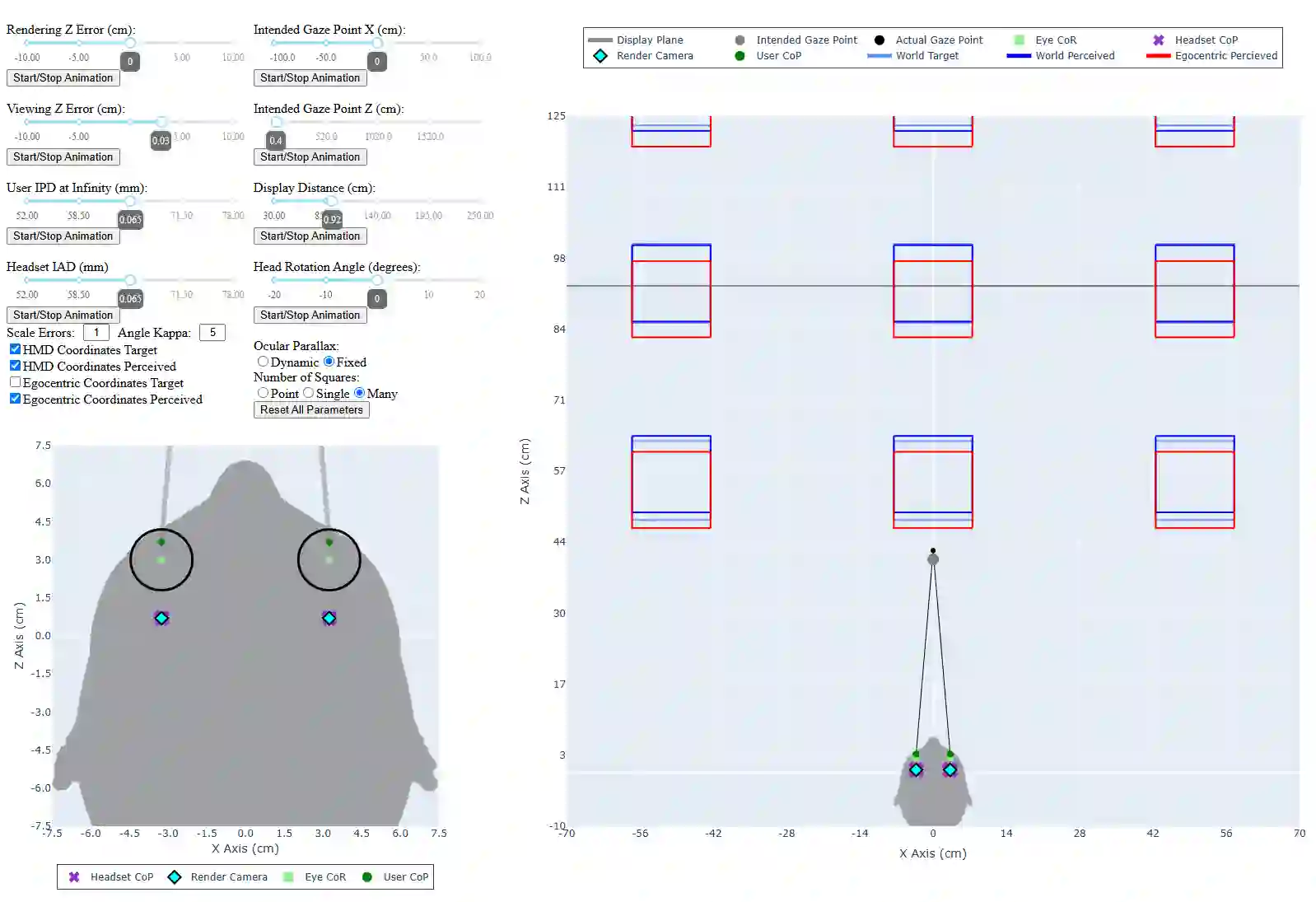

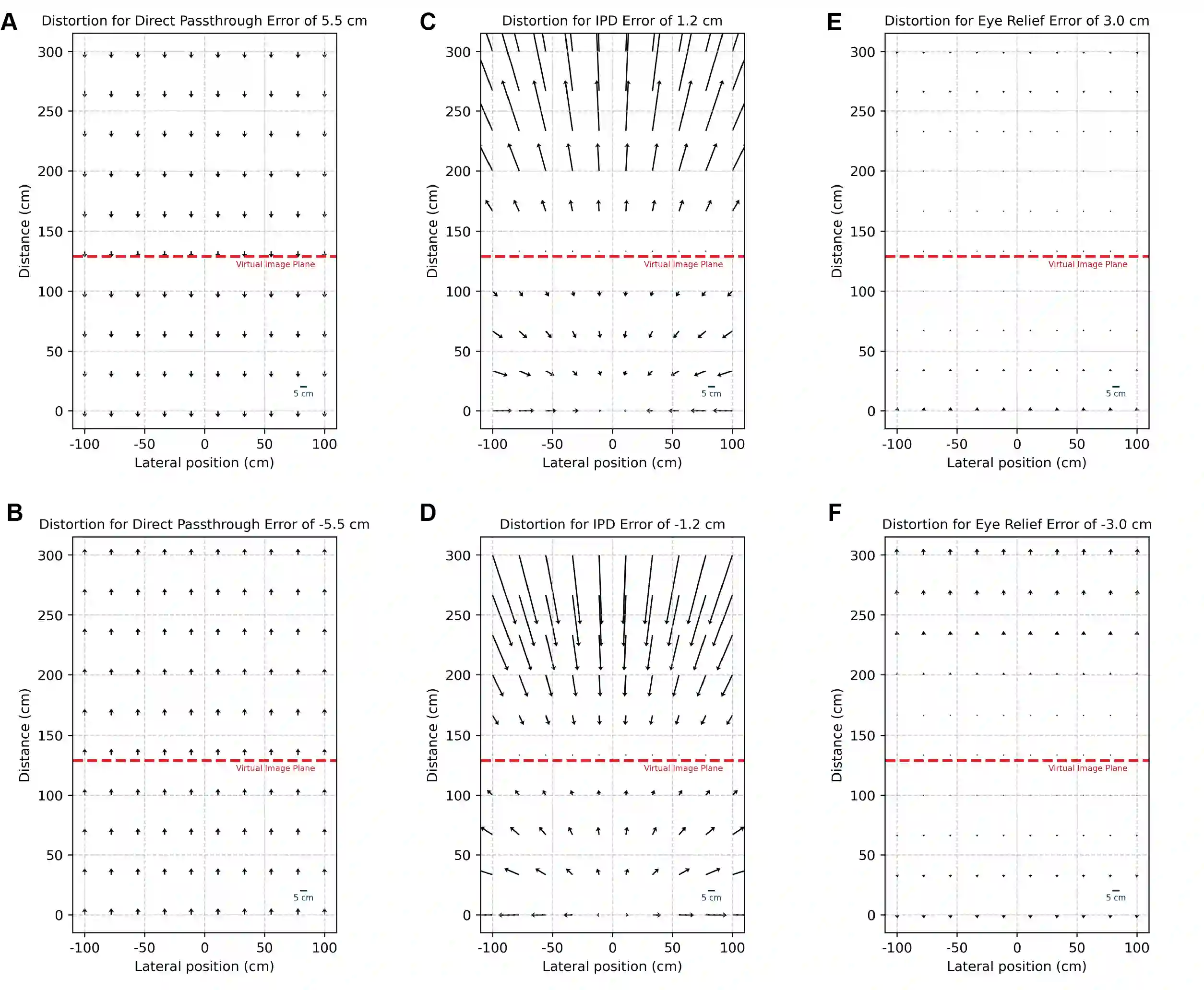

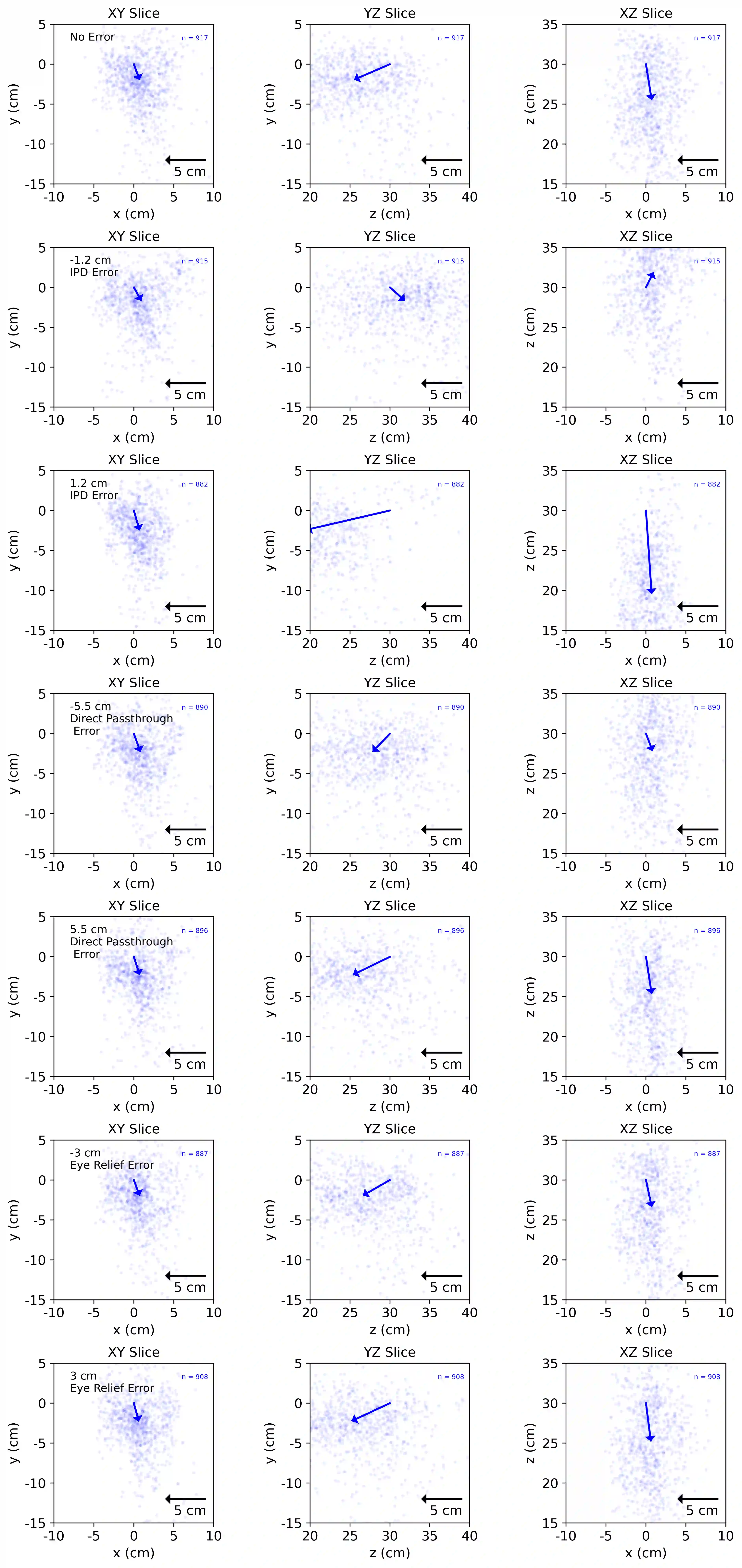

Stereoscopic head-mounted displays (HMDs) render and present binocular images to create an egocentric 3D percept for the HMD user. Within this rendering and presentation pipeline, errors in rendering camera position and in viewing position can cause the depth and distance that a user perceives to deviate from the intended underlying geometry. For example, rendering errors can arise when the HMD render cameras are incorrectly positioned relative to the assumed centers of projection of the HMD displays, and viewing errors can arise when users view stereo geometry from an incorrect location in the HMD eyebox. In this work we present a geometric framework that predicts errors in distance perception arising from inaccurate HMD perspective geometry. To test these predictions experimentally, we build an HMD platform that reliably simulates rendering and viewing errors in a Quest 3 HMD with eye tracking. We present a series of five experiments that explore the efficacy of this geometric framework and show that errors in perspective geometry can induce both under- and over-estimation of perceived distance. We further demonstrate how real-time visual feedback can be used to dynamically recalibrate visuomotor mapping so that an accurate reach distance is achieved even when the perceived visual distance is distorted by geometric error.
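The abstract does not state the framework's equations. Purely as an illustration of how a rendering error could shift perceived distance, the following minimal sketch applies textbook stereo triangulation to a point on the midline, assuming a single fronto-parallel image plane at a fixed optical distance. The function name, its parameters, and the numeric values are hypothetical and are not taken from the paper; the authors' framework may differ.

```python
import math


def perceived_distance(rendered_distance_m, render_separation_m,
                       eye_separation_m, display_distance_m):
    """Triangulated distance of a midline point (illustrative assumption only).

    rendered_distance_m: intended distance of the point from the viewer.
    render_separation_m: separation between the two render cameras.
    eye_separation_m:    separation between the viewer's two eyes.
    display_distance_m:  distance of the assumed flat image plane.
    """
    # Horizontal parallax drawn on the image plane by the render cameras
    # (negative values mean crossed parallax, i.e. nearer than the plane).
    parallax = (render_separation_m
                * (rendered_distance_m - display_distance_m)
                / rendered_distance_m)
    # If the parallax reaches the eye separation, the lines of sight no
    # longer converge in front of the viewer.
    if parallax >= eye_separation_m:
        return math.inf
    # Intersect the two lines of sight from the viewer's actual eyes.
    return eye_separation_m * display_distance_m / (eye_separation_m - parallax)


if __name__ == "__main__":
    # Hypothetical values: 63 mm eye separation, image plane at 1 m,
    # target rendered at 0.5 m (roughly reach distance).
    for cam_sep in (0.056, 0.063, 0.070):
        d = perceived_distance(0.5, cam_sep, 0.063, 1.0)
        print(f"render separation {cam_sep:.3f} m -> triangulated {d:.3f} m")
```

Under these assumptions, a render-camera separation that matches the eye separation reproduces the intended distance, while a wider or narrower separation pulls a near target closer or pushes it farther, which is consistent in spirit with the under- and over-estimation described above.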
