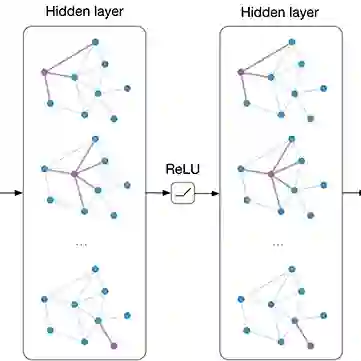

Backdoor attacks pose a critical threat to the security of deep neural networks, yet existing universal backdoors often rely on visually salient trigger patterns, which makes them easier to detect and less practical at scale. In this work, we introduce a novel imperceptible universal backdoor attack that simultaneously controls all target classes with minimal poisoning while preserving stealth. Our key idea is to use graph convolutional networks (GCNs) to model inter-class relationships and to generate class-specific perturbations that are both effective and visually imperceptible. The proposed framework optimizes a dual-objective loss that balances stealthiness (measured by image-fidelity metrics such as PSNR) against attack success rate (ASR), enabling scalable multi-target backdoor injection. Extensive experiments on ImageNet-1K with ResNet architectures show that our method achieves high ASR (up to 91.3%) with poisoning rates as low as 0.16%, while maintaining benign accuracy and evading state-of-the-art defenses. These results highlight the emerging risk of invisible universal backdoors and call for more robust detection and mitigation strategies.
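The abstract does not spell out the generator or the loss, so the NumPy sketch below is only an illustration under stated assumptions. It assumes a two-layer GCN with Kipf and Welling's symmetric normalization over a class graph `adj`, mapping one embedding per class to one perturbation per class, with `tanh` bounding every pixel by `eps`. It models the dual objective as a targeted cross-entropy (a proxy for ASR) plus a hinge penalty whenever PSNR drops below a goal. All function names, the linear surrogate classifier `clf_weights`, and the parameters `lam`, `psnr_goal`, and `eps` are hypothetical, not the authors' implementation.

```python
import numpy as np


def normalized_adjacency(adj):
    """Symmetric GCN normalization D^-1/2 (A + I) D^-1/2 (Kipf & Welling)."""
    a_hat = adj + np.eye(adj.shape[0])          # add self-loops
    d_inv_sqrt = 1.0 / np.sqrt(a_hat.sum(axis=1))
    return a_hat * d_inv_sqrt[:, None] * d_inv_sqrt[None, :]


def class_perturbations(adj, class_feats, w1, w2, eps, img_shape):
    """Hypothetical generator: two graph convolutions map one embedding per
    class to one perturbation per class; tanh bounds every pixel by eps."""
    a_norm = normalized_adjacency(adj)
    hidden = np.maximum(a_norm @ class_feats @ w1, 0.0)  # GCN layer + ReLU
    out = a_norm @ hidden @ w2                            # (C, prod(img_shape))
    return eps * np.tanh(out).reshape((adj.shape[0],) + tuple(img_shape))


def psnr(clean, poisoned, max_val=1.0):
    """Peak signal-to-noise ratio in dB (higher = less visible change)."""
    mse = np.mean((clean - poisoned) ** 2)
    return float("inf") if mse == 0 else 10.0 * np.log10(max_val**2 / mse)


def targeted_ce(logits, target):
    """Cross-entropy toward the target class; lower loss ~ higher ASR."""
    z = logits - logits.max()                   # numerically stable softmax
    return float(np.log(np.exp(z).sum()) - z[target])


def dual_objective(clean, delta, clf_weights, target, lam=0.1, psnr_goal=40.0):
    """Attack term plus lam * hinge penalty when PSNR drops below psnr_goal.
    clf_weights is a stand-in linear surrogate classifier (assumption)."""
    poisoned = np.clip(clean + delta, 0.0, 1.0)
    logits = clf_weights @ poisoned.ravel()
    stealth_penalty = max(0.0, psnr_goal - psnr(clean, poisoned))
    return targeted_ce(logits, target) + lam * stealth_penalty


# Example usage on toy sizes: 5 classes, 4x4 RGB "images".
rng = np.random.default_rng(0)
C, D, H, shape = 5, 8, 16, (4, 4, 3)
adj = np.triu((rng.random((C, C)) > 0.5).astype(float), 1)
adj = adj + adj.T                                # symmetric, no self-loops
deltas = class_perturbations(adj, rng.normal(size=(C, D)),
                             rng.normal(size=(D, H)),
                             rng.normal(size=(H, int(np.prod(shape)))),
                             eps=4 / 255, img_shape=shape)
clean = rng.random(shape)
loss = dual_objective(clean, deltas[2], rng.normal(size=(C, clean.size)), target=2)
```

The hinge form only penalizes stealth once PSNR falls below `psnr_goal`, so the attack term dominates while the perturbation remains faint; a plain weighted sum of the two terms would be an equally plausible reading of "dual-objective". For scale, a 0.16% poisoning rate on the 1,281,167-image ImageNet-1K training set amounts to roughly 2,050 poisoned images, about two per class if spread evenly across the 1,000 classes.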
