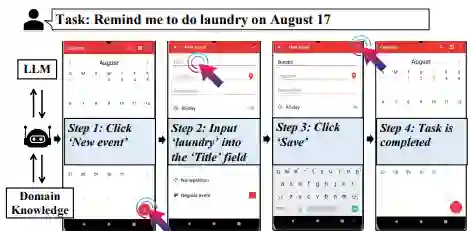

Recent advances in foundation models, particularly Large Language Models (LLMs) and Multimodal Large Language Models (MLLMs), have enabled intelligent agents capable of performing complex tasks. By leveraging the ability of (M)LLMs to process and interpret Graphical User Interfaces (GUIs), these agents can autonomously execute user instructions by simulating human-like interactions such as clicking and typing. This survey consolidates recent research on (M)LLM-based GUI agents, highlighting key innovations in data, frameworks, and applications. We begin by discussing representative datasets and benchmarks. Next, we summarize a unified framework that captures the essential components used in prior research, accompanied by a taxonomy. We then explore commercial applications of (M)LLM-based GUI agents. Drawing on existing work, we identify several key challenges and propose future research directions. We hope this paper will inspire further developments in the field of (M)LLM-based GUI agents.
