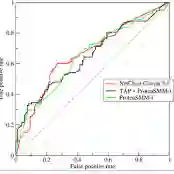

With large language models (LLMs) poised to become embedded in our daily lives, questions are being raised about the data they learned from. These range from the bias or misinformation that LLMs could retain from their training data to copyright and fair use of human-generated text. Yet as these questions emerge, developers of recent state-of-the-art LLMs have become increasingly reluctant to disclose details of their training corpora. Here we introduce the task of document-level membership inference for real-world LLMs, i.e., inferring whether an LLM has seen a given document during training. First, we propose a procedure for developing and evaluating document-level membership inference for LLMs that leverages commonly used training data sources and the model release date. We then propose a practical, black-box method to predict document-level membership and instantiate it on OpenLLaMA-7B with both books and academic papers. We show that our methodology performs well, reaching an AUC of 0.856 for books and 0.678 for papers. We then show that, on the document-level membership task, our approach outperforms the sentence-level membership inference attacks used in the privacy literature. We further evaluate whether smaller models might be less sensitive to document-level inference and show that OpenLLaMA-3B is approximately as sensitive to our approach as OpenLLaMA-7B. Finally, we consider two mitigation strategies and find that the AUC decreases only slowly when partial documents are considered and remains fairly high when the model precision is reduced. Taken together, our results show that accurate document-level membership can be inferred for LLMs, increasing the transparency of a technology poised to change our lives.
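To make the reported AUC values concrete, the sketch below shows one way a document-level membership score could be evaluated. It is illustrative only: the abstract does not specify how documents are scored, so `document_score` (the mean per-token log-probability) is an assumed stand-in rather than the method proposed here, and all names and numbers in the example are hypothetical.

```python
from typing import Sequence


def document_score(token_logprobs: Sequence[float]) -> float:
    """Collapse per-token log-probabilities into one document-level score.

    ASSUMPTION: mean log-probability is a placeholder aggregation chosen for
    illustration; it is not the method described in the abstract.
    """
    if not token_logprobs:
        raise ValueError("document has no tokens")
    return sum(token_logprobs) / len(token_logprobs)


def auc(member_scores: Sequence[float], non_member_scores: Sequence[float]) -> float:
    """Area under the ROC curve via pairwise comparison.

    Equals the probability that a randomly chosen member document receives a
    higher score than a randomly chosen non-member (ties count as one half).
    """
    if not member_scores or not non_member_scores:
        raise ValueError("both groups must be non-empty")
    wins = 0.0
    for m in member_scores:
        for n in non_member_scores:
            if m > n:
                wins += 1.0
            elif m == n:
                wins += 0.5
    return wins / (len(member_scores) * len(non_member_scores))


# Hypothetical scores: documents assumed to be in the training set vs. not.
members = [0.9, 0.8, 0.4]
non_members = [0.5, 0.3, 0.2]
print(f"AUC = {auc(members, non_members):.3f}")
```

An AUC of 0.5 corresponds to random guessing and 1.0 to perfect separation of members from non-members, which is the scale on which the 0.856 (books) and 0.678 (papers) figures should be read.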
