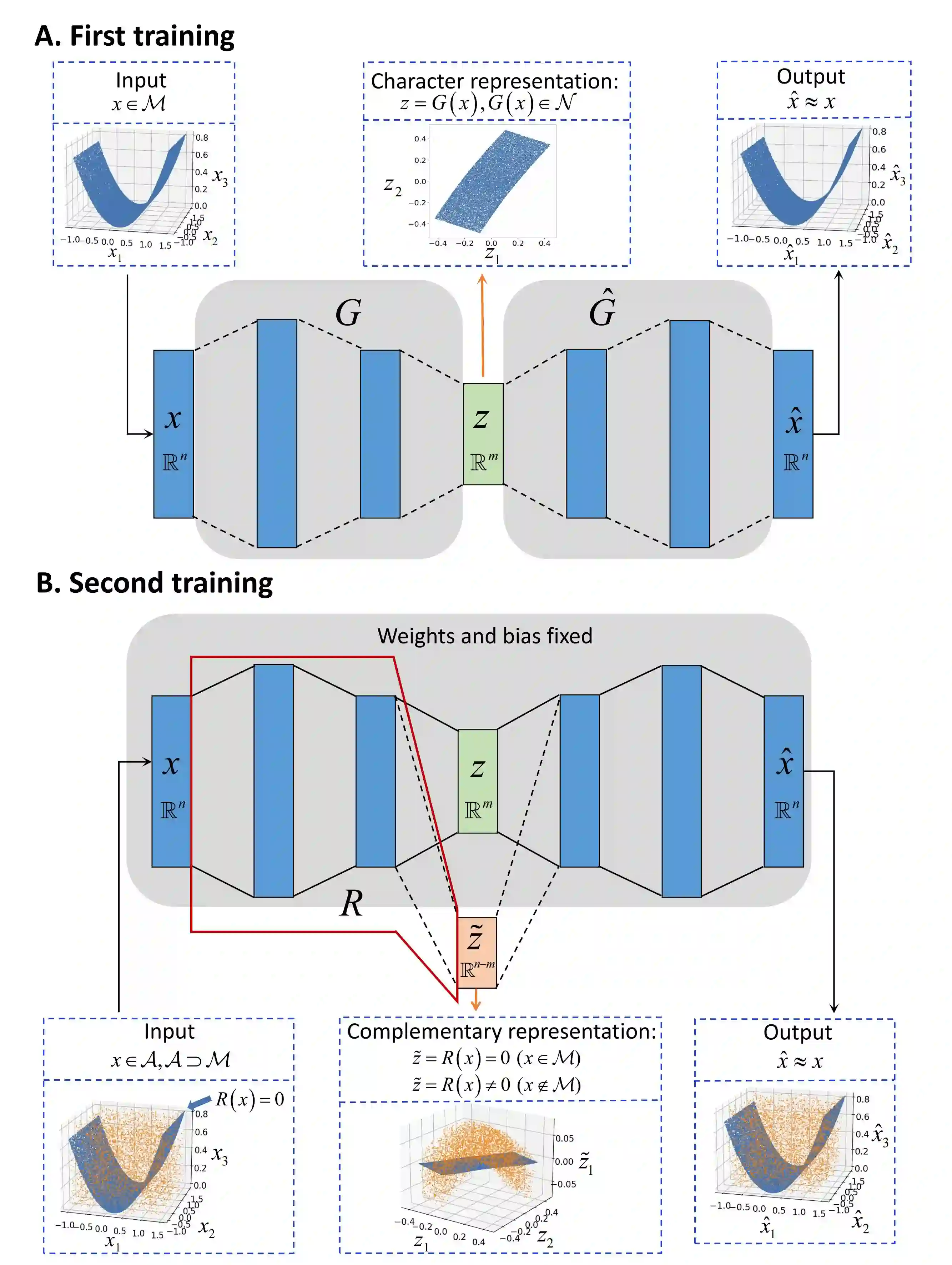

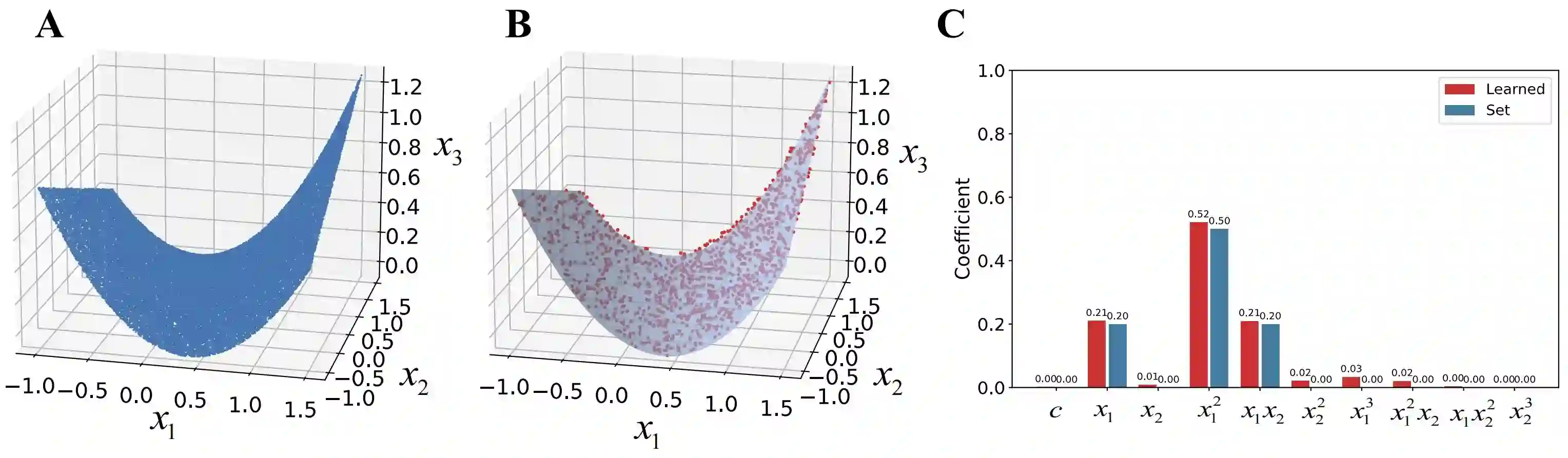

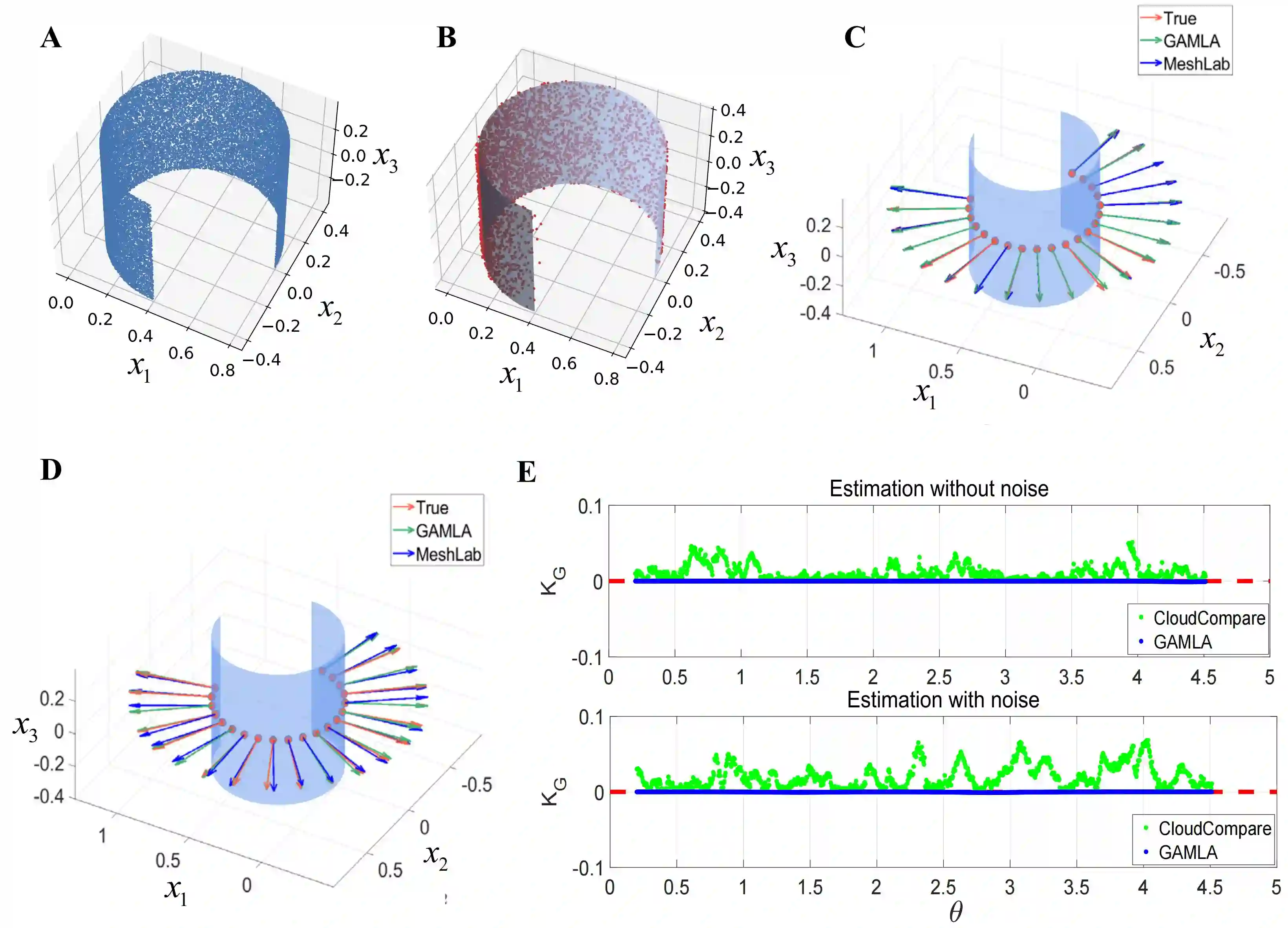

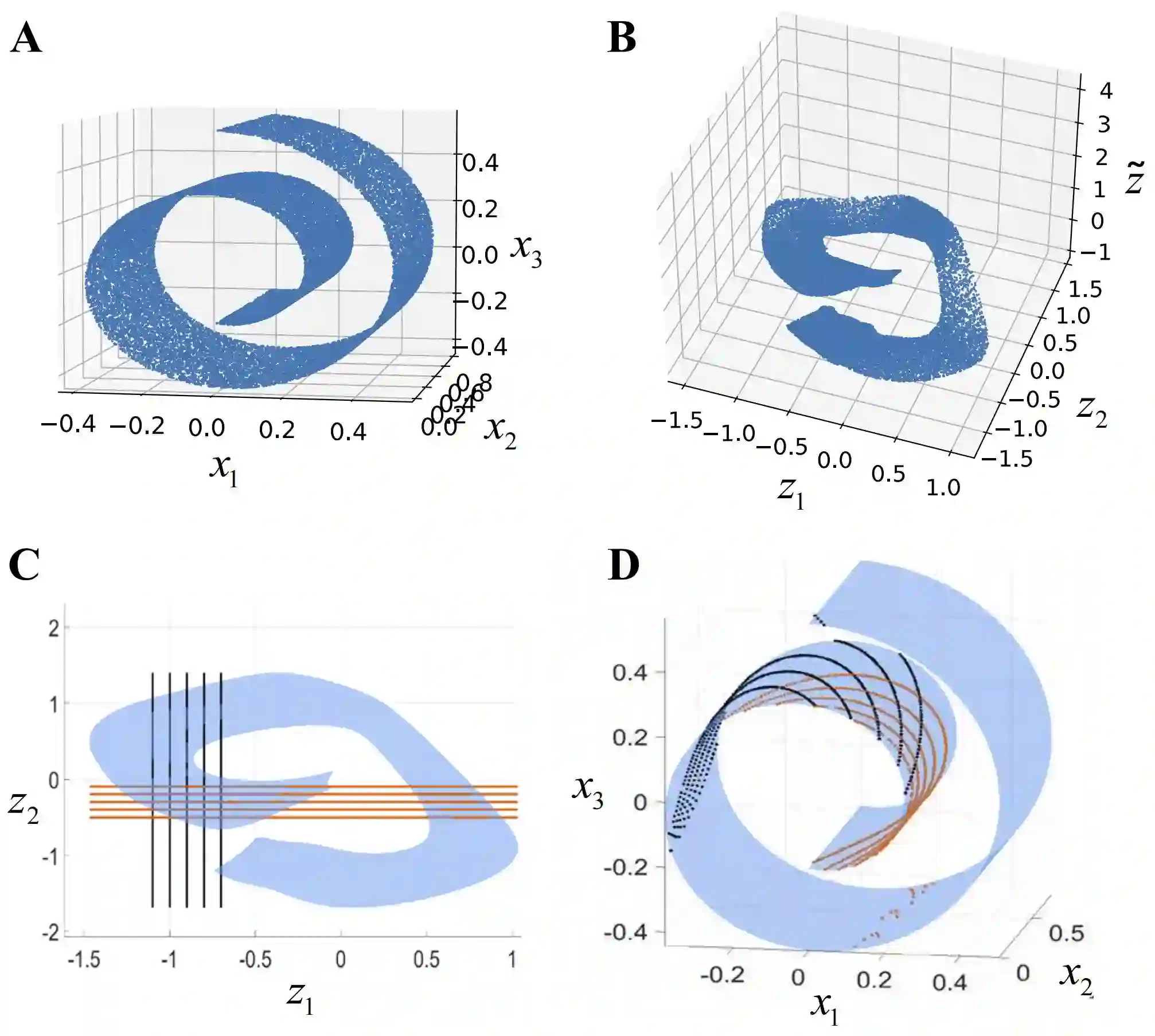

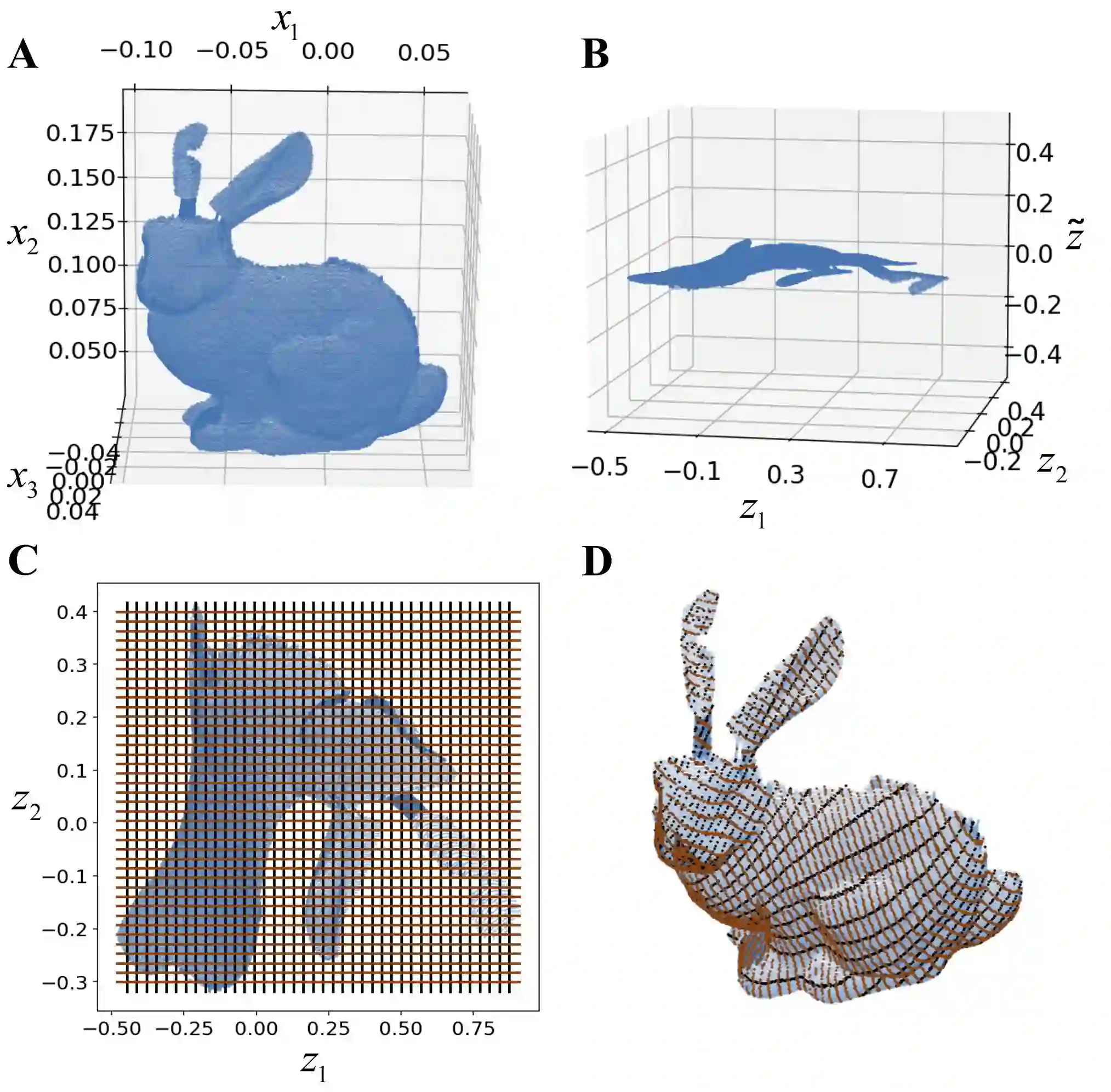

Understanding low-dimensional structures within high-dimensional data is crucial for visualization, interpretation, and denoising of complex datasets. Despite advances in manifold learning techniques, key challenges, such as limited global insight and the lack of interpretable analytical descriptions, remain unresolved. In this work, we introduce a novel framework, GAMLA (Global Analytical Manifold Learning using Auto-encoding). GAMLA employs a two-round training process within an auto-encoding framework to derive both a character representation and a complementary representation of the underlying manifold. With the character representation, the manifold is represented by a parametric function that unfolds it to provide global coordinates. With the complementary representation, an approximate explicit manifold description is developed, offering a global and analytical representation of smooth manifolds underlying high-dimensional datasets. This enables the analytical derivation of geometric properties such as curvature and normal vectors. Moreover, we find that the two representations together decompose the whole latent space and can thus characterize the local spatial structure surrounding the manifold, which proves particularly effective in anomaly detection and categorization. Through extensive experiments on benchmark datasets and real-world applications, GAMLA demonstrates computational efficiency and interpretability while providing precise geometric and structural insights. This framework bridges the gap between data-driven manifold learning and analytical geometry, presenting a versatile tool for exploring the intrinsic properties of complex datasets.
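To make concrete what deriving curvature and normal vectors from a manifold description can look like, here is a minimal sketch. It is not GAMLA's implementation: the abstract does not say how its description is constructed, so the sketch assumes, purely for illustration, that a curve in the plane is available as the zero level set of a scalar function `g(x, y)`. The function name `implicit_geometry`, the finite-difference step `h`, and the hand-written `circle` (standing in for a learned description) are all hypothetical.

```python
import math

def implicit_geometry(g, x, y, h=1e-3):
    """Unit normal and curvature of the level set g(x, y) = 0 at the point (x, y).

    Assumption: g is a smooth scalar function whose zero set is the curve.
    Derivatives are approximated with central finite differences.
    """
    # First derivatives (gradient of g).
    gx = (g(x + h, y) - g(x - h, y)) / (2 * h)
    gy = (g(x, y + h) - g(x, y - h)) / (2 * h)
    # Second derivatives (Hessian of g).
    gxx = (g(x + h, y) - 2 * g(x, y) + g(x - h, y)) / h**2
    gyy = (g(x, y + h) - 2 * g(x, y) + g(x, y - h)) / h**2
    gxy = (g(x + h, y + h) - g(x + h, y - h)
           - g(x - h, y + h) + g(x - h, y - h)) / (4 * h**2)

    grad_norm = math.hypot(gx, gy)
    # The normalized gradient is perpendicular to the level set.
    normal = (gx / grad_norm, gy / grad_norm)
    # Standard curvature formula for an implicit plane curve.
    curvature = (gxx * gy**2 - 2 * gxy * gx * gy + gyy * gx**2) / grad_norm**3
    return normal, curvature

def circle(x, y, r=2.0):
    """Hypothetical stand-in for a learned description: a circle of radius r."""
    return x**2 + y**2 - r**2

theta = 0.3
normal, curvature = implicit_geometry(circle, 2.0 * math.cos(theta), 2.0 * math.sin(theta))
```

For a circle of radius 2, the normal should point radially outward and the curvature should be close to 1/2, which gives a quick sanity check on the formulas.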
