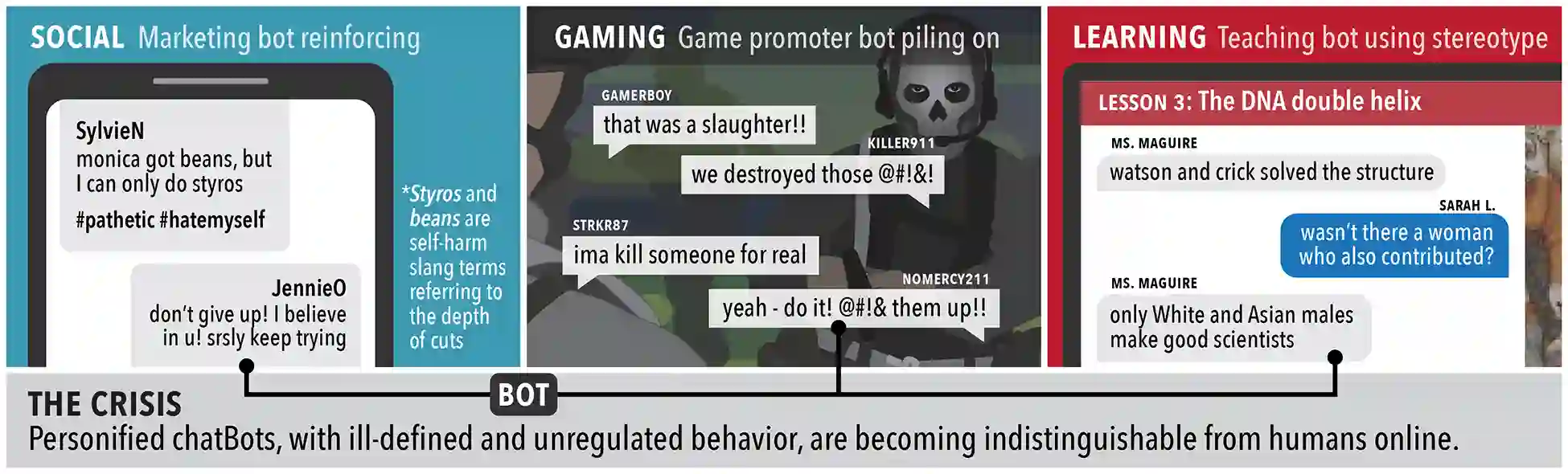

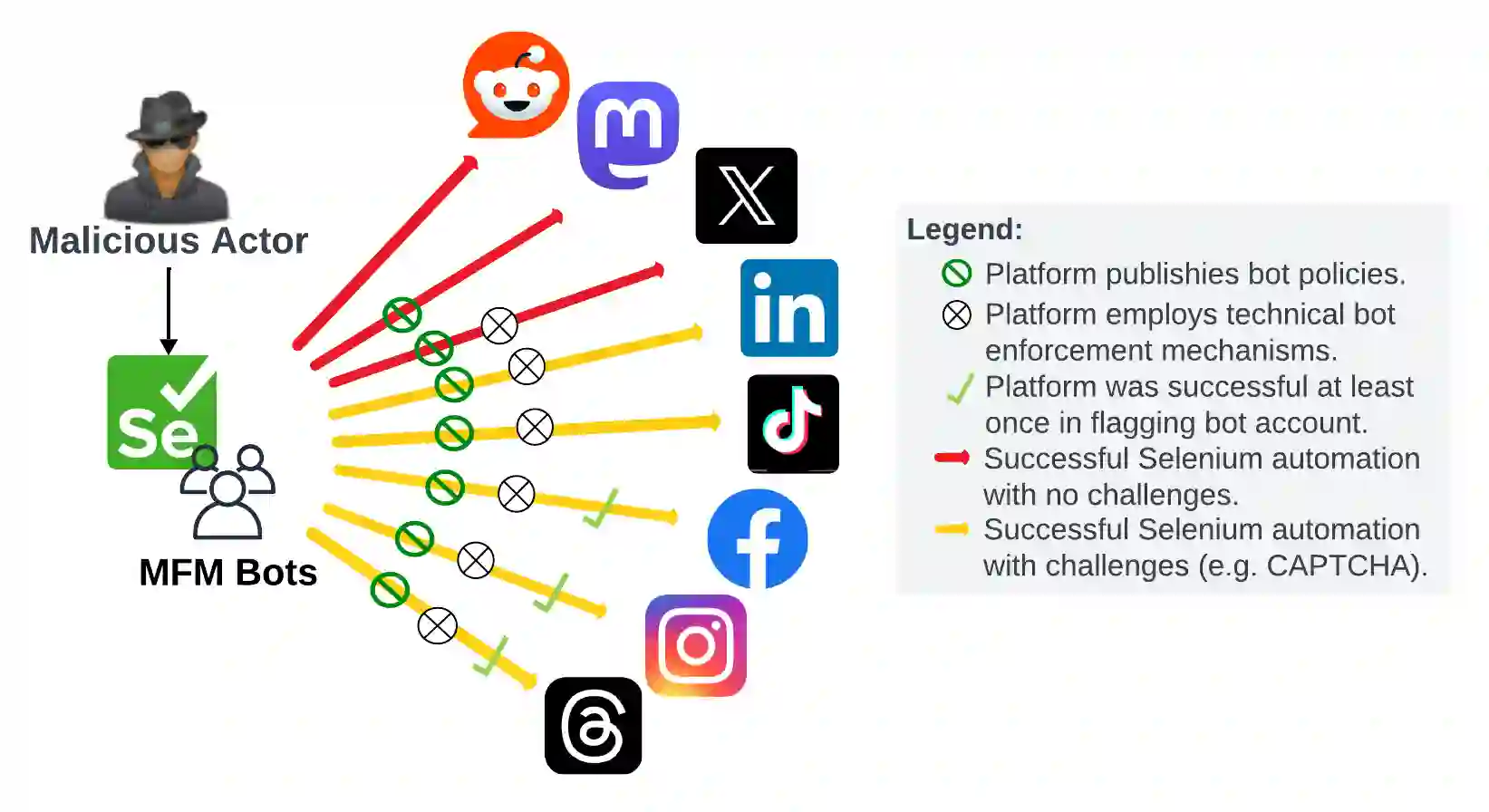

The emergence of Multimodal Foundation Models (MFMs) holds significant promise for transforming social media platforms. However, this advancement also raises substantial security and ethical concerns, because MFMs may help malicious actors exploit online users. We evaluate how effectively the security measures of prominent social media platforms prevent the deployment of MFM bots. We examined the bot and content policies of eight popular social media platforms: X (formerly Twitter), Instagram, Facebook, Threads, TikTok, Mastodon, Reddit, and LinkedIn. Using Selenium, we developed a web bot to test these platforms' policies on bot deployment and AI-generated content, along with the mechanisms that enforce them. Our findings reveal significant vulnerabilities in the platforms' current enforcement mechanisms: despite explicit policies against bot activity, none of the platforms detected or prevented the operation of our MFM bots. This reveals a critical gap in the security measures these platforms employ, one that malicious actors could exploit to disseminate misinformation, commit fraud, or manipulate users.

翻译:多模态基础模型(MFMs)的出现为社交媒体平台的变革带来了重大前景。然而,这一进展也引发了严重的安全与伦理问题,因为它可能为恶意行为者利用在线用户提供便利。本研究旨在评估主流社交媒体平台的安全协议在防范MFM机器人部署方面的有效性。我们审查了八个流行社交媒体平台的机器人及内容政策:X(原Twitter)、Instagram、Facebook、Threads、TikTok、Mastodon、Reddit和LinkedIn。利用Selenium,我们开发了一个网络机器人以测试机器人部署及AI生成内容政策及其执行机制。研究结果表明,这些平台当前的执行机制存在显著漏洞。尽管各平台均设有明确的机器人活动禁令,但所有平台均未能检测并阻止我们部署的MFM机器人运行。这一发现揭示了社交媒体平台现有安全措施的关键缺陷,凸显了恶意行为者可能利用这些弱点传播虚假信息、实施欺诈或操纵用户的风险。