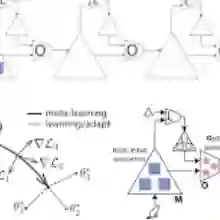

We study the problem of meta-learning several contextual stochastic bandit tasks by leveraging their concentration around a low-dimensional affine subspace, which we learn via online principal component analysis to reduce the expected regret over the encountered bandits. We propose and theoretically analyze two strategies that solve the problem: one based on the principle of optimism in the face of uncertainty and the other on Thompson sampling. Our framework is generic and includes previously proposed approaches as special cases. Empirically, our methods significantly reduce the regret on several bandit tasks.
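
To make the subspace idea concrete, the following is a minimal sketch of recovering a low-dimensional affine subspace from the parameter vectors of previously seen tasks. It is not the authors' algorithm: it assumes batch PCA via SVD instead of the online variant mentioned above, and the synthetic data, the dimensions `d`, `k`, `n_tasks`, and the helpers `fit_affine_subspace` and `project` are all hypothetical names chosen for illustration.

```python
import numpy as np


def fit_affine_subspace(task_params, k):
    """Batch PCA: return the mean and a (k, d) orthonormal basis of the
    rank-k affine subspace that best fits the rows of task_params."""
    mean = task_params.mean(axis=0)
    centered = task_params - mean
    # Rows of vt are principal directions, ordered by decreasing singular value.
    _, _, vt = np.linalg.svd(centered, full_matrices=False)
    return mean, vt[:k]


def project(theta, mean, basis):
    """Orthogonally project theta onto the affine subspace mean + span(basis)."""
    return mean + (theta - mean) @ basis.T @ basis


# Synthetic setup (assumption): task parameters lie near a k-dimensional
# affine subspace of R^d, perturbed by small isotropic noise.
rng = np.random.default_rng(0)
d, k, n_tasks = 10, 2, 200
true_basis = np.linalg.qr(rng.normal(size=(d, k)))[0].T  # (k, d), orthonormal rows
true_mean = rng.normal(size=d)
coeffs = rng.normal(size=(n_tasks, k))
noise = 0.01 * rng.normal(size=(n_tasks, d))
task_params = true_mean + coeffs @ true_basis + noise

mean, basis = fit_affine_subspace(task_params, k)

# A new task drawn from the same subspace should be close to its projection.
new_theta = true_mean + rng.normal(size=k) @ true_basis
residual = np.linalg.norm(new_theta - project(new_theta, mean, basis))
print(f"distance of new task to learned subspace: {residual:.4f}")
```

In a bandit setting, one plausible use of such an estimate is to shrink a new task's parameter estimate toward its projection onto the learned subspace, so that exploration concentrates on the `k` directions that vary across tasks. How the optimistic and Thompson sampling strategies actually use the subspace is not specified in the abstract.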
