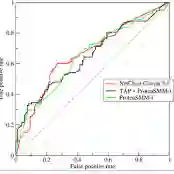

Hallucinations in Large Language Models (LLMs), that is, generations that are plausible but factually unfaithful, remain a critical barrier to high-stakes deployment. Current detection methods typically rely on computationally expensive external retrieval loops or on opaque black-box LLM judges with 70B+ parameters. In this work, we introduce [Model Name], a hybrid detection framework that combines neuroscience-inspired signal design with supervised machine learning. We extract interpretable signals grounded in Predictive Coding (quantifying surprise against the model's internal priors) and the Information Bottleneck (measuring how much signal is retained under perturbation). Through systematic ablation, we identify three key enhancements: Entity-Focused Uptake (concentrating on high-value tokens), Context Adherence (measuring grounding strength), and Falsifiability Score (detecting confident but contradictory claims). On HaluBench (n=200, perfectly balanced), our theory-guided baseline achieves 0.8017 AUROC. BASE supervised models reach 0.8274 AUROC, and IMPROVED features raise performance to 0.8669 AUROC (an absolute gain of 0.0395), with consistent improvements across architectures. This competitive performance is achieved with 75x fewer training samples than Lynx (200 vs. 15,000), 1000x faster inference (5 ms vs. 5 s), and full interpretability. Crucially, we also report a negative result: the Rationalization signal fails to distinguish hallucinated from faithful responses, suggesting that LLMs generate coherent reasoning even for false premises ("Sycophancy"). This work demonstrates that domain knowledge encoded in the signal architecture yields better data efficiency than scaling LLM judges, achieving strong performance with lightweight (fewer than 1M parameters), explainable models suitable for production deployment.
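The abstract does not specify how the signals are computed. As a hedged illustration only, the sketch assumes the Predictive Coding "surprise" signal can be approximated as mean token surprisal (-log p) under the generating model, and that Entity-Focused Uptake restricts that average to entity tokens. The function names, the entity mask, and the toy probabilities are all hypothetical and are not [Model Name]'s implementation. The sketch also computes AUROC, the metric reported above, using its Mann-Whitney formulation.

```python
import math
from typing import Sequence


def surprisal(token_probs: Sequence[float]) -> list[float]:
    """Per-token surprisal -log p(token | prefix); assumes each p is in (0, 1]."""
    return [-math.log(p) for p in token_probs]


def mean_surprise(token_probs: Sequence[float]) -> float:
    """Hypothetical predictive-coding feature: average surprisal over all tokens."""
    s = surprisal(token_probs)
    return sum(s) / len(s)


def entity_focused_surprise(token_probs: Sequence[float],
                            is_entity: Sequence[bool]) -> float:
    """Hypothetical entity-focused variant: average surprisal over entity tokens only.

    Falls back to the all-token mean when the response has no entity tokens.
    """
    picked = [p for p, e in zip(token_probs, is_entity) if e]
    return mean_surprise(picked if picked else token_probs)


def auroc(scores: Sequence[float], labels: Sequence[int]) -> float:
    """AUROC as P(random positive scores higher than random negative).

    Ties count as half a win (Mann-Whitney U). O(P*N), fine for n=200.
    """
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative example")
    wins = 0.0
    for sp in pos:
        for sn in neg:
            if sp > sn:
                wins += 1.0
            elif sp == sn:
                wins += 0.5
    return wins / (len(pos) * len(neg))


# Toy, made-up data (not from HaluBench): (token probs, entity mask, label),
# where label 1 = hallucinated and 0 = faithful.
TOY = [
    ([0.9, 0.05, 0.8], [False, True, False], 1),  # improbable entity token
    ([0.9, 0.10, 0.7], [False, True, False], 1),
    ([0.6, 0.85, 0.9], [False, True, False], 0),
    ([0.02, 0.95, 0.9], [False, True, False], 0),  # odd wording, confident entity
]
labels = [y for _, _, y in TOY]
print(auroc([mean_surprise(p) for p, _, _ in TOY], labels))  # → 0.5
print(auroc([entity_focused_surprise(p, m) for p, m, _ in TOY], labels))  # → 1.0
```

On this toy data, averaging over all tokens lets one unusual non-entity token in a faithful answer mask the signal, while restricting the average to entity tokens separates the two classes. This mirrors the intuition behind concentrating on high-value tokens, but four invented examples say nothing about real-world performance.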

翻译:大语言模型中的幻觉——看似合理但事实上不忠实的生成内容——仍然是高风险部署的关键障碍。当前检测方法通常依赖于计算成本高昂的外部检索循环或需要700亿以上参数的不透明黑盒LLM评判器。本工作中,我们提出了[模型名称],一种融合神经科学启发的信号设计与监督机器学习的混合检测框架。我们提取基于预测编码(量化相对于内部先验的惊奇度)和信息瓶颈(测量扰动下的信号保留度)的可解释信号。通过系统消融实验,我们证明了三个关键增强:实体聚焦吸收(集中于高价值标记)、上下文依从性(测量接地强度)和可证伪性评分(检测自信但矛盾的声称)。在HaluBench(n=200,完全平衡)上评估,我们的理论指导基线达到0.8017 AUROC。基础监督模型达到0.8274 AUROC,而改进特征将性能提升至0.8669 AUROC(增益4.95%),展示了跨架构的一致性改进。这一竞争性性能是在使用比Lynx少75倍训练数据(200 vs 15,000样本)、推理速度快1000倍(5ms vs 5s)且保持完全可解释性的情况下实现的。关键的是,我们报告了一个负面结果:合理化信号无法区分幻觉,表明LLM会为错误前提生成连贯推理(“谄媚性”)。本工作证明,编码在信号架构中的领域知识相比扩展LLM评判器提供了更优的数据效率,通过适用于生产部署的轻量级(少于100万参数)、可解释模型实现了强大性能。