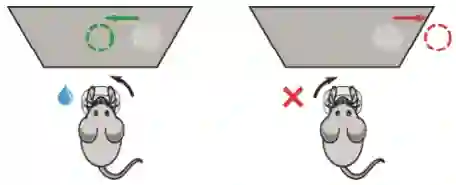

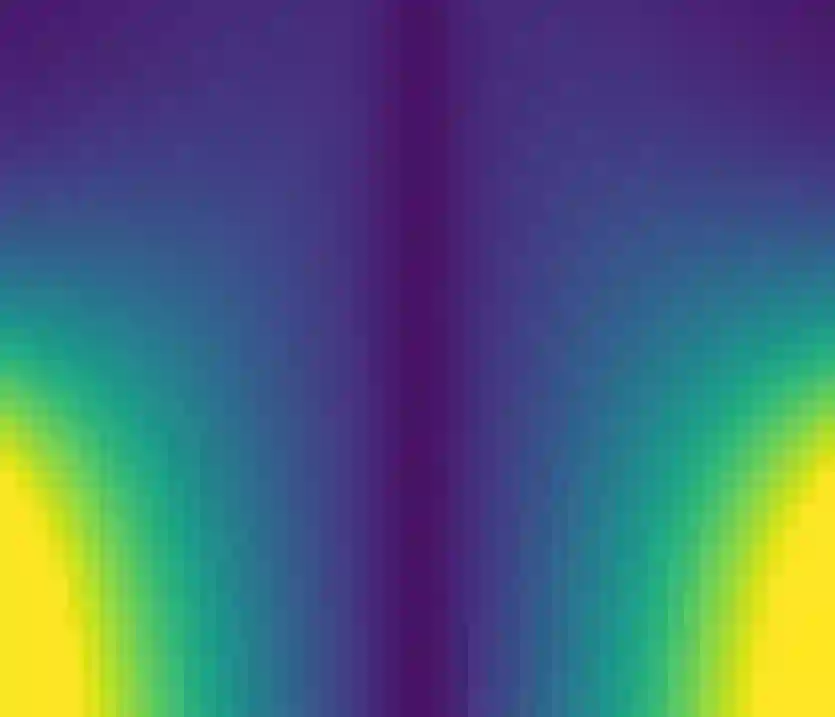

Understanding how animals learn is a central challenge in neuroscience, with growing relevance to the development of animal- or human-aligned artificial intelligence. However, existing approaches tend to assume fixed parametric forms for the learning rule (e.g., Q-learning, policy gradient), which may not accurately describe the complex forms of learning employed by animals in realistic settings. Here we address this gap by developing a framework to infer learning rules directly from behavioral data collected during de novo task learning. We assume that animals follow a decision policy parameterized by a generalized linear model (GLM), and we model their learning rule -- the mapping from task covariates to per-trial weight updates -- using a deep neural network (DNN). This formulation allows flexible, data-driven inference of learning rules while keeping the decision policy itself interpretable. To capture more complex learning dynamics, we introduce a recurrent neural network (RNN) variant that relaxes the Markovian assumption that learning depends solely on covariates of the current trial, allowing for learning rules that integrate information over multiple trials. Simulations demonstrate that the framework can recover ground-truth learning rules. We applied our DNN- and RNN-based methods to a large behavioral dataset from mice learning to perform a sensory decision-making task and found that they outperformed traditional reinforcement learning (RL) rules at predicting the learning trajectories of held-out mice. The inferred learning rules exhibited reward-history-dependent learning dynamics, with larger updates following sequences of rewarded trials. Overall, these methods provide a flexible framework for inferring learning rules from behavioral data in de novo learning tasks, setting the stage for improved animal training protocols and the development of behavioral digital twins.
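To make the model structure concrete, the following is a minimal NumPy sketch of the forward (generative) side of such a model: a GLM decision policy whose weights are updated on every trial by a small neural network. It is an illustration under stated assumptions, not the authors' implementation. The two-choice task, the covariate vector `[stimulus, bias]`, the choice of network inputs (current weights, covariates, choice, reward), the layer sizes, and all function names are hypothetical. The network parameters here are random rather than fitted; in the framework described above they would be inferred from behavioral data.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def init_mlp(rng, in_dim, hidden_dim, out_dim, scale=0.1):
    # Random (unfitted) parameters for a one-hidden-layer network.
    return {
        "W1": scale * rng.standard_normal((hidden_dim, in_dim)),
        "b1": np.zeros(hidden_dim),
        "W2": scale * rng.standard_normal((out_dim, hidden_dim)),
        "b2": np.zeros(out_dim),
    }

def learning_rule(params, w, x, choice, reward):
    # Assumed inputs: current GLM weights, trial covariates, choice, reward.
    # Output: the per-trial update to the GLM weights.
    inp = np.concatenate([w, x, [choice, reward]])
    h = np.tanh(params["W1"] @ inp + params["b1"])
    return params["W2"] @ h + params["b2"]

def simulate(params, w0, covariates, rng):
    # Roll out one learning trajectory on a hypothetical two-choice task.
    w = w0.copy()
    weights, choices, rewards = [], [], []
    for x in covariates:
        p_right = sigmoid(w @ x)               # GLM (logistic) decision policy
        choice = float(rng.random() < p_right)
        correct = float(x[0] > 0)              # assumed rule: stimulus sign sets the correct side
        reward = float(choice == correct)
        weights.append(w.copy())
        choices.append(choice)
        rewards.append(reward)
        w = w + learning_rule(params, w, x, choice, reward)  # Markovian update
    return np.array(weights), np.array(choices), np.array(rewards)

rng = np.random.default_rng(0)
n_trials = 200
# Covariates per trial: [signed stimulus strength, constant bias term].
covariates = np.column_stack([rng.uniform(-1, 1, n_trials), np.ones(n_trials)])
params = init_mlp(rng, in_dim=6, hidden_dim=16, out_dim=2)
weights, choices, rewards = simulate(params, np.zeros(2), covariates, rng)
print(weights.shape, choices.mean(), rewards.mean())
```

Because the update on each trial depends only on that trial's inputs, this sketch corresponds to the Markovian case. The RNN variant described above would instead carry a hidden state across trials so that updates can depend on reward history. Fitting would amount to adjusting `params` to maximize the likelihood of observed choices under the GLM policy, which is omitted here.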
