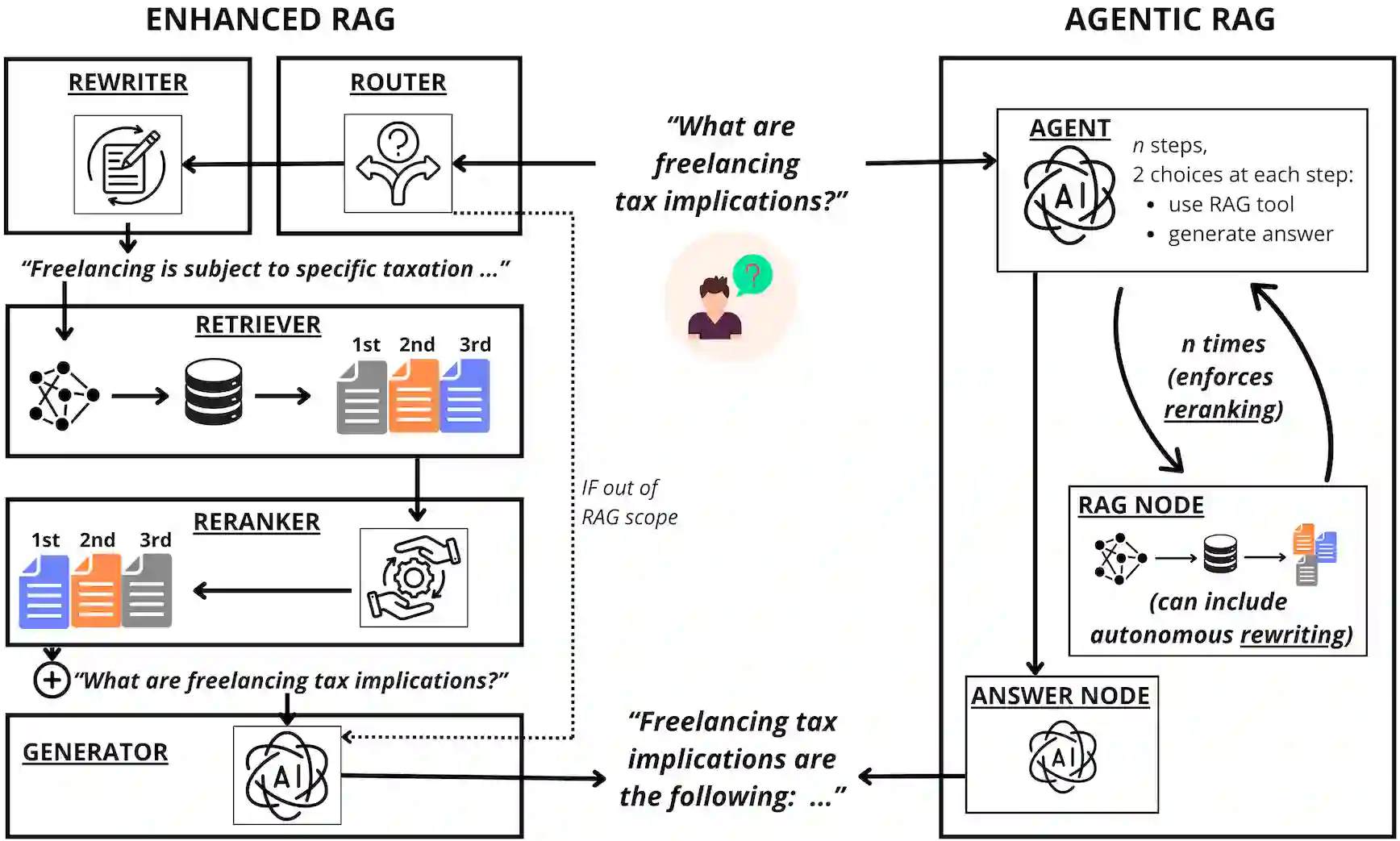

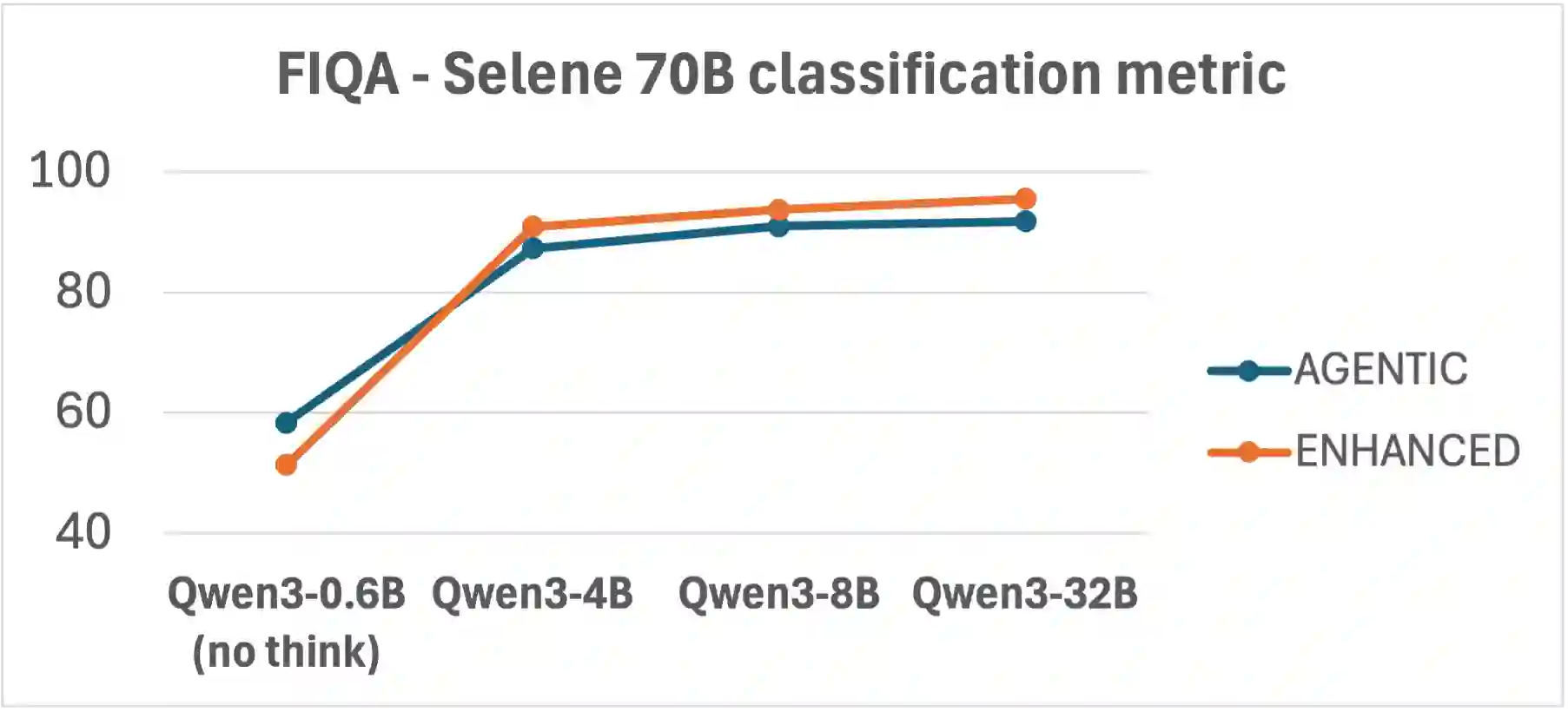

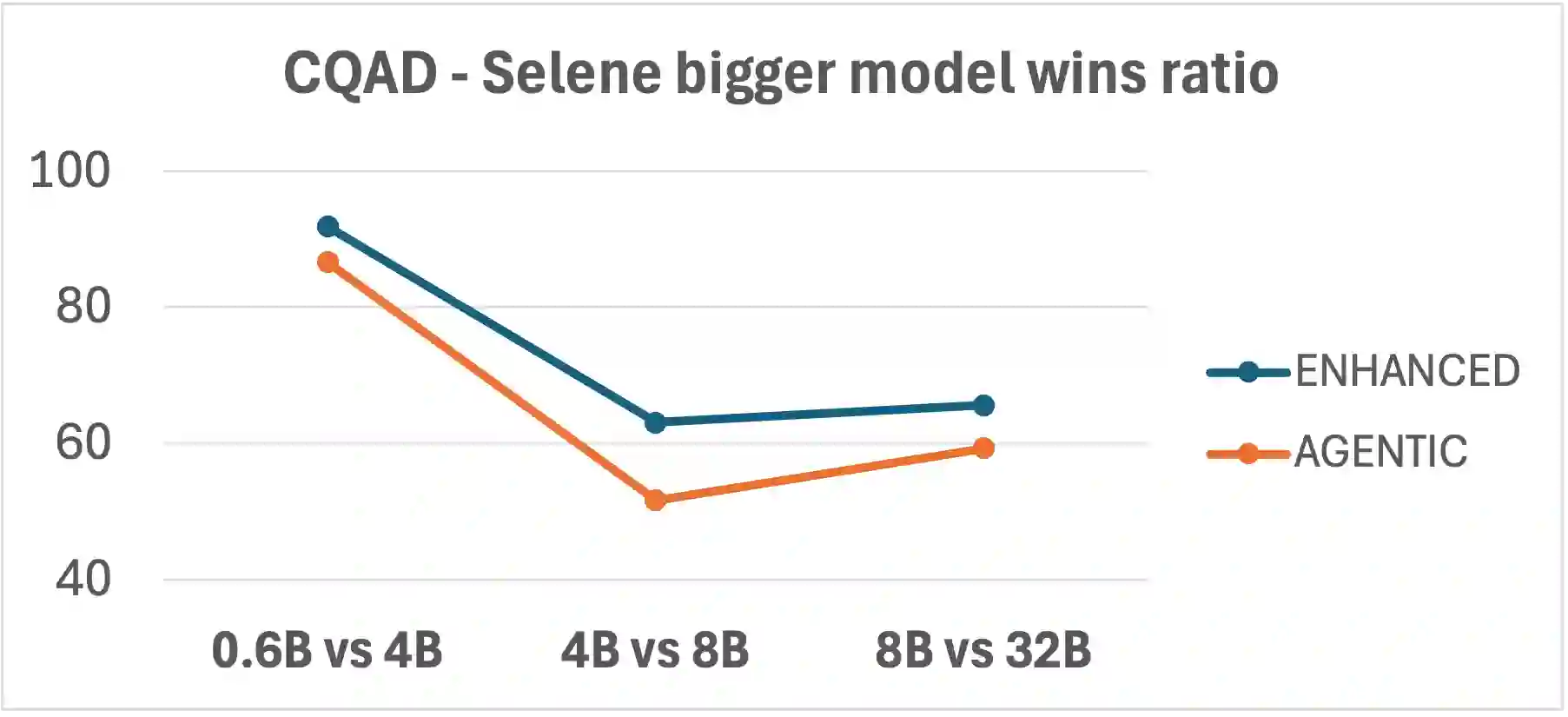

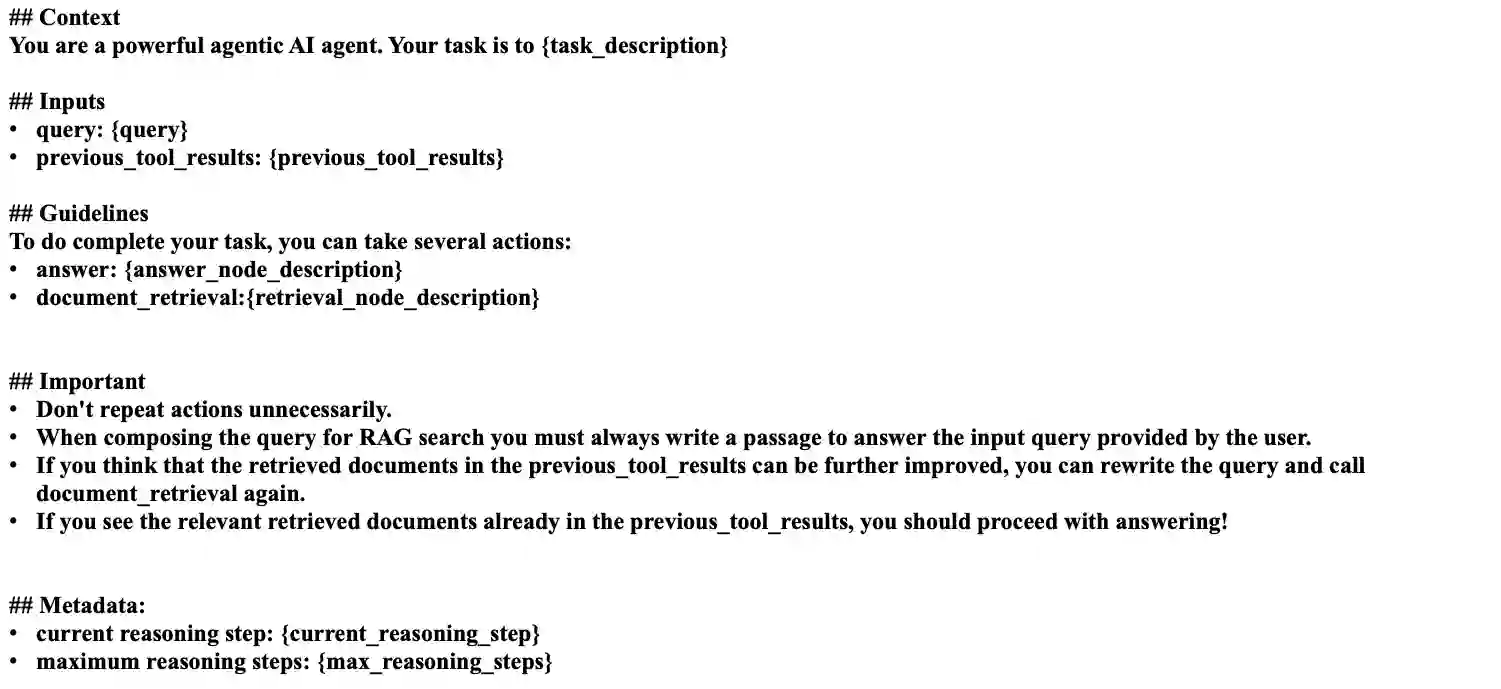

Retrieval-Augmented Generation (RAG) systems are usually defined by the combination of a generator and a retrieval component that extracts textual context from a knowledge base to answer user queries. However, such basic implementations exhibit several limitations, including noisy or suboptimal retrieval, misuse of retrieval for out-of-scope queries, weak query-document matching, and variability or cost associated with the generator. These shortcomings have motivated the development of "Enhanced" RAG, where dedicated modules are introduced to address specific weaknesses in the workflow. More recently, the growing self-reflective capabilities of Large Language Models (LLMs) have enabled a new paradigm, which we refer to as "Agentic" RAG. In this approach, the LLM orchestrates the entire process-deciding which actions to perform, when to perform them, and whether to iterate-thereby reducing reliance on fixed, manually engineered modules. Despite the rapid adoption of both paradigms, it remains unclear which approach is preferable under which conditions. In this work, we conduct an extensive, empirically driven evaluation of Enhanced and Agentic RAG across multiple scenarios and dimensions. Our results provide practical insights into the trade-offs between the two paradigms, offering guidance on selecting the most effective RAG design for real-world applications, considering both costs and performance.

翻译:检索增强生成(RAG)系统通常由生成器与检索组件结合定义,后者从知识库中提取文本上下文以回答用户查询。然而,此类基础实现存在若干局限,包括检索结果噪声大或次优、对超出范围查询的检索误用、查询与文档匹配能力弱,以及生成器本身的波动性或成本问题。这些缺陷推动了"增强型"RAG的发展,即通过引入专用模块来应对工作流中的特定弱点。近年来,大型语言模型(LLMs)日益增强的自反思能力催生了一种新范式,我们称之为"智能体式"RAG。该方法由LLM统筹整个流程——决定执行何种操作、何时执行以及是否迭代——从而减少对固定人工设计模块的依赖。尽管这两种范式已迅速得到应用,但在何种条件下何种方法更具优势仍不明确。本研究通过多场景、多维度的实证评估,对增强型与智能体式RAG进行了全面比较。实验结果揭示了两种范式间的权衡关系,为实际应用中综合考虑成本与性能选择最优RAG设计方案提供了实践指导。