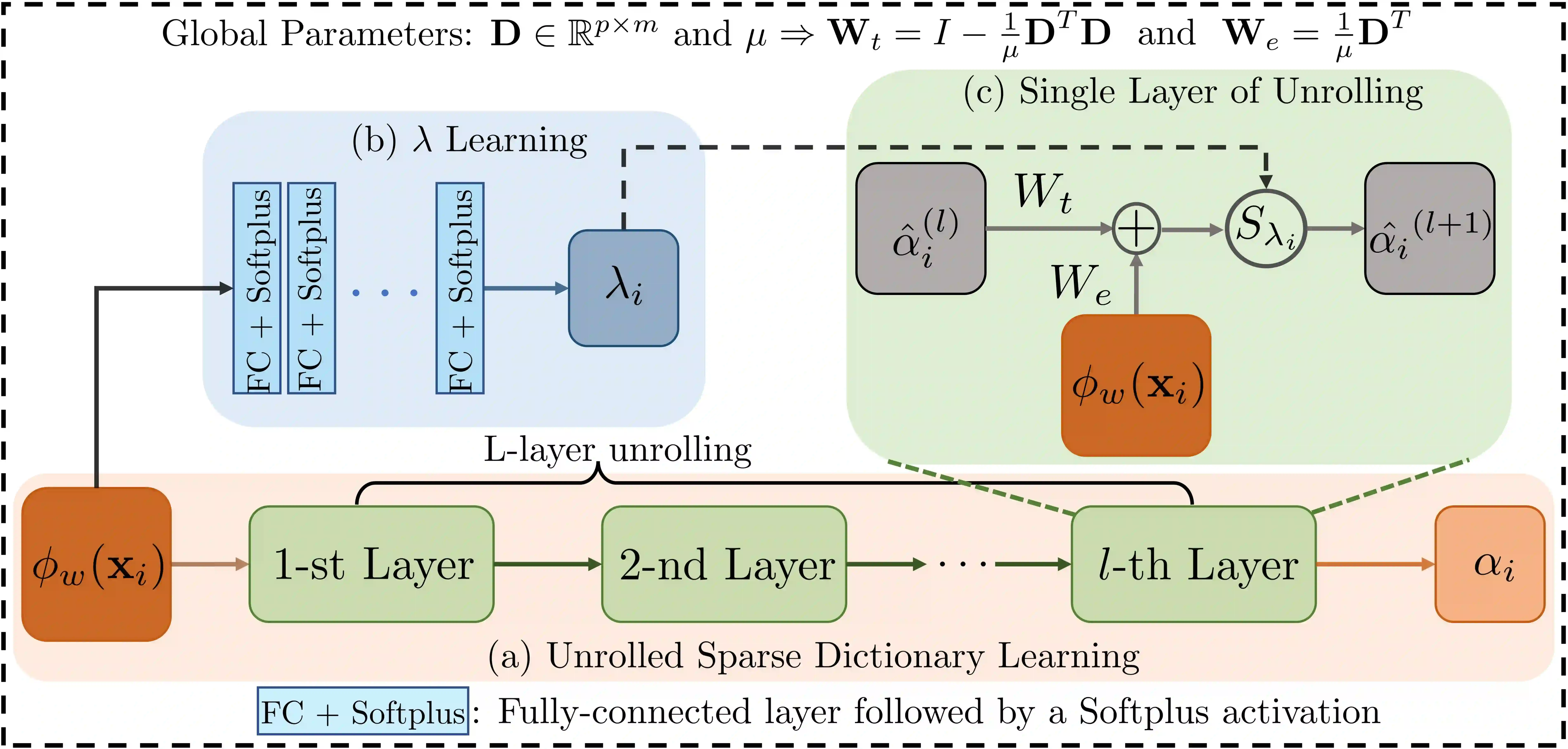

Multiple Instance Learning (MIL) has been widely used in weakly supervised whole slide image (WSI) classification. Typical MIL methods include a feature embedding part, which embeds the instances into features via a pre-trained feature extractor, and an MIL aggregator that combines instance embeddings into predictions. Most efforts have focused on improving these two parts separately: refining the feature embeddings through self-supervised pre-training, and modeling the correlations between instances. In this paper, we propose a sparsely coded MIL (SC-MIL) method that addresses both aspects at the same time by leveraging sparse dictionary learning. Sparse dictionary learning captures the similarities of instances by expressing them as sparse linear combinations of atoms in an over-complete dictionary. In addition, imposing sparsity improves instance feature embeddings by suppressing irrelevant instances while retaining the most relevant ones. To make the conventional sparse coding algorithm compatible with deep learning, we unroll it into a sparsely coded (SC) module using deep unrolling. The proposed SC module can be incorporated into any existing MIL framework in a plug-and-play manner with an acceptable computational cost. Experimental results on multiple datasets demonstrate that the proposed SC module substantially boosts the performance of state-of-the-art MIL methods. The code is available at \href{https://github.com/sotiraslab/SCMIL.git}{https://github.com/sotiraslab/SCMIL.git}.
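
To make the sparse coding step concrete, the following is a minimal NumPy sketch of the classical ISTA iteration that deep unrolling typically starts from. It is an illustration under stated assumptions, not the authors' SC module: the function names (`soft_threshold`, `ista_sparse_codes`), the random dictionary, the penalty weight `lam`, and the number of steps are all hypothetical choices made for this example. Each instance embedding $x$ is approximated as $D a$ with a sparse code $a$ by minimizing $\tfrac{1}{2}\|x - D a\|_2^2 + \lambda \|a\|_1$.

```python
import numpy as np

def soft_threshold(z, tau):
    """Element-wise shrinkage: the proximal operator of tau * ||.||_1."""
    return np.sign(z) * np.maximum(np.abs(z) - tau, 0.0)

def ista_sparse_codes(X, D, lam=0.1, n_steps=50):
    """Sparse-code each row of X over the dictionary D with ISTA.

    X: (n_instances, d) instance embeddings (hypothetical input).
    D: (d, n_atoms) dictionary, over-complete when n_atoms > d.
    Returns A: (n_instances, n_atoms) such that X is approximately A @ D.T.
    """
    # Step size 1/L, where L = ||D||_2^2 is the Lipschitz constant
    # of the gradient of the quadratic data term.
    step = 1.0 / (np.linalg.norm(D, ord=2) ** 2)
    A = np.zeros((X.shape[0], D.shape[1]))
    for _ in range(n_steps):
        grad = (A @ D.T - X) @ D           # gradient of 0.5 * ||X - A D^T||_F^2
        A = soft_threshold(A - step * grad, step * lam)
    return A

# Toy data (assumption): 8 instances, each built from 3 atoms of a
# random 16-dimensional dictionary with 64 unit-norm atoms.
rng = np.random.default_rng(0)
d, n_atoms, n_instances = 16, 64, 8
D = rng.standard_normal((d, n_atoms))
D /= np.linalg.norm(D, axis=0, keepdims=True)

A_true = np.zeros((n_instances, n_atoms))
for i in range(n_instances):
    idx = rng.choice(n_atoms, size=3, replace=False)
    A_true[i, idx] = rng.standard_normal(3)
X = A_true @ D.T

A = ista_sparse_codes(X, D, lam=0.05, n_steps=200)
X_hat = A @ D.T  # refined embeddings reconstructed from the sparse codes
```

In a deep-unrolled variant, each loop iteration would become one network layer with a fixed, small number of steps, and quantities such as the dictionary, step size, and threshold would be learned end to end instead of being fixed as they are here. How the resulting codes are passed on to the MIL aggregator is specific to the method and is not shown in this sketch.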
