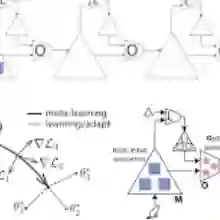

Multi-objective optimization addresses problems with competing objectives, often with only black-box access to the problem and a limited measurement budget. In many applications, historical data from related optimization tasks is available, which creates an opportunity for meta-learning to accelerate the optimization. Bayesian optimization, a promising technique for black-box optimization, has been extended to meta-learning and to multi-objective optimization independently, but methods that address both settings simultaneously, that is, meta-learned priors for multi-objective Bayesian optimization, remain largely unexplored. We propose SMOG, a scalable and modular meta-learning model based on a multi-output Gaussian process that explicitly learns correlations between objectives. SMOG builds a structured joint Gaussian process prior across meta-tasks and target tasks and, after conditioning on metadata, yields a closed-form target-task prior augmented by a flexible residual multi-output kernel. This construction propagates metadata uncertainty into the target surrogate in a principled way. SMOG supports hierarchical, parallel training: meta-task Gaussian processes are fit once and then cached, achieving linear scaling with the number of meta-tasks. The resulting surrogate integrates seamlessly with standard multi-objective Bayesian optimization acquisition functions.
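To make the idea of a target-task prior obtained by conditioning on metadata more concrete, the following is a minimal NumPy sketch under strong simplifying assumptions. It is not the SMOG model: it assumes a single meta-task, independent meta-task Gaussian processes per objective, a scalar transfer weight, and a residual kernel of intrinsic-coregionalization form (an objective covariance matrix times an RBF kernel). The names `rbf`, `gp_posterior`, `target_prior`, `weight`, and `coreg` are hypothetical and chosen only for illustration.

```python
import numpy as np

def rbf(a, b, lengthscale=0.3):
    """Squared-exponential kernel between 1-D input arrays a and b."""
    d = a[:, None] - b[None, :]
    return np.exp(-0.5 * (d / lengthscale) ** 2)

def gp_posterior(x_train, y_train, x_query, noise=1e-2):
    """Posterior mean and covariance of a zero-mean GP at x_query."""
    k_tt = rbf(x_train, x_train) + noise * np.eye(len(x_train))
    k_qt = rbf(x_query, x_train)
    chol = np.linalg.cholesky(k_tt)
    alpha = np.linalg.solve(chol.T, np.linalg.solve(chol, y_train))
    v = np.linalg.solve(chol, k_qt.T)
    mean = k_qt @ alpha
    cov = rbf(x_query, x_query) - v.T @ v
    return mean, cov

def target_prior(x_meta, y_meta, x_query, weight, coreg):
    """Assumed target-task prior over all objectives at x_query.

    y_meta has shape (n_meta, n_obj); coreg is an (n_obj, n_obj) positive
    semi-definite matrix. Outputs are stacked objective by objective.
    """
    n_obj = y_meta.shape[1]
    n_q = len(x_query)
    mean = np.zeros(n_obj * n_q)
    # Residual multi-output kernel (assumption): coreg kron RBF couples objectives.
    cov = np.kron(coreg, rbf(x_query, x_query))
    for j in range(n_obj):
        m_j, c_j = gp_posterior(x_meta, y_meta[:, j], x_query)
        block = slice(j * n_q, (j + 1) * n_q)
        mean[block] = weight * m_j
        # The meta-task posterior covariance carries metadata uncertainty
        # into the target prior, scaled by the squared transfer weight.
        cov[block, block] += weight ** 2 * c_j
    return mean, cov

# Toy metadata: two objectives observed on one meta-task.
x_meta = np.linspace(0.0, 1.0, 15)
y_meta = np.stack([np.sin(6 * x_meta), np.cos(6 * x_meta)], axis=1)
x_query = np.linspace(0.0, 1.0, 5)
coreg = np.array([[0.2, -0.1], [-0.1, 0.2]])
mean, cov = target_prior(x_meta, y_meta, x_query, weight=0.8, coreg=coreg)
```

In this sketch the meta-task posteriors depend only on the metadata, so they could be computed once and reused for every target-task update, which mirrors the fit-once-and-cache idea described above. The resulting `mean` and `cov` define a joint Gaussian prior over both objectives that would then be conditioned on target-task observations.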
