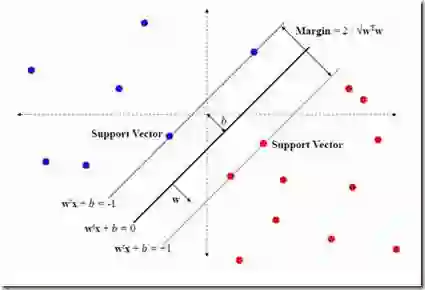

In supervised learning with distributional inputs in the two-stage sampling setup, which is relevant to applications such as learning-based medical screening and causal learning, the inputs (probability distributions) are not directly accessible in the learning phase; only samples drawn from them are available. This problem is particularly amenable to kernel-based learning methods, in which the distributions or samples are first embedded into a Hilbert space, often using kernel mean embeddings (KMEs), and a standard kernel method such as a support vector machine (SVM) is then applied with a kernel defined on the embedding Hilbert space. In this work, we contribute to the theoretical analysis of this approach, with a particular focus on classification with distributional inputs using SVMs. We establish a new oracle inequality and derive consistency and learning rate results. Furthermore, for SVMs using the hinge loss and Gaussian kernels, we formulate a novel variant of an established noise assumption from the binary classification literature, under which we can establish learning rates. Finally, some of our technical tools, such as a new feature space for Gaussian kernels on Hilbert spaces, are of independent interest.
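To make the two-stage pipeline concrete, the following is a minimal sketch of how a Gram matrix for a Gaussian kernel on empirical kernel mean embeddings could be computed from samples. It is an illustration under stated assumptions, not the construction analyzed in this work: samples are scalars for brevity, the base kernel is Gaussian, and the function names (`base_kernel`, `kme_inner`, `distribution_gram`) and bandwidths (`sigma`, `gamma`) are hypothetical choices introduced for this example.

```python
import math
import random


def base_kernel(x, y, sigma=1.0):
    """Gaussian base kernel on scalar samples (assumed for this sketch)."""
    return math.exp(-((x - y) ** 2) / (2.0 * sigma ** 2))


def kme_inner(xs, ys, sigma=1.0):
    """Inner product of two empirical kernel mean embeddings.

    The empirical KME of a bag is the average of the base-kernel feature
    maps of its samples, so the inner product is the mean of all pairwise
    base-kernel values.
    """
    total = sum(base_kernel(x, y, sigma) for x in xs for y in ys)
    return total / (len(xs) * len(ys))


def distribution_gram(bags, sigma=1.0, gamma=1.0):
    """Gram matrix of a Gaussian kernel on the embedding Hilbert space.

    Entry (i, j) is exp(-||mu_i - mu_j||^2 / (2 * gamma^2)), where mu_i is
    the empirical KME of bag i. This parametrization is an assumption.
    """
    n = len(bags)
    inner = [[kme_inner(a, b, sigma) for b in bags] for a in bags]
    gram = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(n):
            # Squared RKHS distance between embeddings; clip tiny negatives
            # that can arise from floating-point rounding.
            sq_dist = max(inner[i][i] - 2.0 * inner[i][j] + inner[j][j], 0.0)
            gram[i][j] = math.exp(-sq_dist / (2.0 * gamma ** 2))
    return gram


# Synthetic two-stage sample: each input distribution is seen only through a bag.
rng = random.Random(0)
bags = [[rng.gauss(mu, 1.0) for _ in range(40)] for mu in (0.0, 0.0, 3.0, 3.0)]
labels = [-1, -1, 1, 1]
gram = distribution_gram(bags)
```

The resulting `gram` matrix, together with `labels`, could then be handed to any SVM solver that accepts a precomputed kernel, for example scikit-learn's `SVC(kernel="precomputed")`.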
