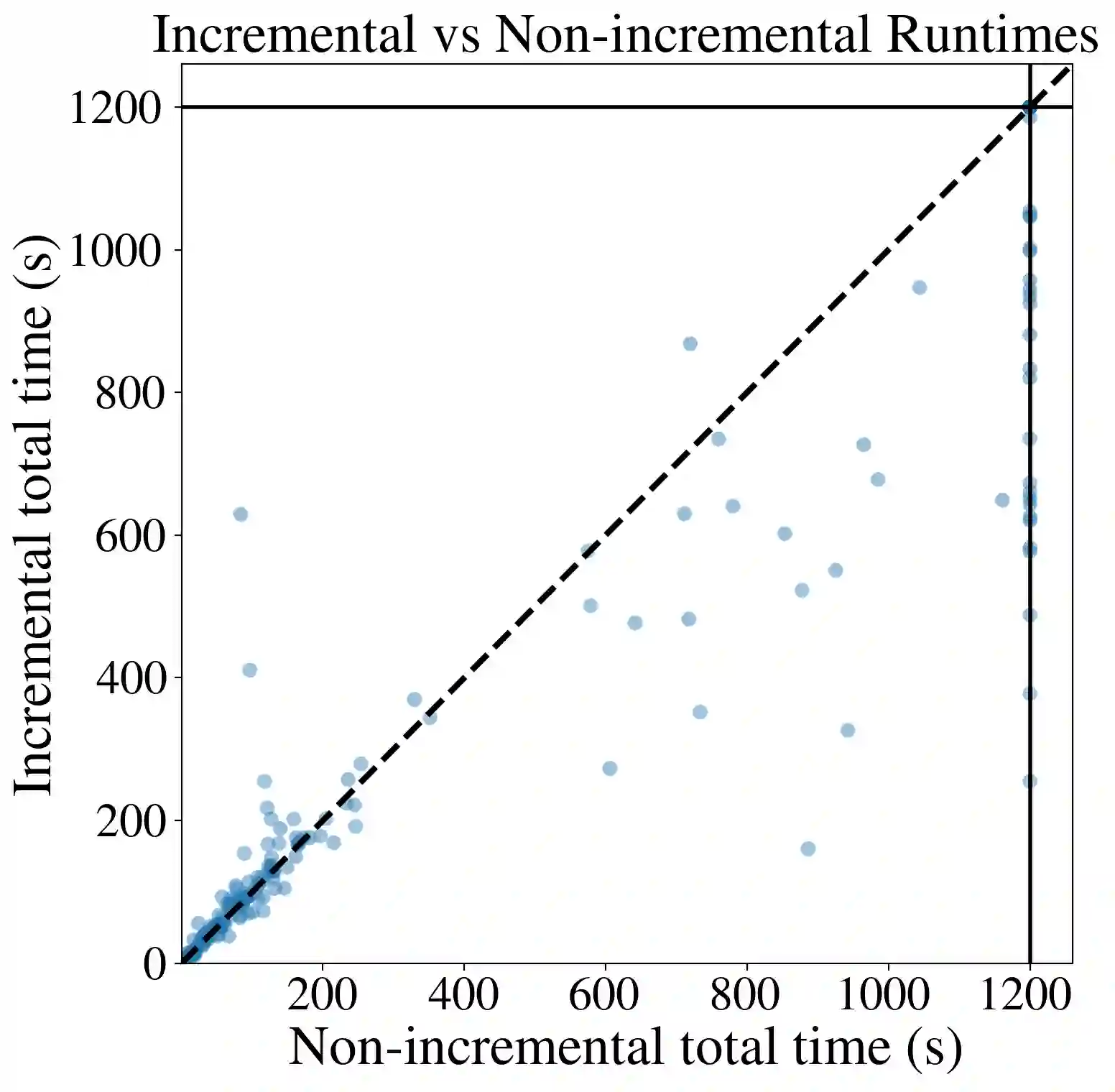

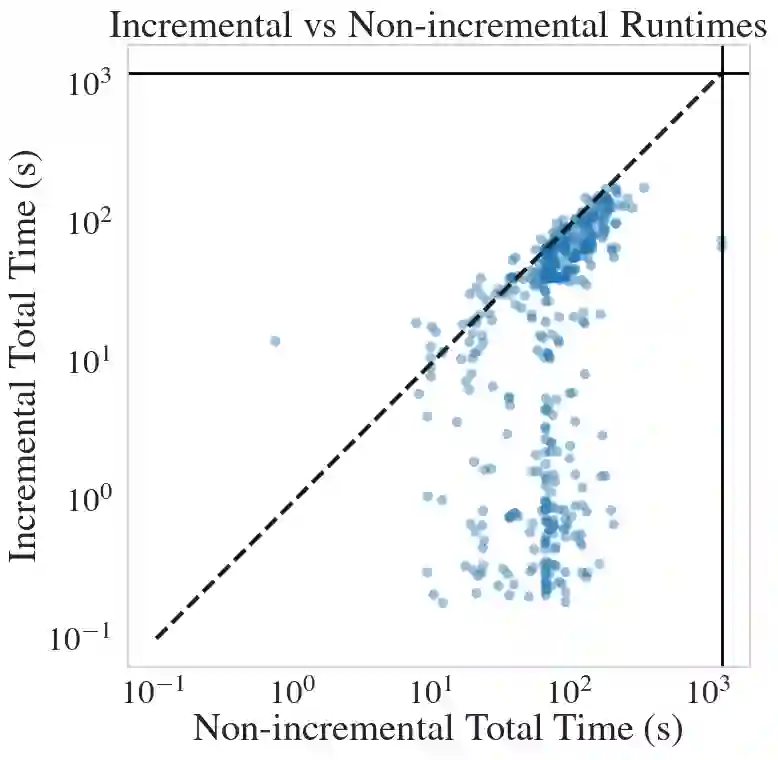

Neural network verification is often used as a core component within larger analysis procedures, which generate sequences of closely related verification queries over the same network. In existing neural network verifiers, each query is typically solved independently, and information learned during previous runs is discarded, leading to repeated exploration of the same infeasible regions of the search space. In this work, we aim to expedite verification by reducing this redundancy. We propose an incremental verification technique that reuses learned conflicts across related verification queries. The technique can be added on top of any branch-and-bound-based neural network verifier. During verification, the verifier records conflicts corresponding to learned infeasible combinations of activation phases, and retains them across runs. We formalize a refinement relation between verification queries and show that conflicts learned for a query remain valid under refinement, enabling sound conflict inheritance. Inherited conflicts are handled using a SAT solver to perform consistency checks and propagation, allowing infeasible subproblems to be detected and pruned early during search. We implement the proposed technique in the Marabou verifier and evaluate it on three verification tasks: local robustness radius determination, verification with input splitting, and minimal sufficient feature set extraction. Our experiments show that incremental conflict reuse reduces verification effort and yields speedups of up to $1.9\times$ over a non-incremental baseline.

翻译:神经网络验证常作为大型分析流程的核心组件,用于对同一网络生成一系列密切相关的验证查询。现有神经网络验证器通常独立求解每个查询,丢弃先前运行中获得的信息,导致搜索空间中相同不可行区域的重复探索。本研究旨在通过减少此类冗余来加速验证过程。我们提出一种增量验证技术,可在相关验证查询间复用已学习的冲突。该技术可集成于任何基于分支定界的神经网络验证器之上。在验证过程中,验证器记录与学习到的激活相位不可行组合相对应的冲突,并在多次运行间保留这些信息。我们形式化了验证查询间的精化关系,证明已学习冲突在精化条件下保持有效性,从而实现可靠的冲突继承。继承的冲突通过SAT求解器进行一致性检查与传播,使得不可行子问题能在搜索早期被检测并剪枝。我们在Marabou验证器中实现了该技术,并在三个验证任务中评估其性能:局部鲁棒性半径确定、输入分割验证及最小充分特征集提取。实验表明,增量式冲突复用能有效降低验证开销,相比非增量基线最高可获得$1.9\times$的加速比。