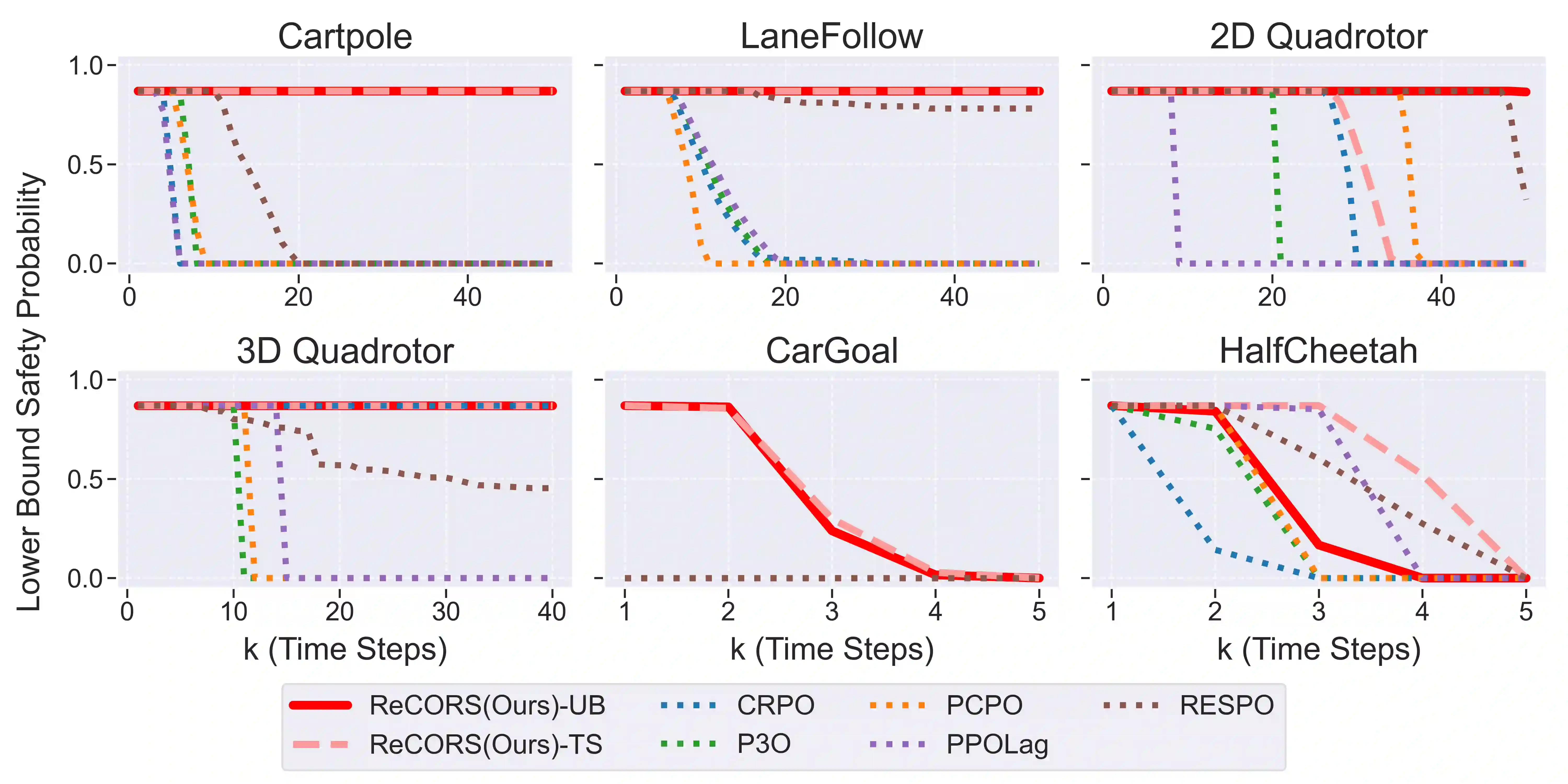

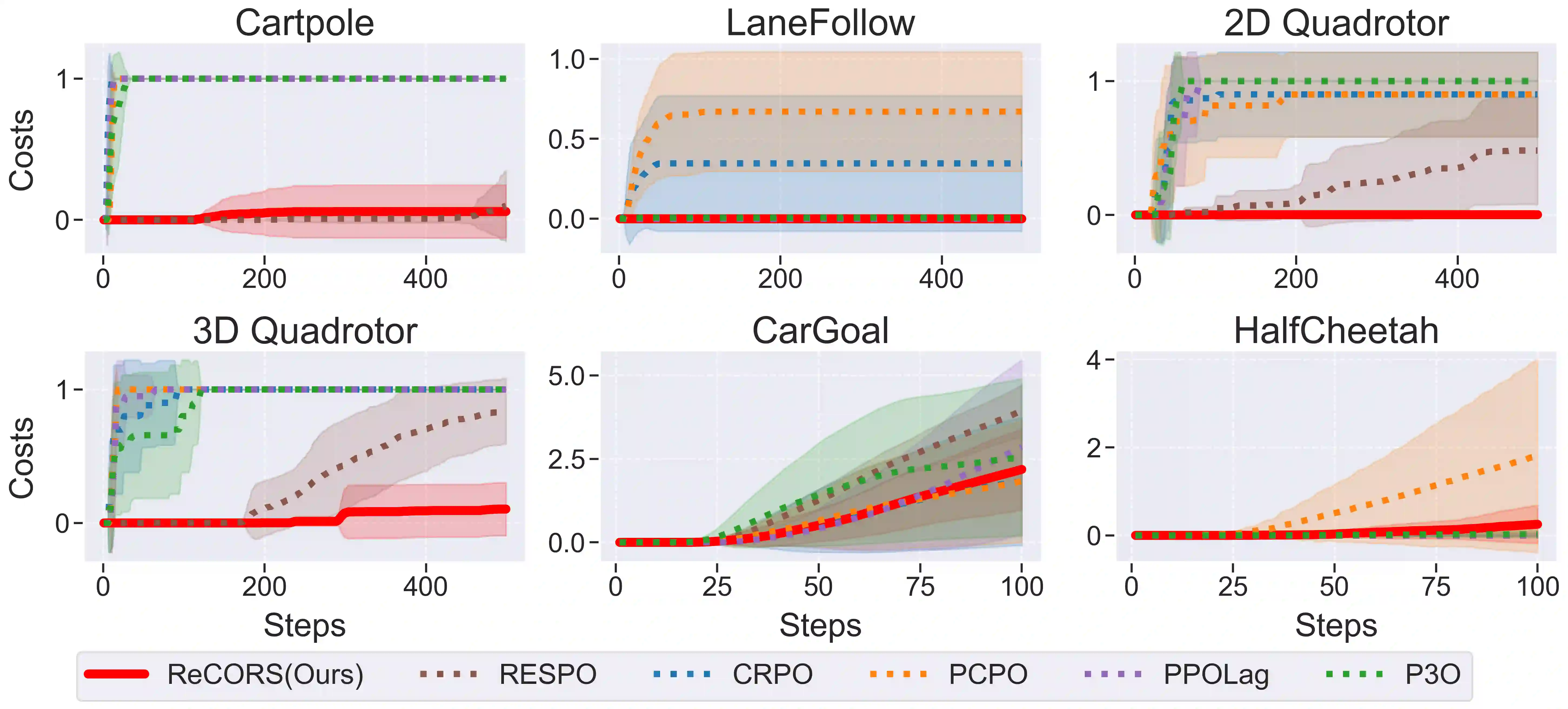

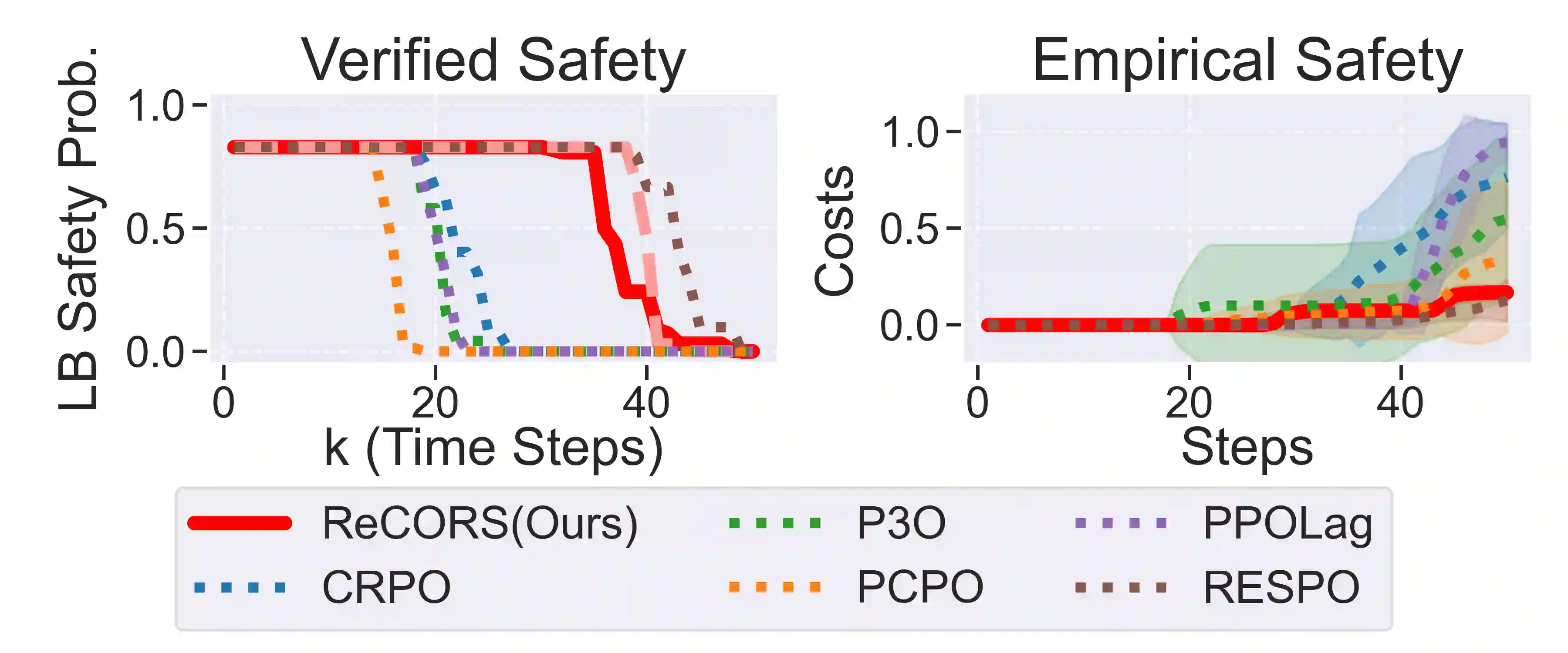

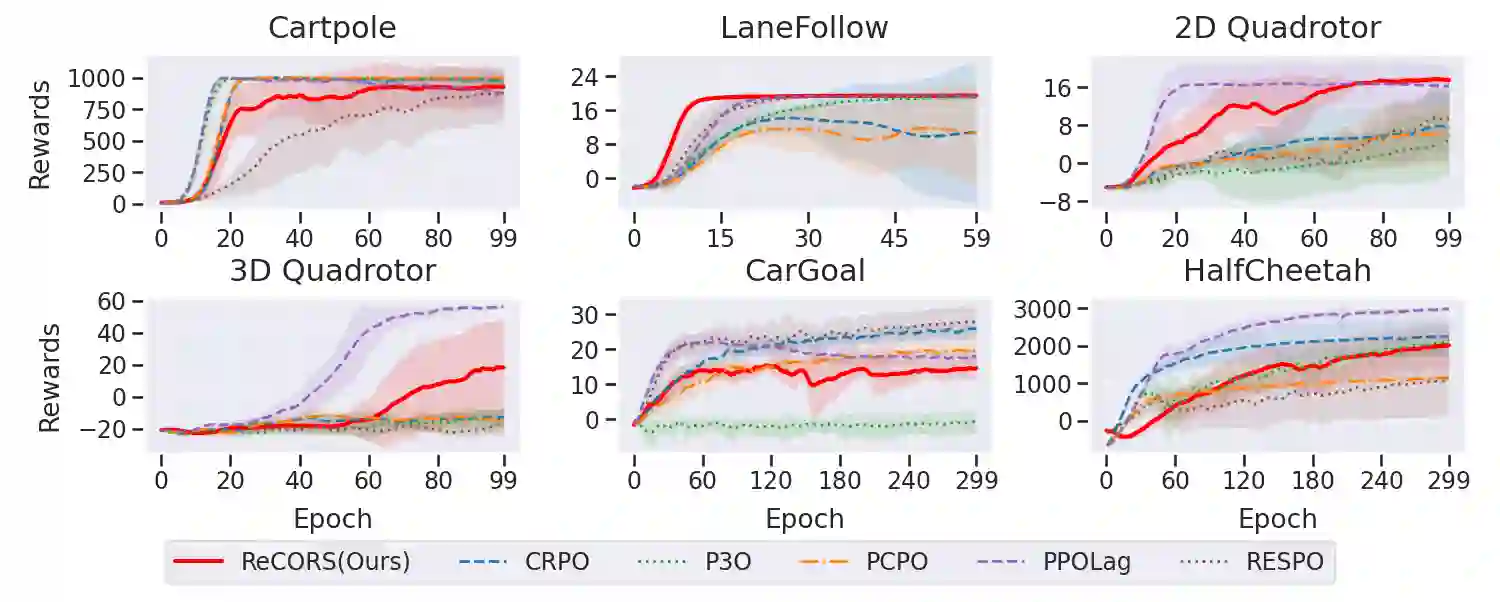

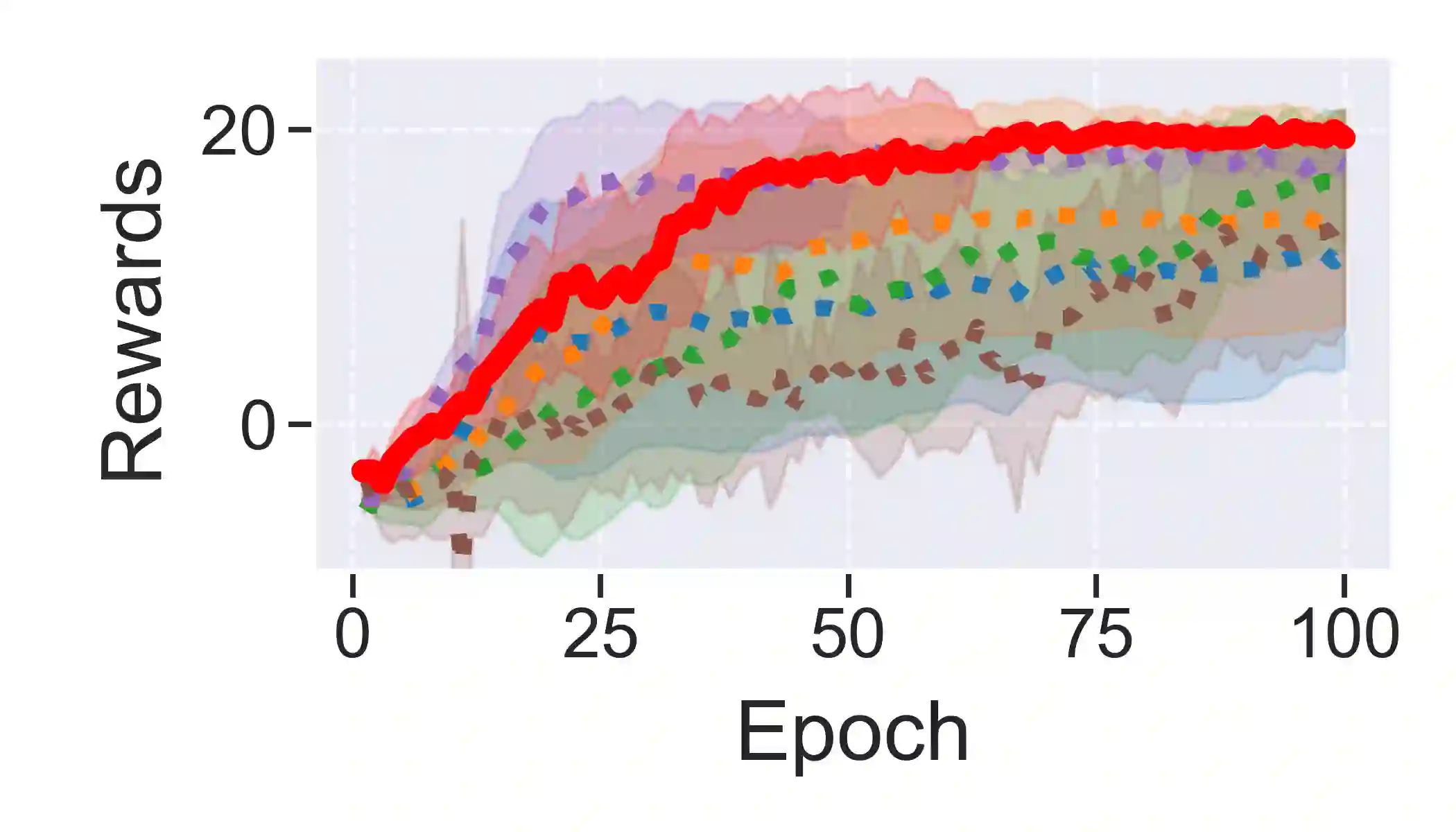

Designing provably safe control is a core problem in trustworthy autonomy. However, most prior work in this regard assumes either that the system dynamics are known or deterministic, or that the state and action space are finite, significantly limiting application scope. We address this limitation by developing a probabilistic verification framework for unknown dynamical systems which combines conformal prediction with reachability analysis. In particular, we use conformal prediction to obtain valid uncertainty intervals for the unknown dynamics at each time step, with reachability then verifying whether safety is maintained within the conformal uncertainty bounds. Next, we develop an algorithmic approach for training control policies that optimize nominal reward while also maximizing the planning horizon with sound probabilistic safety guarantees. We evaluate the proposed approach in seven safe control settings spanning four domains -- cartpole, lane following, drone control, and safe navigation -- for both affine and nonlinear safety specifications. Our experiments show that the policies we learn achieve the strongest provable safety guarantees while still maintaining high average reward.

翻译:设计可证明的安全控制是可信自主系统的核心问题。然而,该领域现有研究大多假设系统动力学已知或确定,或要求状态与动作空间有限,这严重限制了应用范围。为突破此局限,我们提出一种针对未知动态系统的概率验证框架,该框架将共形预测与可达性分析相结合。具体而言,我们利用共形预测在每个时间步为未知动力学获取有效的置信区间,随后通过可达性分析验证系统在共形不确定性边界内是否保持安全性。进一步,我们提出一种算法框架用于训练控制策略,该策略在优化名义奖励的同时,还能在严格概率安全保证下最大化规划时域。我们在涵盖四个领域(倒立摆、车道保持、无人机控制与安全导航)的七种安全控制场景中评估所提方法,覆盖仿射与非线性安全规范。实验表明,我们学习的控制策略在保持高平均奖励的同时,实现了当前最强的可证明安全保证。