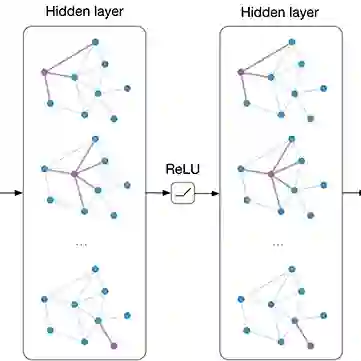

Relation extraction, an important natural language processing (NLP) task, aims to identify relations between named entities in text. Recently, graph convolutional networks (GCNs) over dependency trees have been widely used to capture syntactic features and have achieved attractive performance. However, most existing dependency-based approaches ignore the positive influence of words outside the dependency trees, which sometimes convey rich and useful information for relation extraction. In this paper, we propose a novel model, the Entity-aware Self-attention Contextualized GCN (ESC-GCN), which efficiently incorporates the syntactic structure of input sentences and the semantic context of sequences. Specifically, relative position self-attention captures overall semantic pairwise correlations that depend on word position, and contextualized graph convolutional networks capture rich intra-sentence dependencies between words through adequate pruning operations. Furthermore, an entity-aware attention layer dynamically selects which tokens are most decisive for the final relation prediction. In this way, our proposed model not only reduces the noisy impact of dependency trees, but also obtains easily ignored entity-related semantic representations. Extensive experiments on various tasks demonstrate that our model achieves encouraging performance compared with existing dependency-based and sequence-based models. In particular, our model excels at extracting relations between entities in long sentences.
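The abstract does not give the formula for relative position self-attention. As a hedged sketch, the snippet below assumes the formulation of Shaw et al. (2018): each attention score adds a learned embedding for the clipped offset j - i to the key, and a second embedding to the value. Queries, keys, and values are assumed to be already projected; `rel_position_attention`, `rel_k`, and `rel_v` are hypothetical names, not the paper's.

```python
import math


def clip(offset, max_dist):
    """Clip a relative offset j - i into [-max_dist, max_dist]."""
    return max(-max_dist, min(max_dist, offset))


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def rel_position_attention(q, k, v, rel_k, rel_v, max_dist):
    """Single-head self-attention with relative position embeddings (assumed Shaw et al. style).

    q, k, v: lists of n vectors (already projected), each of length dim.
    rel_k, rel_v: lists of 2 * max_dist + 1 vectors; index r + max_dist holds the
    embedding for clipped offset r (hypothetical learned parameters).
    """
    n, dim = len(q), len(q[0])
    out = []
    for i in range(n):
        idx = [clip(j - i, max_dist) + max_dist for j in range(n)]
        # e_ij = q_i . (k_j + a^K_ij) / sqrt(dim)
        scores = [
            sum(a * (b + c) for a, b, c in zip(q[i], k[j], rel_k[idx[j]])) / math.sqrt(dim)
            for j in range(n)
        ]
        alpha = softmax(scores)
        # z_i = sum_j alpha_ij * (v_j + a^V_ij)
        z = [
            sum(alpha[j] * (v[j][d] + rel_v[idx[j]][d]) for j in range(n))
            for d in range(dim)
        ]
        out.append(z)
    return out


# Toy check: a large key bias for offset +1 makes token 0 attend to later tokens,
# while token 2 (which has no later tokens) attends uniformly.
q = [[1.0], [1.0], [1.0]]
k = [[0.0], [0.0], [0.0]]
v = [[1.0], [2.0], [3.0]]
rel_k = [[0.0], [0.0], [5.0]]  # offsets -1, 0, +1
rel_v = [[0.0], [0.0], [0.0]]
z = rel_position_attention(q, k, v, rel_k, rel_v, max_dist=1)
```

Because the score depends on the offset j - i rather than on absolute positions, the same learned bias applies anywhere in the sentence, which is one reason relative encodings are often preferred for variable-length inputs.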
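The abstract says the contextualized GCN relies on pruning but does not state the rule. The sketch below assumes path-centric pruning in the style of Zhang et al.'s C-GCN (keep tokens within `k` hops of the shortest dependency path between the two entities) and the normalized update h_i' = ReLU(sum_j Ã_ij W h_j / d_i + b), where Ã is the pruned tree's adjacency plus self-loops and d_i is the row sum of Ã. Entities are simplified to single head tokens, `heads` encodes the parse with -1 for the root, and all function names are hypothetical.

```python
from collections import deque


def neighbors(heads):
    """Undirected adjacency lists; heads[i] is token i's head index, -1 for the root."""
    nbrs = [[] for _ in heads]
    for i, h in enumerate(heads):
        if h >= 0:
            nbrs[i].append(h)
            nbrs[h].append(i)
    return nbrs


def bfs(nbrs, sources):
    """Multi-source BFS returning hop distances and parent pointers."""
    dist = [None] * len(nbrs)
    parent = [None] * len(nbrs)
    for s in sources:
        dist[s] = 0
    queue = deque(sources)
    while queue:
        u = queue.popleft()
        for w in nbrs[u]:
            if dist[w] is None:
                dist[w] = dist[u] + 1
                parent[w] = u
                queue.append(w)
    return dist, parent


def path_centric_prune(heads, subj, obj, k=1):
    """Keep tokens within k hops of the shortest dependency path between subj and obj."""
    nbrs = neighbors(heads)
    _, parent = bfs(nbrs, [subj])
    path, node = [], obj
    while node is not None:  # walk back from obj to subj along BFS parents
        path.append(node)
        node = parent[node]
    dist, _ = bfs(nbrs, path)
    return {i for i, d in enumerate(dist) if d is not None and d <= k}


def gcn_layer(h, heads, keep, weight, bias):
    """h_i' = ReLU(sum_j A~_ij (W h_j) / d_i + b) over the pruned tree plus self-loops."""
    n = len(h)
    a = [[1.0 if i == j else 0.0 for j in range(n)] for i in range(n)]  # self-loops for all tokens
    for i, hd in enumerate(heads):
        if hd >= 0 and i in keep and hd in keep:  # pruned tokens keep only their self-loop
            a[i][hd] = a[hd][i] = 1.0
    wh = [[sum(w * x for w, x in zip(row, h_j)) for row in weight] for h_j in h]
    out = []
    for i in range(n):
        d_i = sum(a[i])
        out.append([
            max(0.0, sum(a[i][j] * wh[j][c] for j in range(n)) / d_i + bias[c])
            for c in range(len(bias))
        ])
    return out


# "He founded the company in 1998" with a hypothetical parse; subj = He, obj = company.
heads = [1, -1, 3, 1, 5, 1]
keep = path_centric_prune(heads, subj=0, obj=3, k=1)
h = [[1.0], [2.0], [3.0], [4.0], [5.0], [6.0]]
out = gcn_layer(h, heads, keep, weight=[[1.0]], bias=[0.0])
```

With `k=1`, "in" (two hops from the path) is pruned, so its edge to "1998" is dropped before aggregation; raising `k` trades more context against more potential parse noise.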
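The abstract describes the entity-aware attention layer only at a high level. One plausible reading, sketched here as an assumption rather than the paper's exact formula, scores every token against a query built from the two entity representations (here simply their mean), normalizes the scores with a softmax, and pools a sentence vector for the relation classifier. `entity_aware_pool` is a hypothetical name.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def entity_aware_pool(h, subj, obj):
    """Weight tokens by scaled dot-product similarity to the mean entity vector."""
    dim = len(h[0])
    query = [(h[subj][c] + h[obj][c]) / 2 for c in range(dim)]
    scores = [sum(q * x for q, x in zip(query, h_i)) / math.sqrt(dim) for h_i in h]
    alpha = softmax(scores)  # one weight per token, summing to 1
    pooled = [sum(a * h_i[c] for a, h_i in zip(alpha, h)) for c in range(dim)]
    return alpha, pooled


# Tokens 0 and 2 (the entities) align with the query, so they receive the largest weights.
h = [[1.0, 0.0], [0.0, 1.0], [1.0, 0.0], [0.0, 0.0]]
alpha, pooled = entity_aware_pool(h, subj=0, obj=2)
```

Using the entity mean as the query keeps the sketch parameter-free; a trained model would more likely learn projection matrices for the query and keys.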
