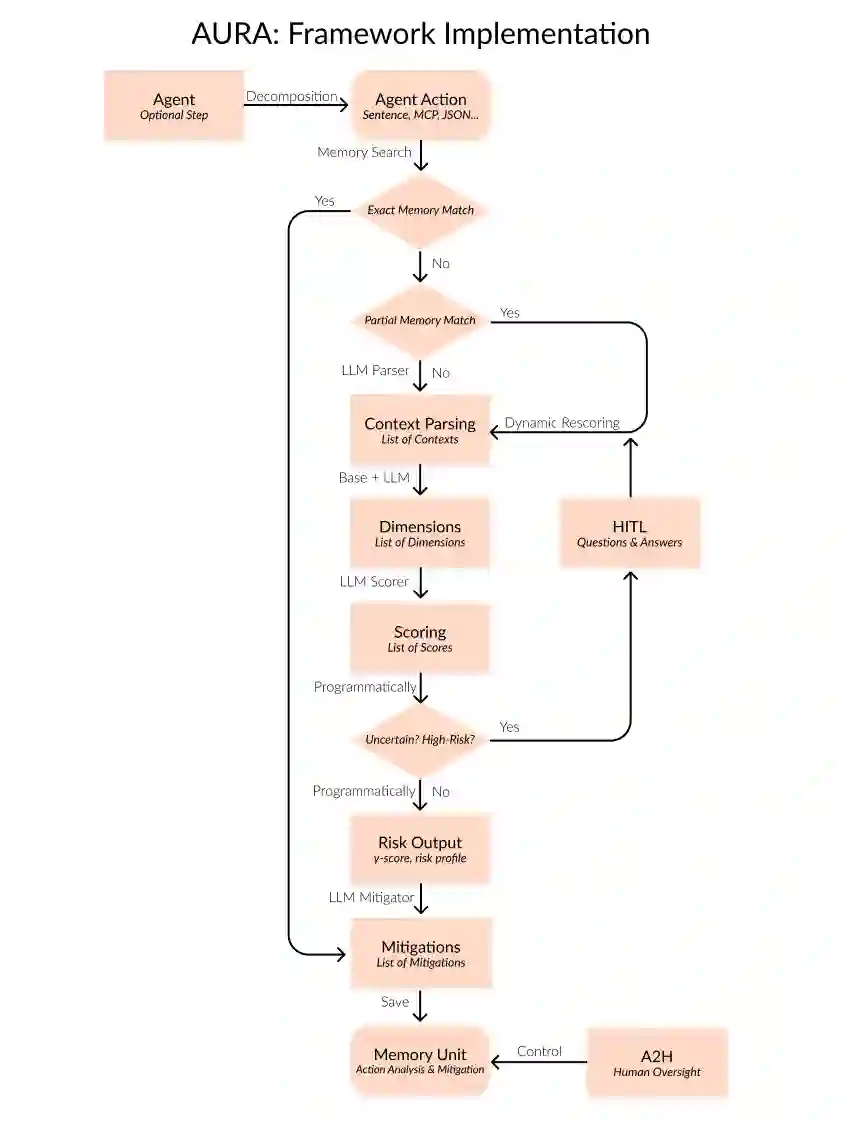

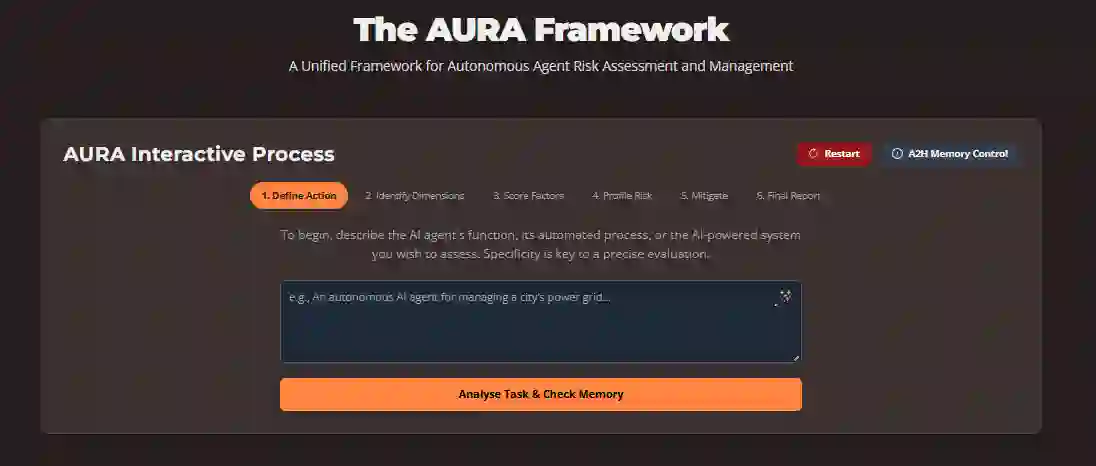

As autonomous agentic AI systems see increasing adoption across organisations, persistent challenges in alignment, governance, and risk management threaten to impede deployment at scale. We present AURA (Agent aUtonomy Risk Assessment), a unified framework designed to detect, quantify, and mitigate risks arising from agentic AI. Building on recent research and practical deployments, AURA introduces a gamma-based risk scoring methodology that balances risk assessment accuracy against computational efficiency and practical considerations. AURA provides an interactive process to score, evaluate, and mitigate the risks of running one or more AI agents, either synchronously or asynchronously (autonomously). The framework is engineered for Human-in-the-Loop (HITL) oversight and provides Agent-to-Human (A2H) communication mechanisms. It integrates seamlessly with agentic systems for autonomous self-assessment and is interoperable with established protocols (MCP and A2A) and tools. AURA supports responsible and transparent adoption of agentic AI, providing robust risk detection and mitigation while balancing computational resource use, which positions it as a critical enabler for large-scale, governable agentic AI in enterprise environments.
