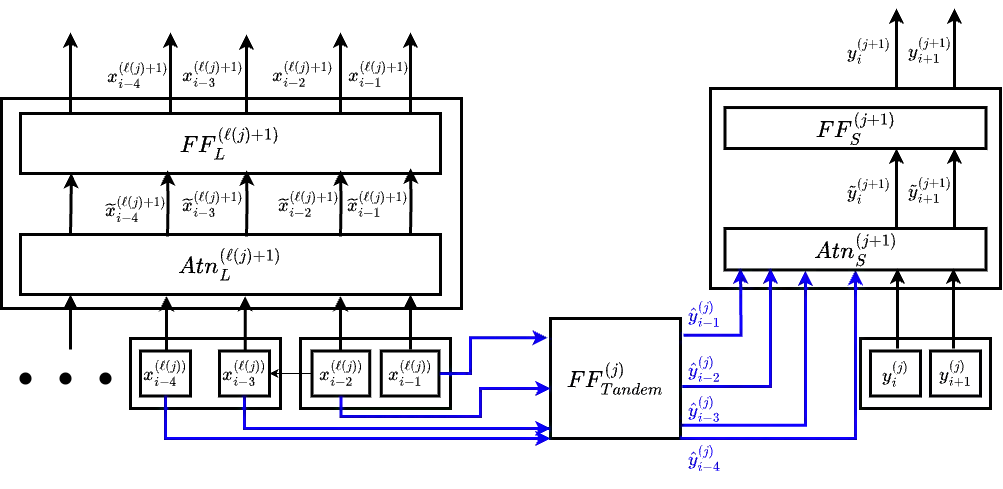

The autoregressive nature of conventional large language models (LLMs) inherently limits inference speed, because tokens are generated one at a time. Speculative and parallel decoding techniques attempt to mitigate this, but each has a limitation: they either rely on less accurate smaller models for generation or fail to fully leverage the base LLM's representations. We introduce a novel architecture, Tandem transformers, to address these issues. This architecture combines (1) a small autoregressive model and (2) a large model operating in block mode (processing multiple tokens simultaneously). The small model's predictive accuracy is substantially enhanced by letting it attend to the large model's richer representations. On the PaLM2 pretraining dataset, a tandem of PaLM2-Bison and PaLM2-Gecko demonstrates a 3.3% improvement in next-token prediction accuracy over a standalone PaLM2-Gecko, and offers a 1.16x speedup compared to a PaLM2-Otter model with comparable downstream performance. We further incorporate the tandem model within the speculative decoding (SPEED) framework, where the large model validates tokens from the small model. As a result, the tandem of PaLM2-Bison and PaLM2-Gecko achieves a substantial speedup (around 1.14x faster than using vanilla PaLM2-Gecko in SPEED) while maintaining identical downstream task accuracy.
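The draft-and-verify idea behind speculative decoding, in which the large model validates tokens from the small model, can be illustrated with a minimal sketch. Everything here is an assumption made for illustration: the `NextToken` interface, the toy models `toy_target` and `toy_draft`, the `block_len` parameter, and the greedy exact-match acceptance rule, which is a simplification and not necessarily the acceptance rule used in SPEED. The sketch also omits the tandem-specific part, namely the small model attending to the large model's representations.

```python
from typing import Callable, List

Token = int
# Hypothetical interface: a model maps a token prefix to its greedy next token.
NextToken = Callable[[List[Token]], Token]


def greedy_decode(model: NextToken, prompt: List[Token], num_new: int) -> List[Token]:
    """Plain autoregressive decoding: one model call per generated token."""
    seq = list(prompt)
    for _ in range(num_new):
        seq.append(model(seq))
    return seq


def speculative_decode(draft: NextToken, target: NextToken, prompt: List[Token],
                       num_new: int, block_len: int = 4) -> List[Token]:
    """Draft-and-verify decoding with a greedy exact-match acceptance rule."""
    seq = list(prompt)
    goal = len(prompt) + num_new
    while len(seq) < goal:
        # 1. The small model drafts a block of tokens autoregressively.
        proposal: List[Token] = []
        for _ in range(block_len):
            proposal.append(draft(seq + proposal))

        # 2. The large model checks the block. A real system would score all
        #    positions in one block-mode forward pass; here we call it per position.
        accepted: List[Token] = []
        for tok in proposal:
            expected = target(seq + accepted)
            if tok == expected:
                accepted.append(tok)
            else:
                # First mismatch: keep the large model's token, discard the rest.
                accepted.append(expected)
                break
        else:
            # Whole block accepted: the large model contributes one extra token.
            accepted.append(target(seq + accepted))

        seq.extend(accepted)
    return seq[:goal]


# Toy stand-ins for the large and small models (purely illustrative).
def toy_target(seq: List[Token]) -> Token:
    return (3 * seq[-1] + len(seq)) % 17


def toy_draft(seq: List[Token]) -> Token:
    # Agrees with the target except at every third position.
    tok = toy_target(seq)
    return tok if len(seq) % 3 else (tok + 1) % 17


if __name__ == "__main__":
    prompt = [1, 2]
    fast = speculative_decode(toy_draft, toy_target, prompt, num_new=20)
    slow = greedy_decode(toy_target, prompt, num_new=20)
    print(fast == slow)
```

Under this acceptance rule, every emitted token is one the large model would have produced greedily, so the output matches plain greedy decoding with the large model. In this simplified setting, that is why verification can preserve downstream accuracy while the speedup depends on how often the draft's tokens are accepted.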
