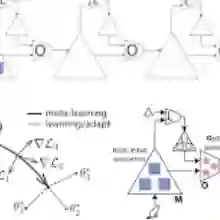

Group-Relative Policy Optimization (GRPO) has emerged as an efficient paradigm for aligning Large Language Models (LLMs), yet its efficacy is largely confined to domains with verifiable ground truths. Extending GRPO to open-domain settings remains a critical challenge: unconstrained generation involves multi-faceted and often conflicting objectives, such as creativity versus factuality, for which rigid, static reward scalarization is inherently suboptimal. To address this, we propose MAESTRO (Meta-learning Adaptive Estimation of Scalarization Trade-offs for Reward Optimization), which introduces a meta-cognitive orchestration layer that treats reward scalarization as a dynamic latent policy and uses the model's terminal hidden states as a semantic bottleneck to perceive task-specific priorities. We formulate this as a contextual bandit problem within a bi-level optimization framework, in which a lightweight Conductor network co-evolves with the policy, using group-relative advantages as a meta-reward signal. Across seven benchmarks, MAESTRO consistently outperforms single-reward and static multi-objective baselines while preserving the efficiency advantages of GRPO and, in some settings, even reducing redundant generation.
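
To make the idea of context-dependent scalarization concrete, here is a minimal Python sketch of the forward pass only. It is an illustration under stated assumptions, not the authors' implementation. The abstract does not specify the Conductor architecture, so a single linear projection followed by a softmax is assumed here. The names `conductor_weights`, `scalarize`, `projection`, and the toy numbers are hypothetical. The group-relative advantage uses the standard GRPO normalization (reward minus group mean, divided by group standard deviation). The meta-update that trains the Conductor is left out because the abstract does not describe it.

```python
import math
from statistics import mean, pstdev


def softmax(logits):
    """Numerically stable softmax over a list of floats."""
    peak = max(logits)
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def conductor_weights(hidden_state, projection):
    """Map a terminal hidden state to one weight per reward objective.

    ASSUMPTION: a single linear layer plus softmax stands in for the
    Conductor network; `projection` holds one row per objective.
    """
    logits = [sum(p * h for p, h in zip(row, hidden_state)) for row in projection]
    return softmax(logits)


def scalarize(reward_vectors, weights):
    """Collapse each multi-objective reward vector into a weighted sum."""
    return [sum(w * r for w, r in zip(weights, vec)) for vec in reward_vectors]


def group_relative_advantages(rewards, eps=1e-8):
    """Standard GRPO advantage: normalize rewards within one sampled group."""
    mu = mean(rewards)
    sigma = pstdev(rewards)
    return [(r - mu) / (sigma + eps) for r in rewards]


# Toy example (all values hypothetical): two objectives, three sampled responses.
hidden_state = [0.2, -0.1, 0.4]
projection = [
    [1.0, 0.0, 0.0],  # row producing the logit for objective 0 (e.g. creativity)
    [0.0, 0.0, 1.0],  # row producing the logit for objective 1 (e.g. factuality)
]
weights = conductor_weights(hidden_state, projection)

reward_vectors = [[0.9, 0.2], [0.4, 0.8], [0.6, 0.6]]
scalar_rewards = scalarize(reward_vectors, weights)
advantages = group_relative_advantages(scalar_rewards)

print("weights:", weights)
print("advantages:", advantages)
```

In this sketch the weights change with the prompt's hidden state, so the same reward vectors can produce a different ranking of responses under different contexts, which is what a static scalarization cannot do.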
