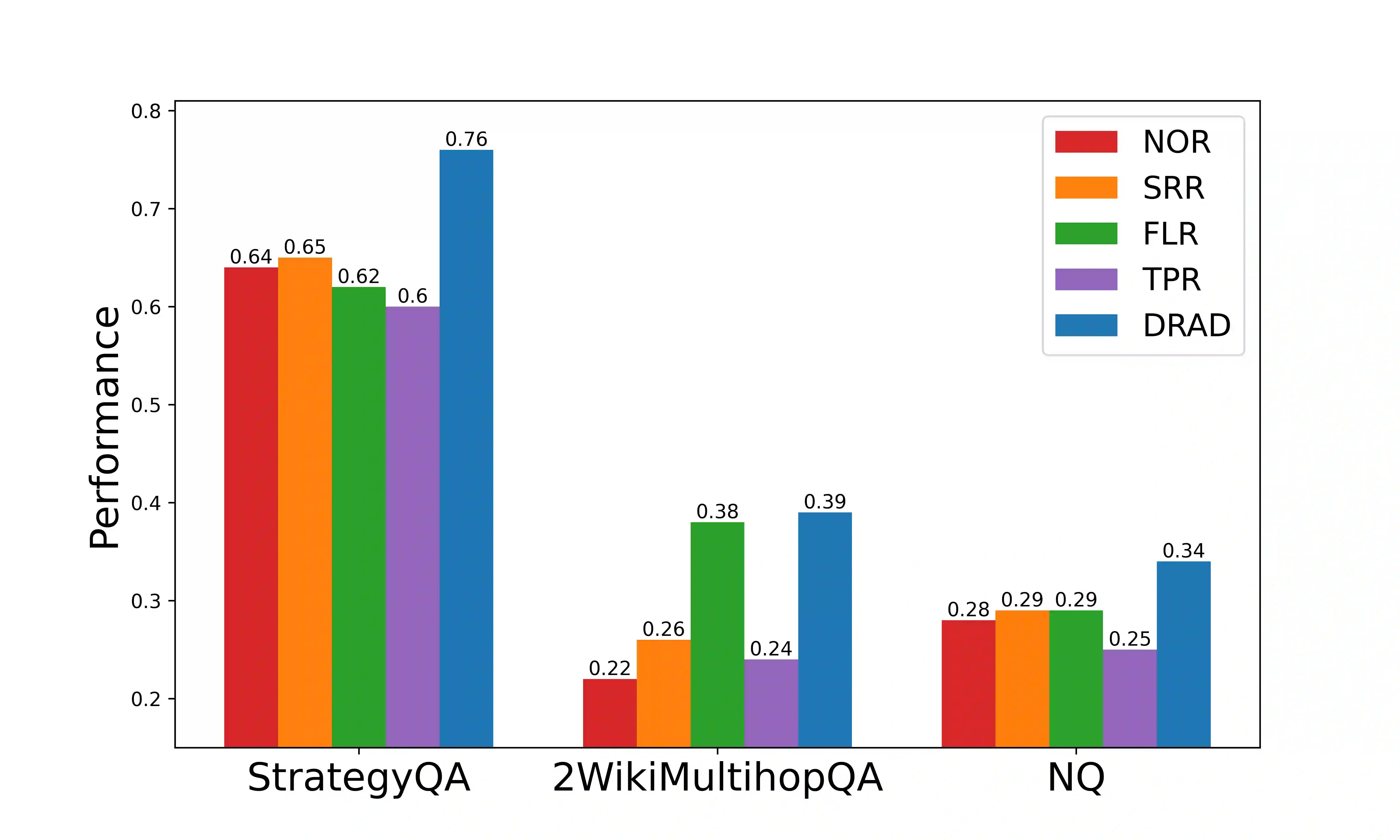

The emergence of Large Language Models (LLMs) has changed how users access information, shifting them from traditional search engines toward direct question-and-answer interactions with LLMs. However, the widespread adoption of LLMs has exposed a significant challenge known as hallucination, in which an LLM generates a coherent yet factually inaccurate response. Hallucination has led users to distrust information retrieval systems built on LLMs. To tackle this challenge, this paper proposes Dynamic Retrieval Augmentation based on hallucination Detection (DRAD), a novel method to detect and mitigate hallucinations in LLMs. DRAD improves upon traditional retrieval augmentation by dynamically adapting the retrieval process based on real-time hallucination detection. It has two main components: Real-time Hallucination Detection (RHD), which identifies potential hallucinations without external models, and Self-correction based on External Knowledge (SEK), which corrects these errors using external knowledge. Experimental results show that DRAD achieves superior performance in both detecting and mitigating hallucinations in LLMs. All of our code and data are open-sourced at https://github.com/oneal2000/EntityHallucination.

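
The detect-then-retrieve loop described in the abstract can be illustrated with a minimal sketch. This is not the authors' implementation: the abstract does not say how RHD scores a generation, so the token-probability threshold used here is an assumption, and the names `Token`, `detect_hallucination`, `drad_style_generate`, `toy_generate`, and `toy_retrieve` are hypothetical. The sketch also regenerates the whole answer after retrieval, which is a simplification.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass
class Token:
    text: str
    prob: float  # probability the model assigned to this token (assumed to be available)


def detect_hallucination(tokens: List[Token], threshold: float = 0.3) -> Optional[int]:
    """Return the index of the first low-confidence token, or None if none is found.

    Assumption: low token probability stands in for the RHD signal.
    """
    for i, tok in enumerate(tokens):
        if tok.prob < threshold:
            return i
    return None


def drad_style_generate(
    prompt: str,
    generate: Callable[[str, str], List[Token]],
    retrieve: Callable[[str], List[str]],
    threshold: float = 0.3,
    max_rounds: int = 3,
) -> str:
    """Generate an answer, retrieving external knowledge only when a detection fires."""
    context = ""
    tokens = generate(prompt, context)
    for _ in range(max_rounds):
        idx = detect_hallucination(tokens, threshold)
        if idx is None:
            break  # nothing suspicious, so no retrieval is needed
        # Build the query from the prompt plus the text before the suspicious token.
        prefix = "".join(t.text for t in tokens[:idx])
        context = "\n".join(retrieve(prompt + " " + prefix))
        # Simplification: regenerate the full answer with the retrieved context.
        tokens = generate(prompt, context)
    return "".join(t.text for t in tokens)


# Toy stand-ins for an LLM and a retriever, for illustration only.
def toy_generate(prompt: str, context: str) -> List[Token]:
    if "Canberra" in context:
        return [Token("The capital is ", 0.9), Token("Canberra", 0.95)]
    return [Token("The capital is ", 0.9), Token("Sydney", 0.2)]


def toy_retrieve(query: str) -> List[str]:
    return ["Canberra is the capital of Australia."]


if __name__ == "__main__":
    print(drad_style_generate("What is the capital of Australia?", toy_generate, toy_retrieve))
```

In this toy run, retrieval is triggered only because the first draft contains a low-confidence token, which is the contrast with retrieval augmentation that always retrieves before generating.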