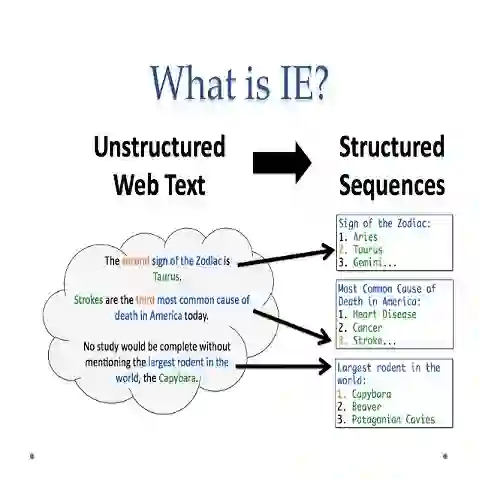

Zero-shot information extraction (IE) aims to build IE systems from unannotated text. The task is challenging because it involves little human intervention, but it is worthwhile: zero-shot IE reduces the time and effort that data labeling takes. Recent work on large language models (LLMs, e.g., GPT-3, ChatGPT) shows promising performance in zero-shot settings, which inspires us to explore prompt-based methods. In this work, we ask whether strong IE models can be constructed by directly prompting LLMs. Specifically, we transform the zero-shot IE task into a multi-turn question-answering problem with a two-stage framework (ChatIE). Using ChatGPT, we extensively evaluate our framework on three IE tasks: entity-relation triple extraction, named entity recognition, and event extraction. Empirical results on six datasets across two languages show that ChatIE achieves impressive performance and even surpasses some full-shot models on several datasets (e.g., NYT11-HRL). We believe that our work could shed light on building IE models with limited resources.
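To make the multi-turn, two-stage idea concrete, here is a minimal Python sketch for the triple-extraction case. It is not the authors' implementation. It assumes that stage one asks which relation types a sentence expresses and that stage two asks one follow-up question per detected type; the abstract does not spell out either stage. The prompt wording, the `ChatFn` type, `extract_triples`, and the `fake_chat` stub that stands in for a real LLM call are all hypothetical.

```python
from typing import Callable, Dict, List, Tuple

# Hypothetical interface: receives the chat history so far, returns the model's reply.
ChatFn = Callable[[List[Dict[str, str]]], str]


def extract_triples(
    sentence: str, relation_types: List[str], chat: ChatFn
) -> List[Tuple[str, str, str]]:
    """Two-stage, multi-turn extraction of (subject, relation, object) triples."""
    history: List[Dict[str, str]] = []

    # Stage 1 (assumed): ask which of the candidate relation types are present.
    history.append({
        "role": "user",
        "content": (
            f'Given the sentence: "{sentence}"\n'
            f"Which of these relation types does it express? "
            f"Options: {', '.join(relation_types)}. "
            'Answer with a comma-separated list, or "none".'
        ),
    })
    reply = chat(history)
    history.append({"role": "assistant", "content": reply})
    found = [r for r in relation_types if r.lower() in reply.lower()]

    # Stage 2 (assumed): one follow-up turn per detected relation type.
    triples: List[Tuple[str, str, str]] = []
    for rel in found:
        history.append({
            "role": "user",
            "content": (
                f'For the relation "{rel}", list each (subject; object) '
                "pair in the sentence, one per line."
            ),
        })
        reply = chat(history)
        history.append({"role": "assistant", "content": reply})
        for line in reply.splitlines():
            parts = [p.strip(" ()") for p in line.split(";")]
            if len(parts) == 2 and all(parts):
                triples.append((parts[0], rel, parts[1]))
    return triples


def fake_chat(history: List[Dict[str, str]]) -> str:
    """Canned stand-in for an LLM so the sketch runs offline."""
    last = history[-1]["content"]
    if "Which of these" in last:
        return "born_in"
    return "(Marie Curie; Warsaw)"


if __name__ == "__main__":
    print(extract_triples(
        "Marie Curie was born in Warsaw.", ["born_in", "works_for"], fake_chat
    ))
```

Keeping the full history in each call is what makes this a multi-turn conversation rather than a set of independent prompts: the stage-two questions can refer back to the sentence given in stage one. In practice `fake_chat` would be replaced by a call to a chat-style LLM, and the reply parsing would need to be more tolerant of free-form answers.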
