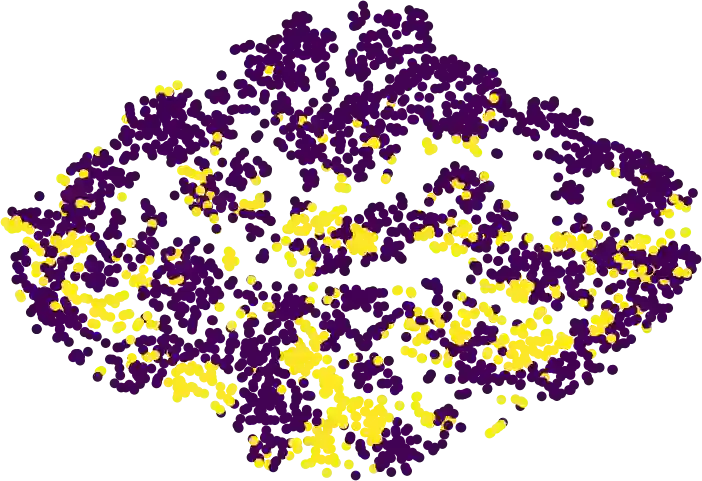

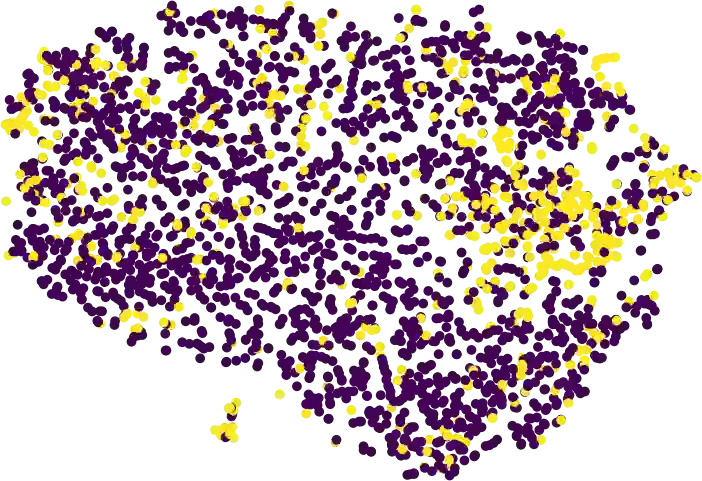

Recent advances in large language models (LLMs) have significantly enhanced their knowledge and generative capabilities, leading to a surge of interest in using LLMs for high-quality data synthesis. However, generating synthetic data by prompting LLMs remains challenging because LLMs have a limited understanding of target data distributions and because prompt engineering is complex, especially for structured formatted data. To address these issues, we introduce DiffLM, a controllable data synthesis framework based on a variational autoencoder (VAE), which further (1) leverages diffusion models to preserve more information about the original distribution and format structure in the learned latent distribution and (2) decouples the learning of target distribution knowledge from the LLM's generative objectives via a plug-and-play latent feature injection module. Because we observed significant discrepancies between the VAE's latent representations and the real data distribution, we introduce a latent diffusion module into the framework to learn a fully expressive latent distribution. Evaluations on seven real-world datasets with structured formatted data (i.e., tabular, code, and tool data) demonstrate that DiffLM generates high-quality data, with performance on downstream tasks surpassing that of real data by 2 to 7 percent in certain cases. The data and code will be made publicly available upon completion of internal review.
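As a rough illustration of the three ingredients named in the abstract (a VAE latent, a diffusion process over that latent, and latent feature injection into the LLM), the following minimal sketch uses only the Python standard library. It is not the DiffLM implementation: the function names, the toy dimensions, and the choice of a soft-prompt prefix as the injection mechanism are all assumptions made for illustration, since the abstract does not specify these details.

```python
import math
import random


def reparameterize(mu, log_var, rng):
    """VAE sampling step: z = mu + sigma * eps, with eps ~ N(0, I)."""
    return [m + math.exp(0.5 * lv) * rng.gauss(0.0, 1.0)
            for m, lv in zip(mu, log_var)]


def kl_to_standard_normal(mu, log_var):
    """Closed-form KL(N(mu, diag(sigma^2)) || N(0, I)) used in the VAE loss."""
    return 0.5 * sum(math.exp(lv) + m * m - 1.0 - lv
                     for m, lv in zip(mu, log_var))


def forward_diffuse(z0, alpha_bar, rng):
    """Standard forward noising of a latent:
    z_t = sqrt(alpha_bar) * z0 + sqrt(1 - alpha_bar) * eps.
    A denoiser would be trained to predict eps from z_t.
    """
    eps = [rng.gauss(0.0, 1.0) for _ in z0]
    z_t = [math.sqrt(alpha_bar) * z + math.sqrt(1.0 - alpha_bar) * e
           for z, e in zip(z0, eps)]
    return z_t, eps


def inject_as_soft_prompt(z, proj, token_embeddings):
    """Hypothetical injection: project z into k prefix vectors and prepend
    them to the token embeddings fed to a (frozen) LLM decoder.

    proj is a list of k matrices, each of shape (d_model, d_latent).
    """
    prefix = [[sum(w * zi for w, zi in zip(row, z)) for row in matrix]
              for matrix in proj]
    return prefix + token_embeddings


rng = random.Random(0)
mu, log_var = [0.5, -1.0], [0.0, 0.0]          # toy encoder outputs
z = reparameterize(mu, log_var, rng)            # latent sample
z_t, eps = forward_diffuse(z, alpha_bar=0.5, rng=rng)
proj = [[[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]]   # k=1, d_model=3, d_latent=2
tokens = [[0.0, 0.0, 0.0], [1.0, 1.0, 1.0]]     # two toy token embeddings
decoder_input = inject_as_soft_prompt(z, proj, tokens)
print(len(decoder_input), "vectors passed to the decoder")
```

In this sketch the LLM only ever sees the projected latent as extra input vectors, which is one way to keep distribution learning (encoder and diffusion) separate from the LLM's own generative objective.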
