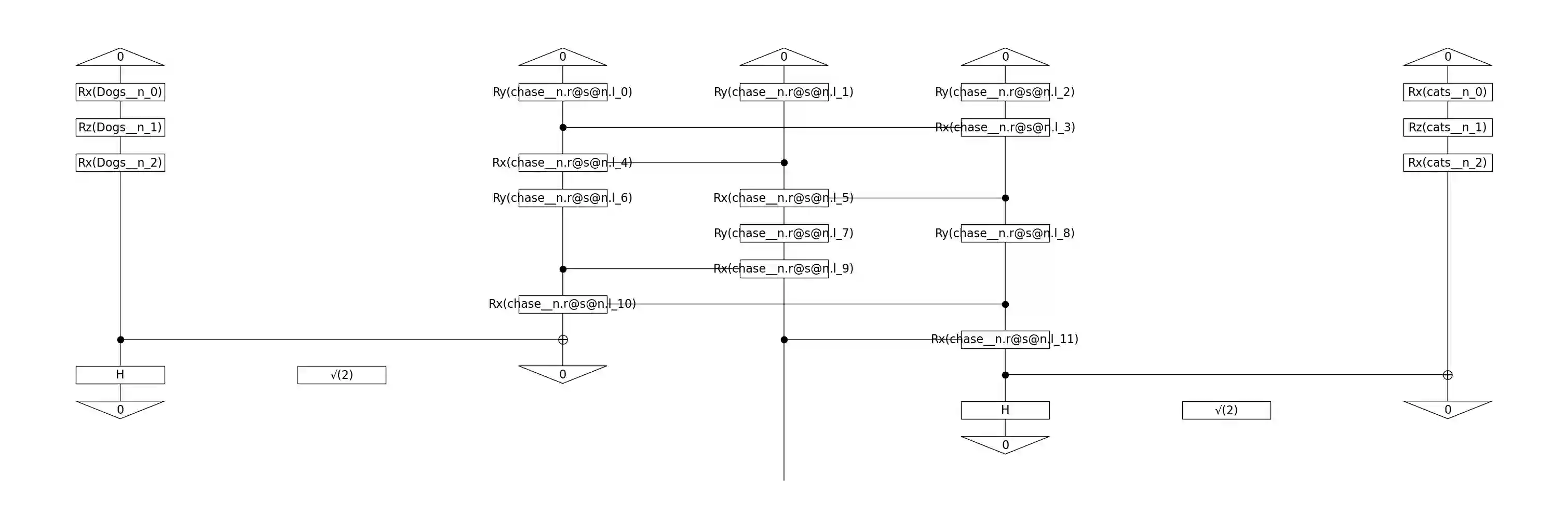

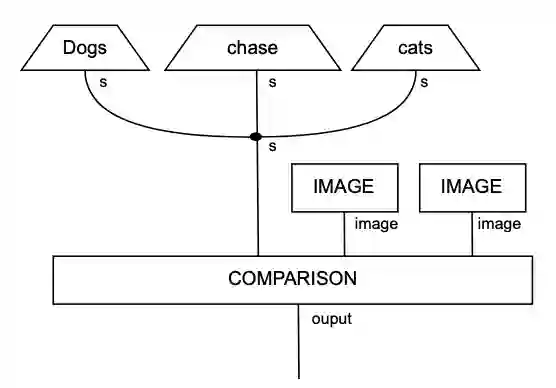

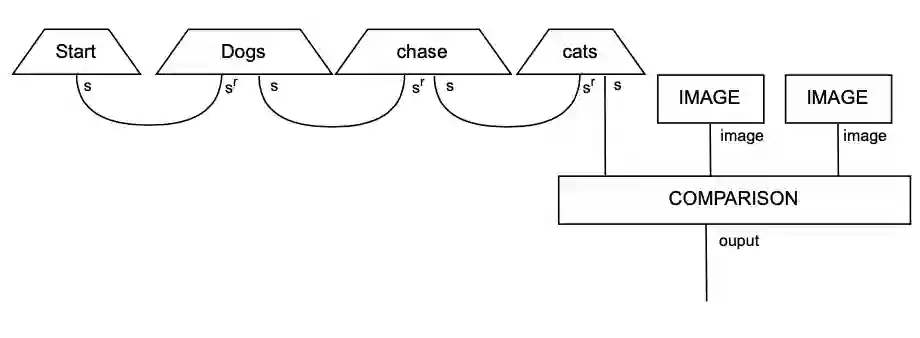

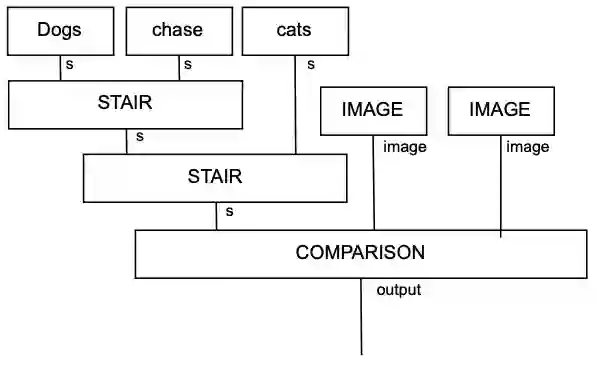

While large language models (LLMs) have advanced the field of natural language processing (NLP), their "black box" nature obscures their decision-making processes. To address this, researchers have developed structured approaches using higher-order tensors. These can model linguistic relations, but training them on classical computers stalls because of their excessive size. Tensors are natural inhabitants of quantum systems, and training on quantum computers provides a solution by translating text to variational quantum circuits. In this paper, we develop MultiQ-NLP: a framework for structure-aware data processing with multimodal text+image data. Here, "structure" refers to syntactic and grammatical relationships in language, as well as the hierarchical organization of visual elements in images. We enrich the translation with new types and type homomorphisms and develop novel architectures to represent structure. When tested on a mainstream image classification task (SVO Probes), our best model performed on par with state-of-the-art classical models; moreover, the best model was fully structured.
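To make the size problem concrete, the following is a minimal sketch, not the paper's implementation. It assumes the common compositional setup in which a transitive verb is an order-3 tensor contracted with subject and object vectors; the function names and the dimensions `d` and `s` are hypothetical, chosen only for illustration.

```python
import numpy as np

def sentence_vector(subject, verb, obj):
    """Contract an order-3 verb tensor with subject and object vectors."""
    # verb[i, s, j]: i indexes the subject space, s the sentence space,
    # j the object space (an assumed index convention).
    return np.einsum("i,isj,j->s", subject, verb, obj)

def verb_parameter_count(noun_dim, sentence_dim):
    """Number of entries in a single transitive-verb tensor."""
    return noun_dim * sentence_dim * noun_dim

rng = np.random.default_rng(0)
d, s = 4, 2  # hypothetical noun and sentence dimensions
subject = rng.normal(size=d)
obj = rng.normal(size=d)
verb = rng.normal(size=(d, s, d))

# One vector in the sentence space for "subject verb object".
meaning = sentence_vector(subject, verb, obj)
```

With 300-dimensional noun and sentence spaces, `verb_parameter_count(300, 300)` gives 27,000,000 entries for a single verb, which illustrates why classical training of such models becomes costly.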
