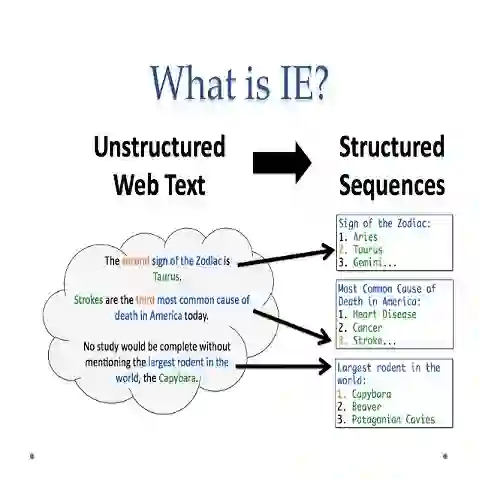

Information extraction (IE) aims to extract complex structured information from text. Numerous datasets have been constructed for various IE tasks, each requiring time-consuming and labor-intensive annotation. Nevertheless, most prevailing methods train task-specific models, so the common knowledge shared across IE tasks is not explicitly modeled. Moreover, the same phrase may receive inconsistent labels in different tasks, which poses a major challenge for knowledge transfer with a unified model. In this study, we propose TIE, a regularization-based transfer learning method for IE built on an instructed graph decoder. Specifically, we first construct an instruction pool for the datasets of all well-known IE tasks, and then present an instructed graph decoder that uniformly decodes various complex structures into a graph according to the corresponding instructions. In this way, the common knowledge shared with existing datasets can be learned and transferred to a new dataset with new labels. Furthermore, to alleviate the label inconsistency problem among IE tasks, we introduce a task-specific regularization strategy that does not perform updates with the gradients of two tasks when they point in 'opposite directions'. We conduct extensive experiments on 12 datasets spanning four IE tasks, and the results demonstrate the clear advantages of the proposed method.
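The abstract does not give the exact regularization rule, so the following is only a minimal sketch under one assumption: "opposite direction" is read as a negative inner product between the flattened gradient of the target task and the gradient of another task on the shared parameters, and a conflicting gradient is simply skipped for that step. The function names `dot` and `combine_task_gradients` are hypothetical and do not come from the TIE paper or any library.

```python
from typing import List, Sequence

Vector = List[float]


def dot(u: Sequence[float], v: Sequence[float]) -> float:
    """Inner product of two equal-length (flattened) gradient vectors."""
    if len(u) != len(v):
        raise ValueError("gradient vectors must have the same length")
    return sum(a * b for a, b in zip(u, v))


def combine_task_gradients(target_grad: Sequence[float],
                           other_grads: Sequence[Sequence[float]]) -> Vector:
    """Hypothetical conflict-aware update rule (an assumption, not TIE's code).

    The target task's gradient is always kept. A gradient from another task
    is added only if it does not point in an 'opposite direction', i.e. its
    inner product with the target gradient is non-negative.
    """
    combined = list(target_grad)
    for g in other_grads:
        if dot(g, target_grad) >= 0:
            # Compatible direction: accumulate this task's gradient.
            combined = [c + x for c, x in zip(combined, g)]
        # Otherwise the conflicting gradient is skipped for this step.
    return combined


if __name__ == "__main__":
    target = [1.0, 0.0]
    agreeing = [0.5, 0.5]      # inner product 0.5  -> kept
    conflicting = [-1.0, 0.2]  # inner product -1.0 -> skipped
    print(combine_task_gradients(target, [agreeing, conflicting]))  # [1.5, 0.5]
```

Dropping the conflicting gradient is the simplest reading of "does not update"; gradient-surgery methods such as PCGrad instead project a conflicting gradient onto the normal plane of the other, and the paper's actual strategy may differ from both.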
