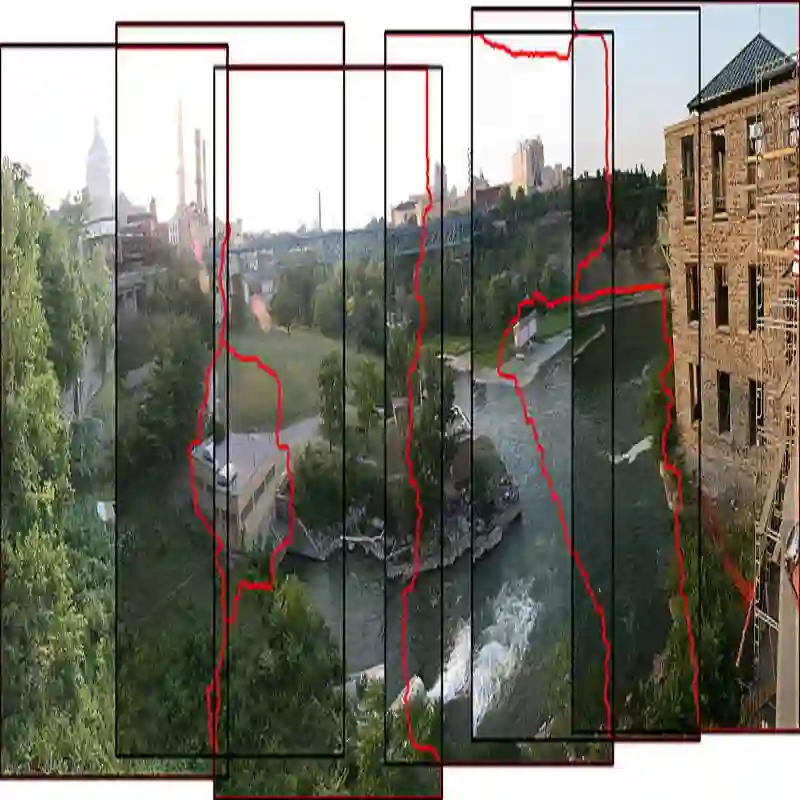

Collaborative perception has garnered significant attention for its ability to enhance the perception capabilities of individual vehicles by exchanging information with surrounding vehicle agents. However, existing collaborative perception systems are limited by inefficient user interaction and by the difficulty of producing photorealistic visualizations from multiple cameras. To address these challenges, this paper introduces ChatStitch, the first collaborative perception system capable of unveiling obscured blind-spot information through natural language commands integrated with external digital assets. To handle complex or abstract commands, ChatStitch employs a multi-agent collaborative framework based on Large Language Models. To provide the most intuitive perception for humans, ChatStitch proposes SV-UDIS, the first surround-view unsupervised deep image stitching method under the non-global-overlapping condition. We conducted extensive experiments on the open UDIS-D and MCOV-SLAM datasets and on our own real-world dataset. Specifically, our SV-UDIS method achieves state-of-the-art performance on the UDIS-D dataset for 3-, 4-, and 5-image stitching tasks, with PSNR improvements of 9%, 17%, and 21% and SSIM improvements of 8%, 18%, and 26%, respectively.
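
The stitching results above are reported as percentage improvements in PSNR and SSIM. The following is a minimal sketch of how the PSNR side of such a comparison can be computed. It assumes 8-bit images (peak value 255) and assumes that "improvement" means the relative change (new - baseline) / baseline. The abstract does not state which convention is used. The helper names `psnr` and `relative_improvement` are hypothetical and are not taken from the SV-UDIS code.

```python
import numpy as np


def psnr(reference: np.ndarray, test: np.ndarray, max_value: float = 255.0) -> float:
    """Peak signal-to-noise ratio (dB) between two images of equal shape.

    Assumes pixel values lie in [0, max_value]; 255 fits 8-bit images.
    """
    if reference.shape != test.shape:
        raise ValueError("images must have the same shape")
    # Compute the mean squared error in float64 so uint8 inputs cannot overflow.
    mse = np.mean((reference.astype(np.float64) - test.astype(np.float64)) ** 2)
    if mse == 0:
        return float("inf")  # identical images: PSNR is unbounded
    return float(10.0 * np.log10(max_value ** 2 / mse))


def relative_improvement(baseline: float, new: float) -> float:
    """Relative gain of `new` over `baseline` as a fraction (0.09 means 9%).

    Assumed convention: (new - baseline) / baseline.
    """
    return (new - baseline) / baseline


if __name__ == "__main__":
    ref = np.zeros((4, 4), dtype=np.uint8)
    out = ref.copy()
    out[0, 0] = 255  # a single saturated pixel error in a 4x4 image
    print(psnr(ref, out))                    # 10 * log10(16), about 12.04 dB
    print(relative_improvement(20.0, 21.8))  # about 0.09, i.e. 9%
```

The numbers in the usage block are illustrative only and are not results from the paper. SSIM is usually taken from an existing implementation rather than written by hand, and the same relative-change formula would then apply to its scores.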
