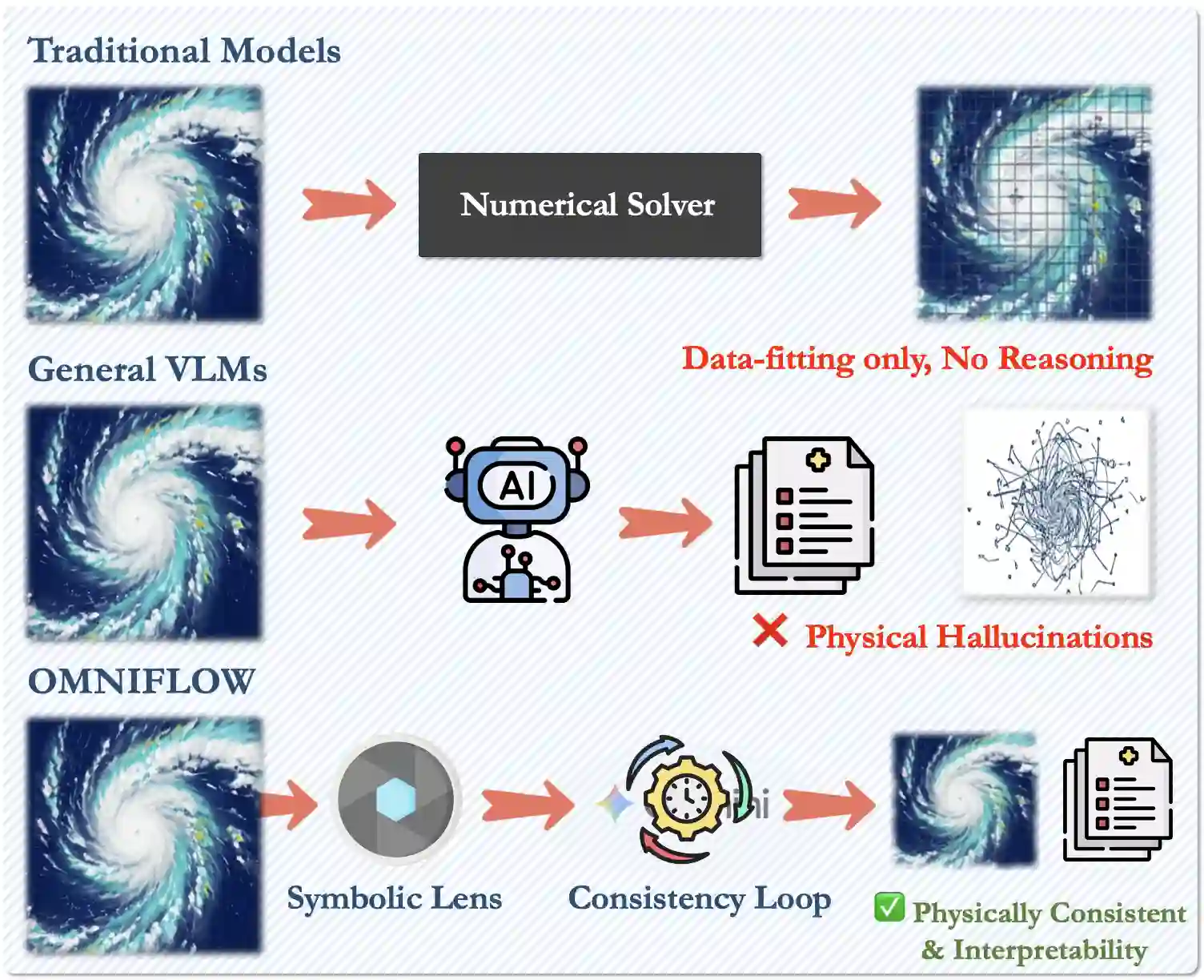

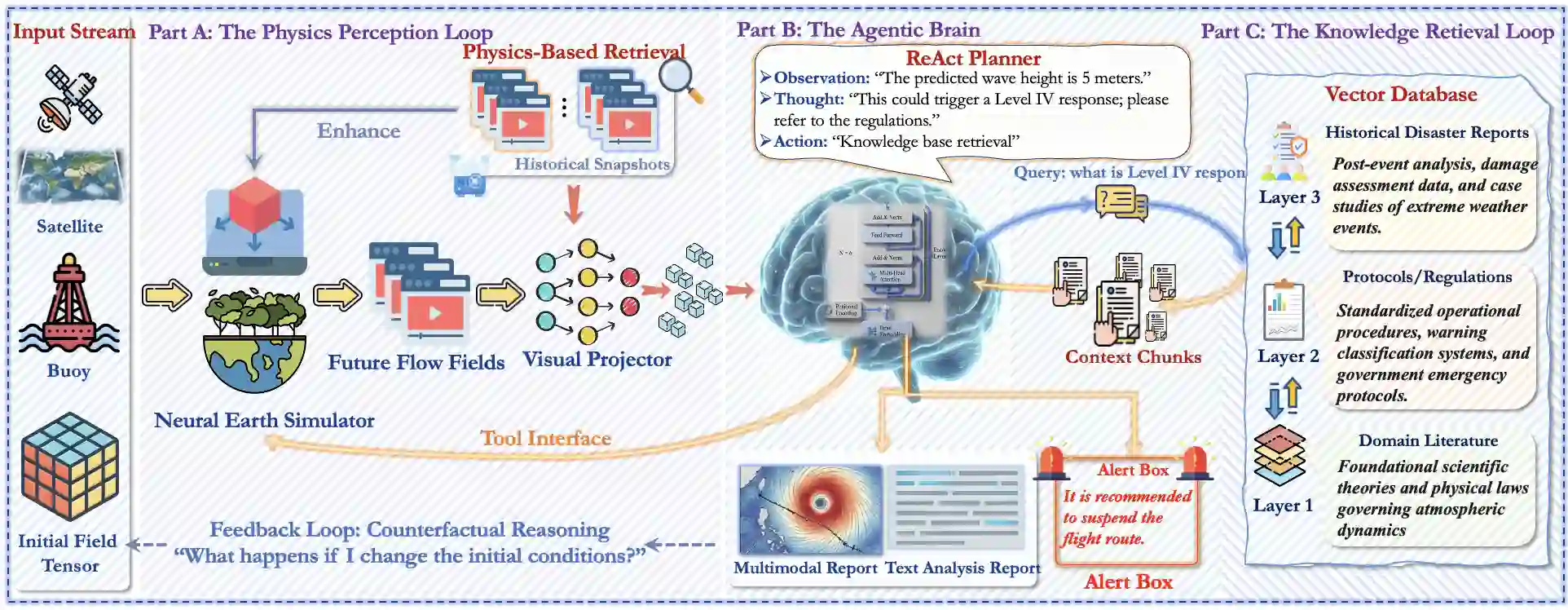

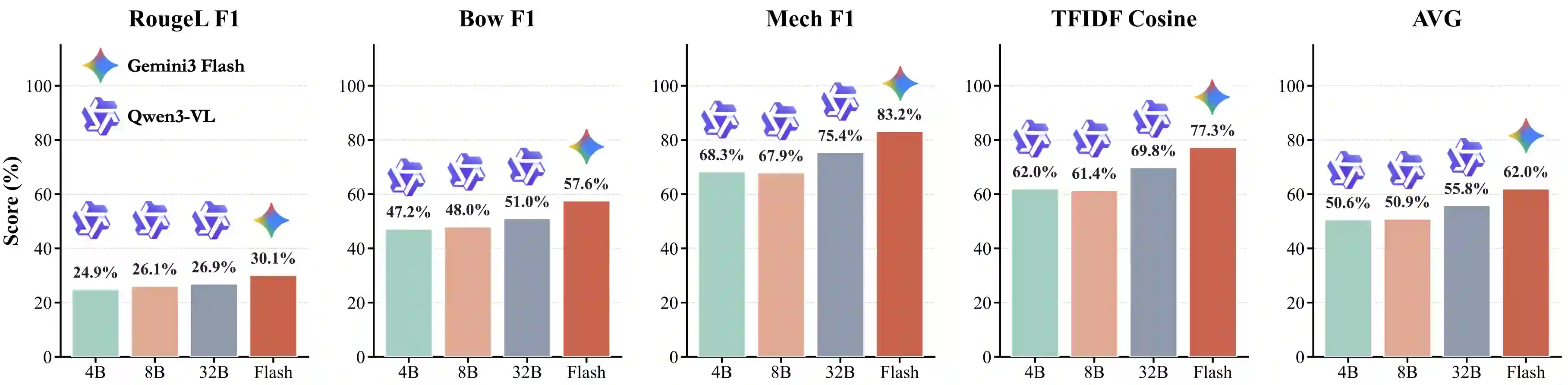

Large Language Models (LLMs) have demonstrated exceptional logical reasoning capabilities but frequently struggle with the continuous spatiotemporal dynamics governed by Partial Differential Equations (PDEs), often resulting in non-physical hallucinations. Existing approaches typically resort to costly, domain-specific fine-tuning, which severely limits cross-domain generalization and interpretability. To bridge this gap, we propose OMNIFLOW, a neuro-symbolic architecture designed to ground frozen multimodal LLMs in fundamental physical laws without requiring domain-specific parameter updates. OMNIFLOW introduces a novel *Semantic-Symbolic Alignment* mechanism that projects high-dimensional flow tensors into topological linguistic descriptors, enabling the model to perceive physical structures rather than raw pixel values. Furthermore, we construct a Physics-Guided Chain-of-Thought (PG-CoT) workflow that orchestrates reasoning through dynamic constraint injection (e.g., mass conservation) and iterative reflexive verification. We evaluate OMNIFLOW on a comprehensive benchmark spanning microscopic turbulence, theoretical Navier-Stokes equations, and macroscopic global weather forecasting. Empirical results demonstrate that OMNIFLOW significantly outperforms traditional deep learning baselines in zero-shot generalization and few-shot adaptation tasks. Crucially, it offers transparent, physically consistent reasoning reports, marking a paradigm shift from black-box fitting to interpretable scientific reasoning.
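To make the idea of constraint injection concrete, the following is a minimal sketch of what a mass-conservation check might look like for a 2D velocity field. It is not OMNIFLOW's implementation: it assumes an incompressible flow on a uniform grid, where mass conservation reduces to zero divergence, and the names `divergence` and `mass_conservation_report` are hypothetical. The text report it returns illustrates the kind of statement a verification step could feed back to a frozen LLM.

```python
from typing import List

Grid = List[List[float]]  # indexed as field[j][i], with j along y and i along x


def divergence(u: Grid, v: Grid, dx: float = 1.0, dy: float = 1.0) -> Grid:
    """Central-difference estimate of du/dx + dv/dy on interior grid points."""
    ny, nx = len(u), len(u[0])
    div = [[0.0] * (nx - 2) for _ in range(ny - 2)]
    for j in range(1, ny - 1):
        for i in range(1, nx - 1):
            du_dx = (u[j][i + 1] - u[j][i - 1]) / (2.0 * dx)
            dv_dy = (v[j + 1][i] - v[j - 1][i]) / (2.0 * dy)
            div[j - 1][i - 1] = du_dx + dv_dy
    return div


def mass_conservation_report(u: Grid, v: Grid, tol: float = 1e-6) -> str:
    """Summarize the worst divergence residual as a short text statement.

    Assumption: incompressible flow, so a nonzero divergence is a violation.
    """
    div = divergence(u, v)
    worst = max(abs(x) for row in div for x in row)
    status = "satisfied" if worst <= tol else "violated"
    return f"Mass conservation {status}: max |div u| = {worst:.3e}"


# Two illustrative fields on a 5x5 grid (hypothetical test data).
n = 5
# Solid-body rotation (u = -y, v = x): divergence-free.
rot_u = [[-float(j) for i in range(n)] for j in range(n)]
rot_v = [[float(i) for i in range(n)] for j in range(n)]
# Uniform source (u = x, v = y): divergence of 2 everywhere.
src_u = [[float(i) for i in range(n)] for j in range(n)]
src_v = [[float(j) for i in range(n)] for j in range(n)]

print(mass_conservation_report(rot_u, rot_v))
print(mass_conservation_report(src_u, src_v))
```

In a verification loop of the kind the abstract describes, a "violated" report would presumably trigger another reasoning pass, though the exact mechanism is not specified here.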
