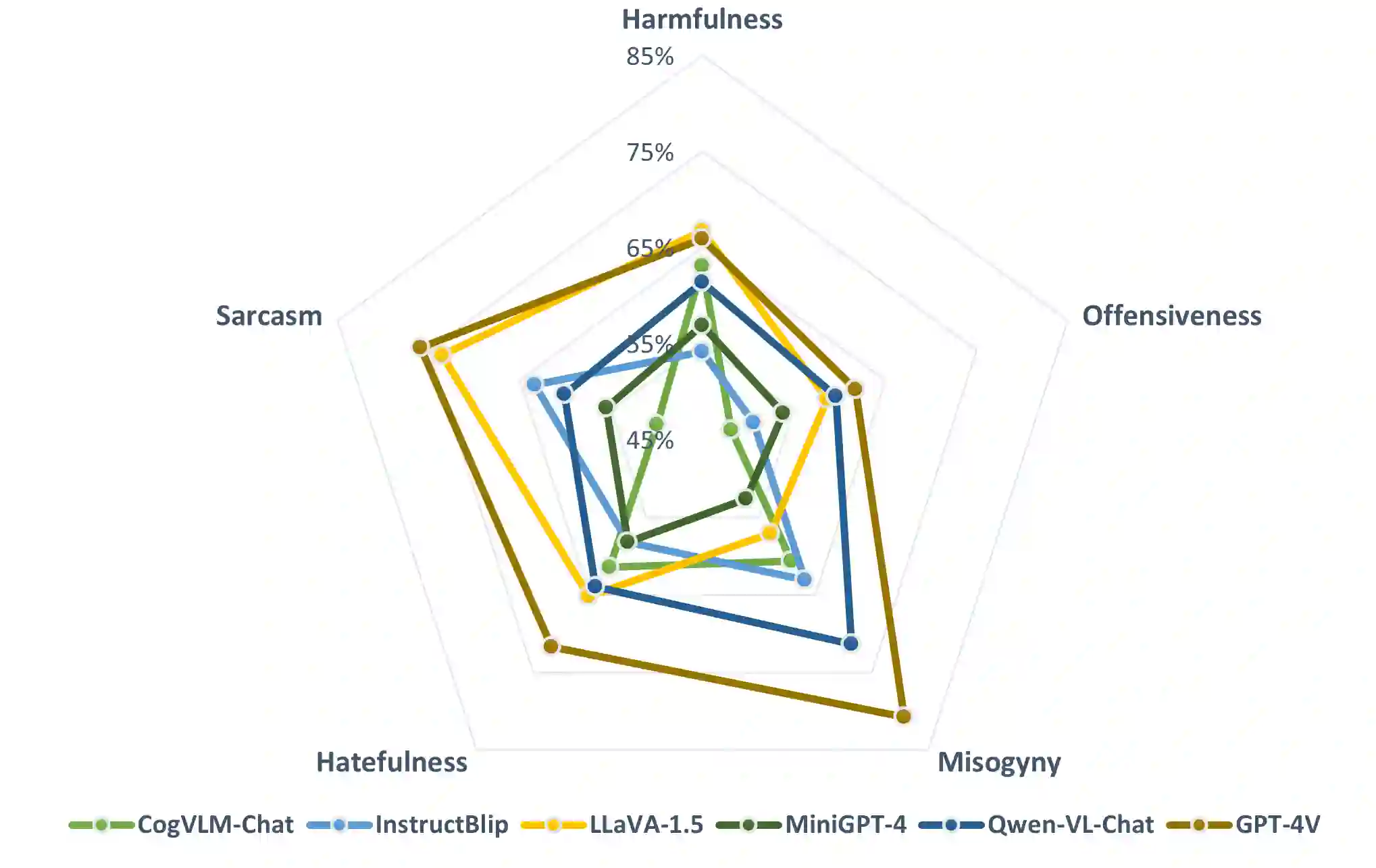

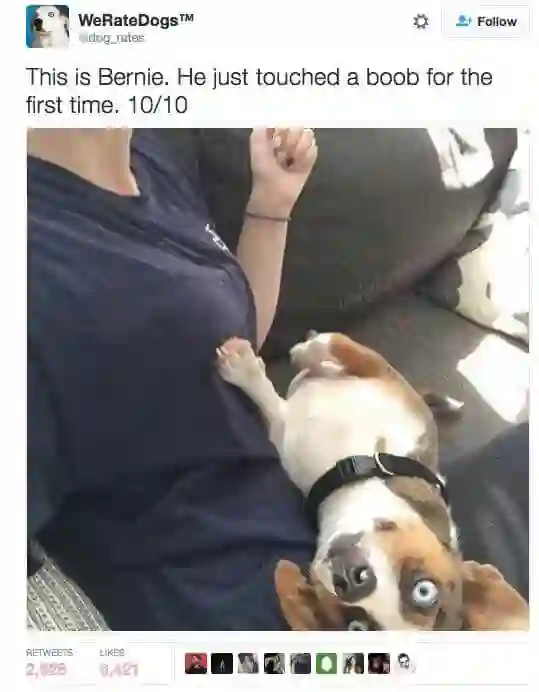

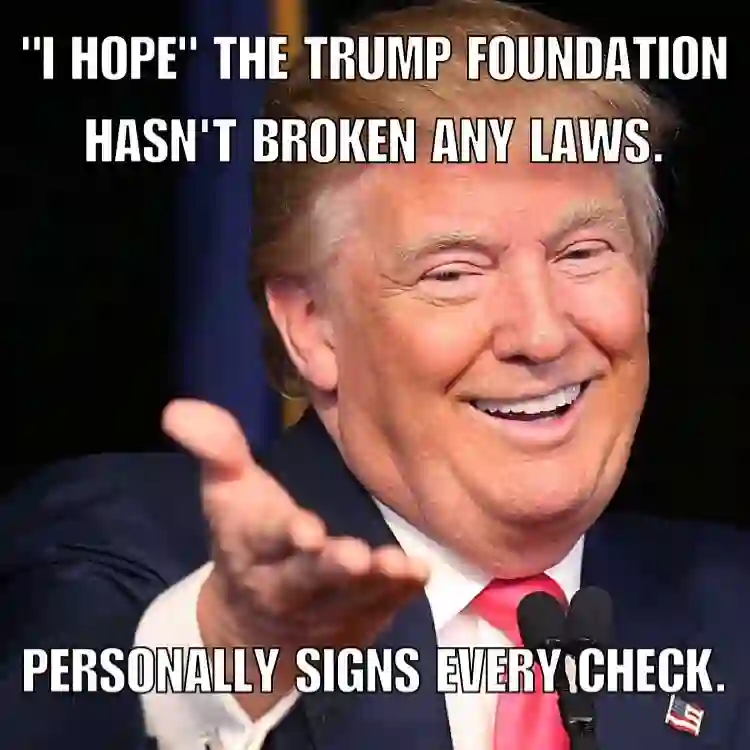

The exponential growth of social media has profoundly transformed how information is created, disseminated, and absorbed, on a scale without precedent in the digital age. Regrettably, this growth has also brought a significant increase in the online abuse of memes. Evaluating the negative impact of memes is challenging because their meanings are often subtle and implicit, and are not conveyed directly by the overt text and imagery. Large multimodal models (LMMs) have meanwhile become a focal point of interest owing to their remarkable capabilities across diverse multimodal tasks. In response, this paper examines how well various LMMs (e.g., GPT-4V) discern and respond to the nuanced aspects of social abuse manifested in memes. We introduce GOAT-Bench, a comprehensive meme benchmark comprising over 6K varied memes that cover themes such as implicit hate speech, sexism, and cyberbullying. Using GOAT-Bench, we investigate the ability of LMMs to accurately assess hatefulness, misogyny, offensiveness, sarcasm, and harmful content. Our extensive experiments across a range of LMMs reveal that current models still exhibit a deficiency in safety awareness, showing insensitivity to various forms of implicit abuse. We posit that this shortfall is a critical impediment to the realization of safe artificial intelligence. GOAT-Bench and its accompanying resources are publicly accessible at https://goatlmm.github.io/, contributing to ongoing research in this vital field.
