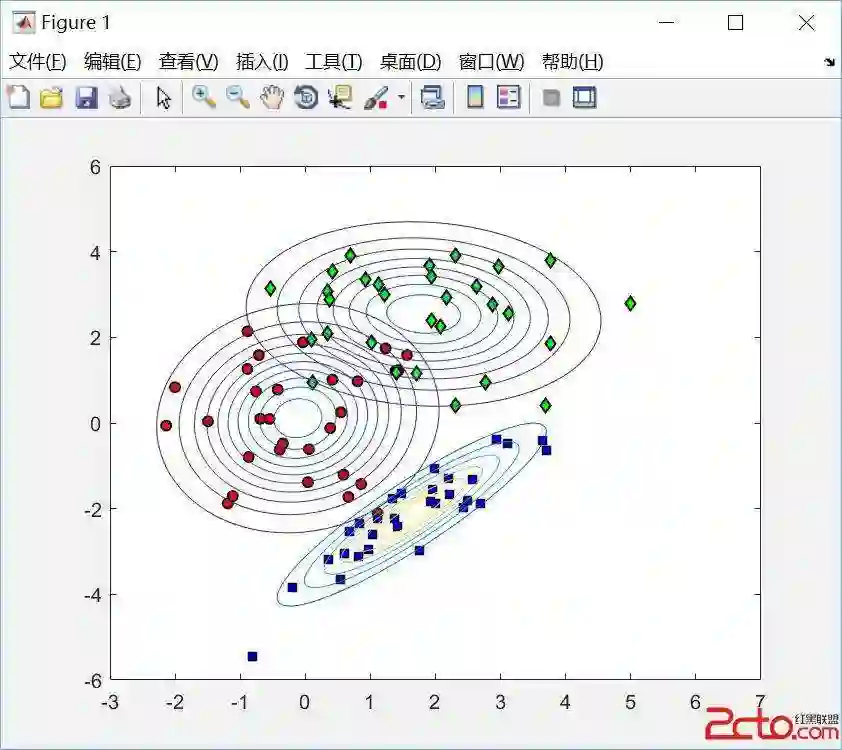

Many explainable AI methods now exist for understanding the decisions of a machine learning model. Among them are methods based on counterfactual reasoning, which simulate changes to feature values and observe the impact on the prediction. This article proposes viewing that simulation process as a source of knowledge that can be stored and later used in different ways. The process is illustrated for additive models and, more specifically, for the naive Bayes classifier, whose properties are shown to be well suited to this purpose.
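To make the idea concrete, here is a minimal sketch of counterfactual simulation on a naive Bayes classifier, written under stated assumptions rather than taken from the article. It assumes a binary classifier with categorical features, expressed in its additive log-odds form, where each feature value contributes a weight log P(x_j = v | c = 1) / P(x_j = v | c = 0). The feature names, weights, prior, and function names below are all hypothetical and chosen only for illustration. Because the model is additive, the effect of changing one feature is simply the difference between two weights, so every single-feature change can be simulated cheaply and kept as a record.

```python
import math

# Hypothetical toy model (all numbers made up for illustration):
# WEIGHTS[feature][value] = log( P(value | class 1) / P(value | class 0) )
WEIGHTS = {
    "age": {"young": -0.8, "middle": 0.1, "senior": 0.9},
    "plan": {"basic": -0.4, "premium": 0.6},
}
LOG_PRIOR_ODDS = -0.2  # log( P(class 1) / P(class 0) ), also made up


def log_odds(x):
    """Additive naive Bayes score: prior log-odds plus one weight per feature."""
    return LOG_PRIOR_ODDS + sum(WEIGHTS[f][v] for f, v in x.items())


def probability(score):
    """Convert a log-odds score into P(class 1 | x)."""
    return 1.0 / (1.0 + math.exp(-score))


def simulate_counterfactuals(x):
    """Try every single-feature change on x and record its effect."""
    base = log_odds(x)
    records = []
    for feature, current in x.items():
        for value, weight in WEIGHTS[feature].items():
            if value == current:
                continue
            # Additivity: only the changed feature's weight moves.
            delta = weight - WEIGHTS[feature][current]
            records.append({
                "feature": feature,
                "from": current,
                "to": value,
                "delta_log_odds": delta,
                "new_probability": probability(base + delta),
                "flips_class": (base > 0) != (base + delta > 0),
            })
    return records


example = {"age": "young", "plan": "basic"}
knowledge = simulate_counterfactuals(example)  # stored records, reusable later
for record in knowledge:
    print(record)
```

The list `knowledge` plays the role of the stored knowledge in this sketch: it can be filtered later, for example to find which single changes flip the predicted class or which one moves the score the most.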