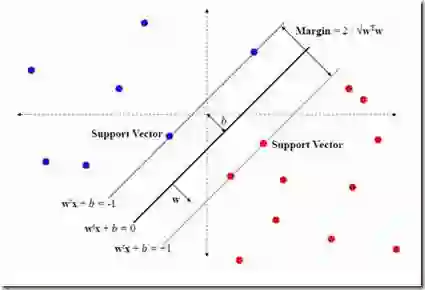

The increasing prevalence of Large Language Models (LLMs) demands effective safeguards for their operation, particularly against their tendency to generate out-of-context responses. A key challenge is accurately detecting when an LLM strays from expected conversational norms, which can manifest as topic shifts, factual inaccuracies, or outright hallucinations. Traditional anomaly detection is difficult to apply directly to contextual semantics. This paper describes our experiment in using Representation Engineering (RepE) and a One-Class Support Vector Machine (OCSVM) to identify subspaces within the internal states of LLMs that represent a specific context. By training the OCSVM on in-context examples, we establish a boundary within the latent space of the LLM's hidden states. We evaluate our approach with two open-source LLM families, Llama and Qwen, in a specific contextual domain. Our approach entails identifying the layers whose internal-state subspaces are most strongly associated with the context of interest. The evaluation showed promising results in identifying the subspace for a specific context. Besides being useful for detecting whether a conversation thread is in or out of context, this work contributes to the study of LLM interpretability.
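
The idea of fitting a one-class boundary per layer and then choosing the layer that best separates in-context from out-of-context inputs can be illustrated with a minimal sketch. This is not the authors' pipeline: synthetic Gaussian vectors stand in for real LLM hidden states, and the per-layer offsets in `SHIFTS`, the helper names `synthetic_states` and `score_layer`, and the use of balanced accuracy as the selection metric are all assumptions made for illustration. Only scikit-learn's `OneClassSVM` is a real API.

```python
import numpy as np
from sklearn.svm import OneClassSVM

rng = np.random.default_rng(0)
N_LAYERS, DIM = 4, 8

# Hypothetical offsets: how far out-of-context activations sit from
# in-context ones at each layer. Real offsets would come from the model.
SHIFTS = [0.0, 0.2, 3.0, 0.4]


def synthetic_states(n, shift):
    """Stand-in for one layer's hidden states: n vectors of size DIM."""
    return rng.normal(loc=shift, scale=1.0, size=(n, DIM))


def score_layer(train_in, test_in, test_out, nu=0.1):
    """Fit an OCSVM on in-context vectors only, then return balanced accuracy."""
    clf = OneClassSVM(kernel="rbf", gamma="scale", nu=nu).fit(train_in)
    in_acc = np.mean(clf.predict(test_in) == 1)     # +1 means inside the boundary
    out_acc = np.mean(clf.predict(test_out) == -1)  # -1 means outside the boundary
    return 0.5 * (in_acc + out_acc)


scores = []
for layer in range(N_LAYERS):
    train_in = synthetic_states(300, 0.0)           # in-context training examples
    test_in = synthetic_states(100, 0.0)            # held-out in-context examples
    test_out = synthetic_states(100, SHIFTS[layer]) # out-of-context examples
    scores.append(score_layer(train_in, test_in, test_out))

best_layer = int(np.argmax(scores))
print("balanced accuracy per layer:", np.round(scores, 3))
print("best layer:", best_layer)
```

With real models, the synthetic vectors would be replaced by hidden states extracted at each layer for in-context and out-of-context prompts; the choice of token position, pooling, and `nu` would need to be tuned and is not specified here.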
