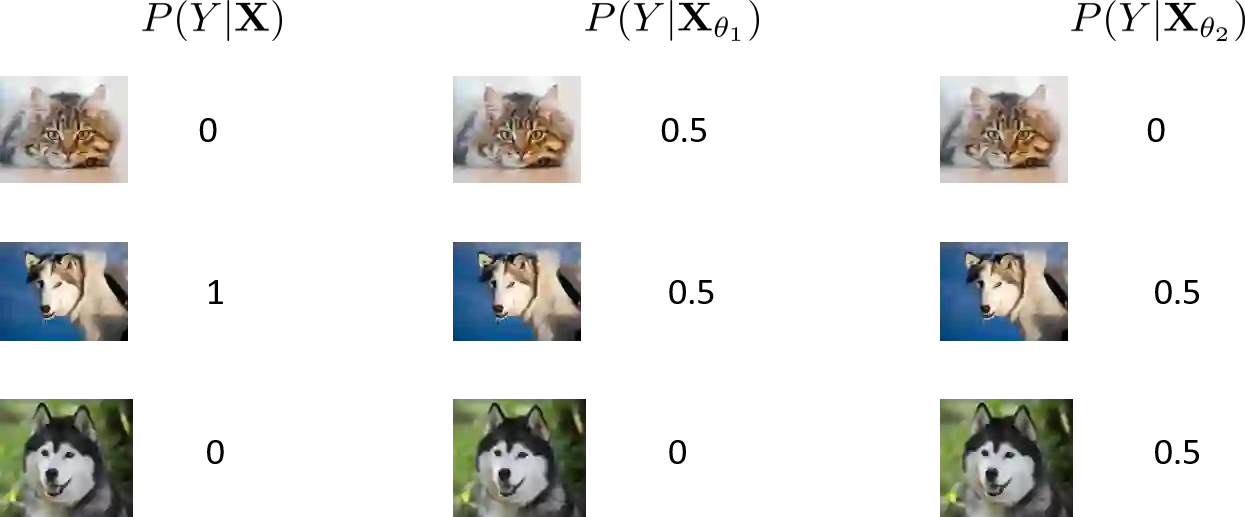

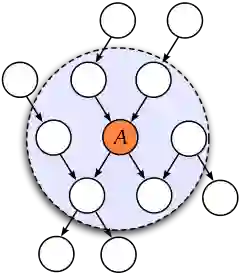

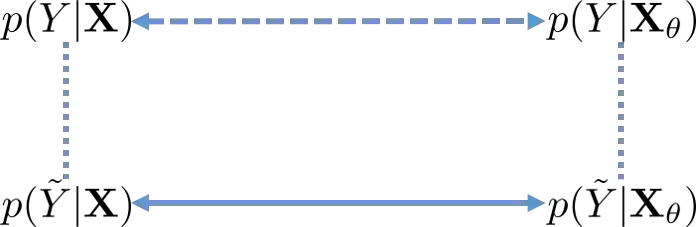

This paper presents a novel feature selection method for machine learning built on the Wasserstein distance. Unlike traditional methods based on correlation or Kullback-Leibler (KL) divergence, our approach uses the Wasserstein distance to assess feature similarity; this inherently captures relationships between classes and makes the method robust to noisy labels. We introduce a Markov blanket-based feature selection algorithm and demonstrate its effectiveness. Our analysis shows that the method reduces the impact of noisy labels without relying on a specific noise model, and we provide a lower bound on its effectiveness that remains meaningful in the presence of noise. Experiments on multiple datasets show that our approach consistently outperforms traditional methods, particularly in noisy settings.
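
To make the idea of scoring features with the Wasserstein distance concrete, here is a minimal sketch. It is not the Markov blanket-based algorithm from the paper, whose details the abstract does not give. It only ranks each feature by the 1-D Wasserstein-1 distance between its class-conditional samples. The names `wasserstein_1d` and `feature_scores`, the synthetic data, and the 20% label-flip rate are all illustrative assumptions.

```python
import random
from bisect import bisect_right


def wasserstein_1d(u, v):
    """1-D Wasserstein-1 distance between two empirical samples.

    Computed as the integral of |F_u(x) - F_v(x)| over x, where F_u and F_v
    are the empirical CDFs of the two samples.
    """
    u, v = sorted(u), sorted(v)
    grid = sorted(set(u) | set(v))
    total = 0.0
    for left, right in zip(grid, grid[1:]):
        cdf_u = bisect_right(u, left) / len(u)
        cdf_v = bisect_right(v, left) / len(v)
        total += abs(cdf_u - cdf_v) * (right - left)
    return total


def feature_scores(rows, labels):
    """Hypothetical helper: mean pairwise class-conditional distance per feature."""
    classes = sorted(set(labels))
    scores = []
    for j in range(len(rows[0])):
        by_class = {
            c: [r[j] for r, lab in zip(rows, labels) if lab == c] for c in classes
        }
        dists = [
            wasserstein_1d(by_class[a], by_class[b])
            for i, a in enumerate(classes)
            for b in classes[i + 1:]
        ]
        scores.append(sum(dists) / len(dists))
    return scores


# Synthetic data (assumption): feature 0 depends on the class, feature 1 is pure noise.
rng = random.Random(0)
clean = [rng.randint(0, 1) for _ in range(400)]
rows = [(rng.gauss(2.0 * c, 1.0), rng.gauss(0.0, 1.0)) for c in clean]

# Flip roughly 20% of the labels to mimic label noise (assumed noise rate).
noisy = [1 - c if rng.random() < 0.2 else c for c in clean]

scores = feature_scores(rows, noisy)
print(scores)  # one score per feature; a higher score means better class separation
```

In this toy setting the class-dependent feature should still score well above the noise feature after the labels are flipped, because flipping shrinks the gap between the class-conditional distributions without removing it. This illustrates the intuition only and is not evidence for the paper's lower bound.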
